Level 19: Rancher-Longhorn

Deploying Rancher-Longhorn, an open-source distributed storage service with 5.2k stars, on k8s

Self-hosted data center k8s

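What is Longhorn

Longhorn is a lightweight, reliable, and easy-to-use distributed block storage system for Kubernetes.

Longhorn is free, open-source software. It was originally developed by Rancher Labs and is now developed as an incubating project of the Cloud Native Computing Foundation (CNCF).

Official documentation: https://longhorn.io/docs/

If you do decide to run it in production, read through the documentation first, so that you understand how it works and how its backup and monitoring behave, and can keep your data from being lost.

With Longhorn, you can:

- Use Longhorn volumes as persistent storage for distributed stateful applications in a Kubernetes cluster

- Partition block storage into Longhorn volumes, so that you can use Kubernetes volumes with or without a cloud provider

- Replicate block storage across multiple nodes and data centers to improve availability

- Store backup data in external storage such as NFS, AWS S3 (cloud object storage), or a locally deployed minio

- Create cross-cluster disaster recovery volumes, so that data from a primary Kubernetes cluster can be restored quickly from a backup in a second Kubernetes cluster

- Schedule recurring snapshots of a volume, and schedule recurring backups to NFS or S3-compatible secondary storage

- Restore volumes from backup

- Upgrade Longhorn without disrupting persistent volumes

Longhorn comes with a standalone UI and can be installed using Helm, kubectl, or the Rancher app catalog.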

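Longhorn's underlying storage protocol

iSCSI (Internet Small Computer Systems Interface) is a network protocol that carries SCSI commands over TCP/IP networks, allowing two computers to perform remote storage and retrieval operations. iSCSI is a popular storage area network (SAN) technology, widely used to connect storage devices such as disk arrays and tape libraries to servers and data centers.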
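Because Longhorn relies on iSCSI, every node needs an iSCSI initiator before installation (the Longhorn installation requirements list open-iscsi). The following is a minimal sketch of a per-node check, not an official tool: `missing_tools` is a hypothetical helper name, and it assumes the initiator CLI is the `iscsiadm` binary shipped by open-iscsi.

```shell
#!/bin/sh
# Print every tool from the argument list that is not found in PATH.
# missing_tools is a hypothetical helper, not part of Longhorn.
missing_tools() {
    for tool in "$@"; do
        command -v "$tool" >/dev/null 2>&1 || printf '%s\n' "$tool"
    done
}

# Run on each node. An empty result means the iSCSI initiator CLI is present.
# Assumption: open-iscsi installs its CLI as "iscsiadm".
missing=$(missing_tools iscsiadm)
if [ -n "$missing" ]; then
    echo "missing on $(uname -n): $missing"
fi
```

If the check prints a node name, install the open-iscsi package for that distribution before continuing.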

Deploying Longhorn on K8S

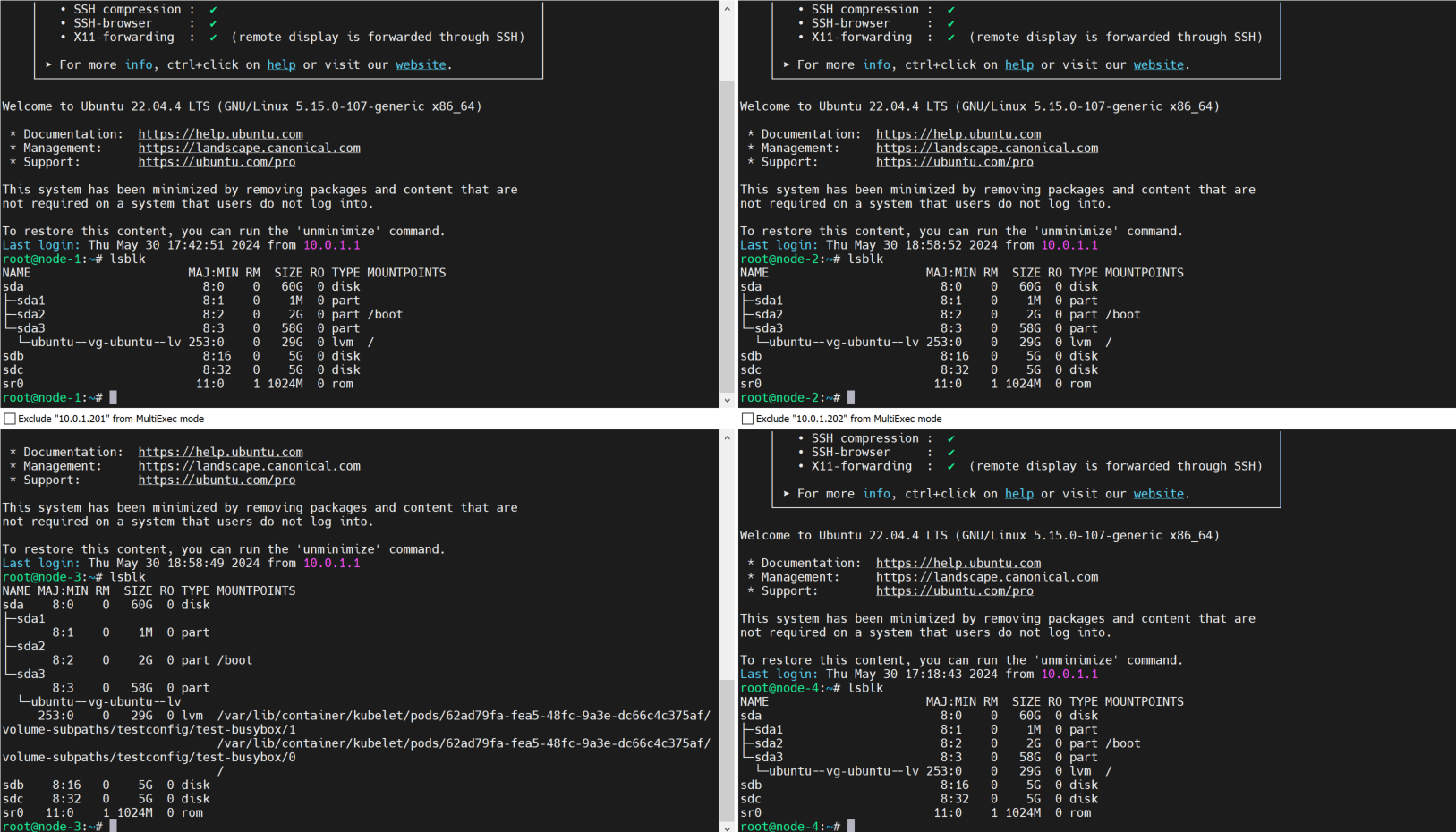

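Add two disks to every node in the cluster and mount them

If you have an on-premises physical k8s environment, you can add two extra disks to each machine.

For virtual machines, add two new 5G disks to each machine in VMware.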

lsblk

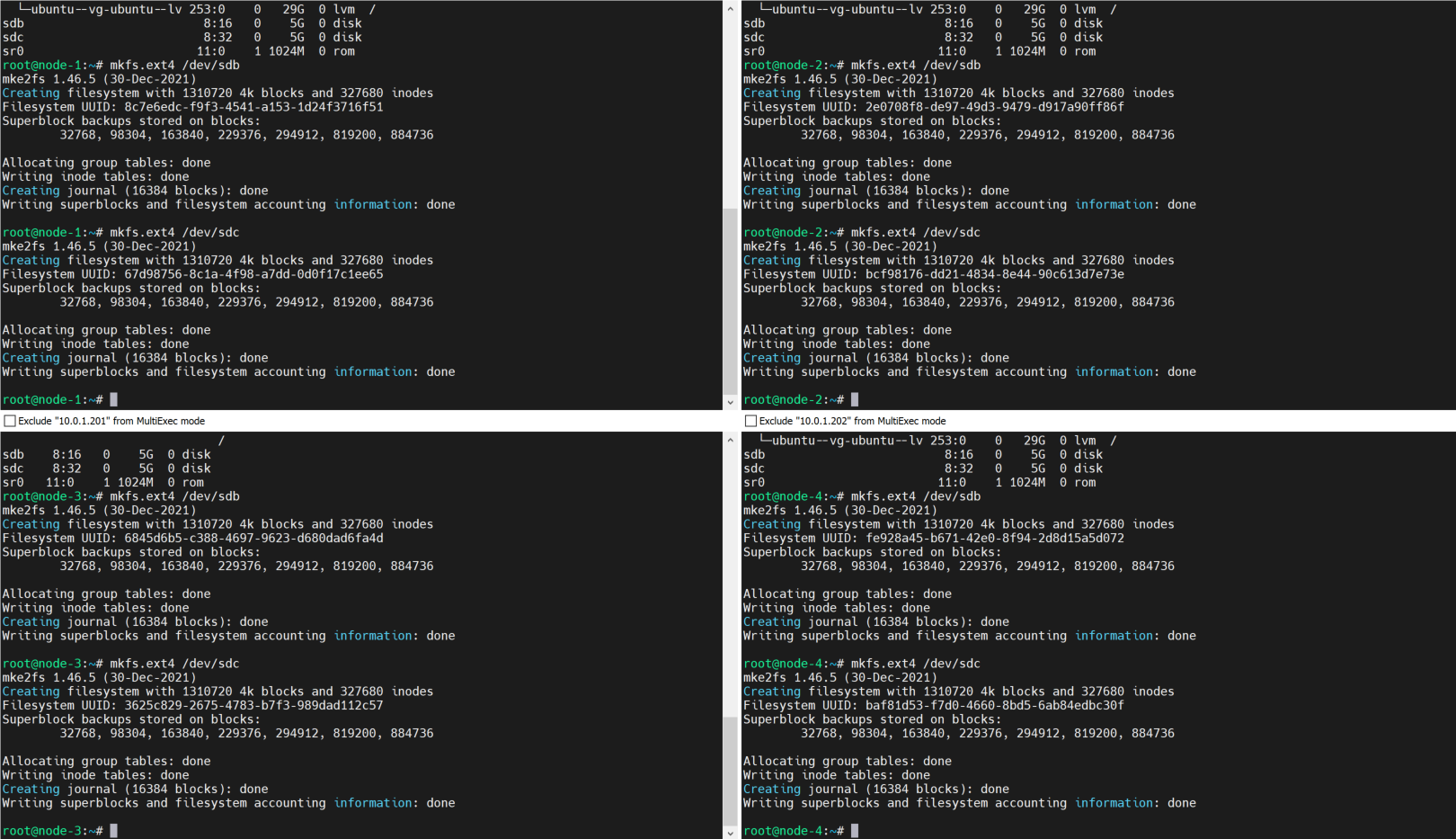

Format the disks on every node

mkfs.ext4 /dev/sdb

mkfs.ext4 /dev/sdc

# Create the mount points

mkdir /mnt/longhorn-sdb

mkdir /mnt/longhorn-sdc

# Add the mounts to /etc/fstab so they are mounted at boot

# cat /etc/fstab

/dev/sdb /mnt/longhorn-sdb ext4 defaults 0 1

/dev/sdc /mnt/longhorn-sdc ext4 defaults 0 1

#

# Mount everything listed in /etc/fstab

# mount -a

# df -Th|grep -E 'longhorn-sdb|longhorn-sdc'

/dev/sdb ext4 4.9G 24K 4.6G 1% /mnt/longhorn-sdb

/dev/sdc ext4 4.9G 24K 4.6G 1% /mnt/longhorn-sdc
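Device names such as /dev/sdb can change between boots when disks are added or reordered, so mounting by filesystem UUID is a common, more robust alternative to the entries above. The sketch below is only an illustration: `fstab_line` is a hypothetical helper, and it uses a pass number of 2 because fstab(5) recommends 2 for filesystems other than the root filesystem.

```shell
#!/bin/sh
# Build one /etc/fstab line that mounts an ext4 filesystem by UUID.
# fstab_line is a hypothetical helper: $1 = filesystem UUID, $2 = mount point.
fstab_line() {
    printf 'UUID=%s %s ext4 defaults 0 2\n' "$1" "$2"
}

# Usage on a node (needs root; blkid reads the UUID written by mkfs.ext4):
#   fstab_line "$(blkid -s UUID -o value /dev/sdb)" /mnt/longhorn-sdb >> /etc/fstab
#   fstab_line "$(blkid -s UUID -o value /dev/sdc)" /mnt/longhorn-sdc >> /etc/fstab
#   mount -a
```

The mount points stay the same, so the Longhorn disk paths configured afterwards do not change.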

# Register the disks on every k8s node and label the node, so that Longhorn knows which disks on that node to use for distributed storage

# Configure the node (repeat for every node)

# kubectl edit node 10.0.1.201

metadata:
  labels:
    node.longhorn.io/create-default-disk: "config"
  annotations:
    node.longhorn.io/default-disks-config: '[
        {
          "path":"/mnt/longhorn-sdb",
          "allowScheduling":true
        },
        {
          "path":"/mnt/longhorn-sdc",
          "allowScheduling":true
        }
      ]'
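Editing every node by hand is error-prone, so the same label and annotation can also be applied non-interactively with `kubectl label` and `kubectl annotate`. This is a sketch under assumptions: `disks_config` and `configure_node` are hypothetical helper names, and the disk paths are the ones mounted earlier.

```shell
#!/bin/sh
# Emit the default-disks-config JSON for the given mount paths.
# disks_config is a hypothetical helper, not a Longhorn command.
disks_config() {
    sep=''
    printf '['
    for path in "$@"; do
        printf '%s{"path":"%s","allowScheduling":true}' "$sep" "$path"
        sep=','
    done
    printf ']\n'
}

# Label and annotate one node so Longhorn creates its default disks there.
# $1 = node name, remaining arguments = disk mount paths.
configure_node() {
    node=$1
    shift
    kubectl label node "$node" --overwrite \
        node.longhorn.io/create-default-disk=config
    kubectl annotate node "$node" --overwrite \
        "node.longhorn.io/default-disks-config=$(disks_config "$@")"
}

# Usage, with the node names used in this walkthrough:
#   for n in 10.0.1.201 10.0.1.202 10.0.1.203 10.0.1.204; do
#       configure_node "$n" /mnt/longhorn-sdb /mnt/longhorn-sdc
#   done
```

Generating the JSON from the path list keeps the annotation identical on every node, which is harder to guarantee when pasting it into an editor.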

# Install the Longhorn service with helm

wget -O longhorn-1.5.3.zip https://github.com/longhorn/longhorn/archive/refs/tags/v1.5.3.zip

unzip longhorn-1.5.3.zip

rm longhorn-1.5.3.zip

cd longhorn-1.5.3/

Check that helm is installed and symlinked

# helm version

version.BuildInfo{Version:"v3.12.3", GitCommit:"3a31588ad33fe3b89af5a2a54ee1d25bfe6eaa5e", GitTreeState:"clean", GoVersion:"go1.20.7"}

# Do a dry run first; this prints the rendered YAML manifests

# helm install longhorn ./chart/ --namespace longhorn-system --create-namespace --set defaultSettings.createDefaultDiskLabeledNodes=true --dry-run --debug

# Run the actual installation

# helm install longhorn ./chart/ --namespace longhorn-system --create-namespace --set defaultSettings.createDefaultDiskLabeledNodes=true

# kubectl -n longhorn-system get pod -o wide -w

After 12 minutes, only longhorn-csi-plugin and longhorn-manager-rjjzr on 10.0.1.201 were still pulling images.
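Since image pulls can take this long, it can be more convenient to block until the rollout finishes than to watch the pod list. A minimal sketch, assuming the chart deploys longhorn-manager as a DaemonSet (which matches the per-node longhorn-manager-rjjzr pod name above); `wait_for_longhorn` is a hypothetical helper and the 20m default timeout is an arbitrary choice.

```shell
#!/bin/sh
# Wait until the longhorn-manager DaemonSet has rolled out on all nodes.
# wait_for_longhorn is a hypothetical helper; $1 = optional timeout (default 20m).
wait_for_longhorn() {
    kubectl -n longhorn-system rollout status daemonset/longhorn-manager \
        --timeout="${1:-20m}"
}

# Usage:
#   wait_for_longhorn        # waits up to 20 minutes
#   wait_for_longhorn 30m    # waits up to 30 minutes
```

The command exits non-zero on timeout, so it can gate the next step in a script.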

# Test mounting storage

# kubectl get sc

NAME                 PROVISIONER          RECLAIMPOLICY   VOLUMEBINDINGMODE   ALLOWVOLUMEEXPANSION   AGE
longhorn (default)   driver.longhorn.io   Delete          Immediate           true                   3h9m
nfs-boge             nfs-provisioner-01   Retain          Immediate           false                  19h

View the UI

Map the svc to a port on the host

kubectl -n longhorn-system edit svc longhorn-frontend

type: NodePort

# Change the UI svc type to NodePort to view the page; in production you can set up an ingress instead

# kubectl -n longhorn-system get svc longhorn-frontend

NAME                TYPE       CLUSTER-IP     EXTERNAL-IP   PORT(S)        AGE
longhorn-frontend   NodePort   10.68.135.61   <none>        80:31408/TCP   68m
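The interactive edit can also be done as a one-line patch, which is easier to repeat or script. This is a sketch only: `expose_ui` and `ui_node_port` are hypothetical helper names, and the service name and namespace are taken from the output above.

```shell
#!/bin/sh
# Switch the Longhorn UI service from ClusterIP to NodePort without an editor.
expose_ui() {
    kubectl -n longhorn-system patch svc longhorn-frontend \
        -p '{"spec":{"type":"NodePort"}}'
}

# Print the node port Kubernetes assigned to the UI service.
ui_node_port() {
    kubectl -n longhorn-system get svc longhorn-frontend \
        -o jsonpath='{.spec.ports[0].nodePort}'
}

# Usage:
#   expose_ui
#   echo "UI: http://<node-ip>:$(ui_node_port)"
```

The node port is assigned by the cluster unless one is set explicitly, so reading it back avoids hard-coding it.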

# kubectl -n longhorn-system get svc

NAME                          TYPE        CLUSTER-IP     EXTERNAL-IP   PORT(S)        AGE
longhorn-admission-webhook    ClusterIP   10.68.31.18    <none>        9502/TCP       10m
longhorn-backend              ClusterIP   10.68.128.26   <none>        9500/TCP       10m
longhorn-conversion-webhook   ClusterIP   10.68.150.68   <none>        9501/TCP       10m
longhorn-engine-manager       ClusterIP   None           <none>        <none>         10m
longhorn-frontend             ClusterIP   10.68.56.104   <none>        80/TCP         10m
longhorn-recovery-backend     ClusterIP   10.68.30.50    <none>        9503/TCP       10m
longhorn-replica-manager      ClusterIP   None           <none>        <none>         10m

# kubectl -n longhorn-system edit svc longhorn-frontend

type: NodePort

# kubectl -n longhorn-system get svc

NAME                          TYPE        CLUSTER-IP     EXTERNAL-IP   PORT(S)        AGE
longhorn-admission-webhook    ClusterIP   10.68.31.18    <none>        9502/TCP       11m
longhorn-backend              ClusterIP   10.68.128.26   <none>        9500/TCP       11m
longhorn-conversion-webhook   ClusterIP   10.68.150.68   <none>        9501/TCP       11m
longhorn-engine-manager       ClusterIP   None           <none>        <none>         11m
longhorn-frontend             NodePort    10.68.56.104   <none>        80:31121/TCP   11m
longhorn-recovery-backend     ClusterIP   10.68.30.50    <none>        9503/TCP       11m
longhorn-replica-manager      ClusterIP   None           <none>        <none>         11m

Open the UI at 10.0.1.201:31121

Not schedulable: 10.0.1.201, 10.0.1.202

Schedulable: 10.0.1.203

Missing: 10.0.1.204

After rebooting all nodes:

Not schedulable: 10.0.1.201, 10.0.1.202

Schedulable: 10.0.1.203, 10.0.1.204

Test

# cat test-longhron-pv.yaml

kind: PersistentVolumeClaim
apiVersion: v1
metadata:
  name: test-claim
spec:
  storageClassName: longhorn
  accessModes:
  - ReadWriteMany
  resources:
    requests:
      storage: 1Mi # in testing, the smallest size actually allocated was 10Mi
---
kind: Pod
apiVersion: v1
metadata:
  name: test
spec:
  containers:
  - name: test
    # image: busybox:1.28.4
    image: registry.cn-shanghai.aliyuncs.com/acs/busybox:v1.29.2
    imagePullPolicy: IfNotPresent
    command:
    - "/bin/sh"
    args:
    - "-c"
    - "echo 'hello k8s' > /mnt/SUCCESS && sleep 36000 || exit 1"
    volumeMounts:
    - name: longhorn-pvc
      mountPath: "/mnt"
  restartPolicy: "Never"
  volumes:
  - name: longhorn-pvc
    persistentVolumeClaim:
      claimName: test-claim

# Create the namespace

kubectl create ns test-longhron-pv

# Deploy

kubectl -n test-longhron-pv apply -f test-longhron-pv.yaml

# Check the Pod

kubectl -n test-longhron-pv get pod -o wide

# Show the pod details

kubectl -n test-longhron-pv describe pod test

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Warning FailedScheduling 2m10s default-scheduler 0/4 nodes are available: pod has unbound immediate PersistentVolumeClaims. preemption: 0/4 nodes are available: 4 No preemption victims found for incoming pod..

Warning FailedScheduling 2m6s default-scheduler 0/4 nodes are available: pod has unbound immediate PersistentVolumeClaims. preemption: 0/4 nodes are available: 4 No preemption victims found for incoming pod..

Normal Scheduled 2m3s default-scheduler Successfully assigned test-longhron-pv/test to 10.0.1.203

Warning FailedAttachVolume 30s attachdetach-controller AttachVolume.Attach failed for volume "pvc-2e9869a1-e128-41e6-a7c8-4d3dab399a5d" : rpc error: code = Internal desc = volume pvc-2e9869a1-e128-41e6-a7c8-4d3dab399a5d failed to attach to node 10.0.1.203 with attachmentID csi-1c42dde29e99de14bcc56fcd827b278d8e5a4789436769ce1328b59f98d1b1b4: Waiting for volume share to be available

Normal SuccessfulAttachVolume 29s attachdetach-controller AttachVolume.Attach succeeded for volume "pvc-2e9869a1-e128-41e6-a7c8-4d3dab399a5d"

Normal Pulled 24s kubelet Container image "registry.cn-shanghai.aliyuncs.com/acs/busybox:v1.29.2" already present on machine

Normal Created 24s kubelet Created container test

Normal Started 24s kubelet Started container test

If creation takes a long time, the volume may have failed to mount; delete the pod and start it again.
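The "Waiting for volume share to be available" event relates to the ReadWriteMany access mode: as far as the Longhorn documentation describes it, RWX volumes are served through a share-manager pod in the longhorn-system namespace, and the attach is retried until that share is up. The sketch below shows one way to wait for the test pod and to inspect those pods. Both function names are hypothetical, and the `share-manager` name prefix is an assumption about how Longhorn names these pods.

```shell
#!/bin/sh
# Block until the test pod is Ready, or give up after the timeout.
# $1 = optional timeout (default 5m).
wait_for_test_pod() {
    kubectl -n test-longhron-pv wait --for=condition=Ready pod/test \
        --timeout="${1:-5m}"
}

# List the pods that serve RWX volumes.
# Assumption: their names start with "share-manager".
share_manager_pods() {
    kubectl -n longhorn-system get pods -o wide | grep share-manager
}

# Usage:
#   wait_for_test_pod || share_manager_pods
```

If the wait times out and no share-manager pod is running, deleting and recreating the test pod as described above is a reasonable next step.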

# Writes are strictly limited to the volume size

# kubectl -n test-longhron-pv exec -it test -- sh

/ # cd /mnt/

/mnt # ls -lh

total 13

-rw-r--r-- 1 root root 10 Dec 8 04:11 SUCCESS

drwx------ 2 root root 12.0K Dec 8 04:11 lost+found

# Write a file made of 11 blocks of 1M each

/mnt # dd if=/dev/zero of=./test.log bs=1M count=11

dd: ./test.log: No space left on device

Longhorn monitoring

https://longhorn.io/docs/1.5.3/monitoring/

Longhorn backup and restore

https://longhorn.io/docs/1.5.3/snapshots-and-backups/