prometheus-rules

1

https://blog.itmonkey.icu/2020/11/09/prometheus-operator-servicemanager/

使用prometheusrules自定义创建告警规则

介绍

首先这篇文章是跟着上一篇helm 部署prometheus-operator来的,部署完成之后,我们就需要自定义一些配置。

这篇文章主要讲解如何自定义告警规则,如何让prometheus发现他。

步骤

- 添加prometheusrules规则

- 验证

名词解释

prometheusrules,也是安装好prometheus-operator后创建的一种自定义资源,我们可以看下默认自带了哪些规则:

[root@localhost]# kubectl get prometheusrules -n monitoring

NAME AGE

prometheus-operator-me-alertmanager.rules 2d23h

prometheus-operator-me-etcd 2d23h

prometheus-operator-me-general.rules 2d23h

prometheus-operator-me-k8s.rules 2d23h

prometheus-operator-me-kube-apiserver-availability.rules 2d23h

prometheus-operator-me-kube-apiserver-slos 2d23h

prometheus-operator-me-kube-apiserver.rules 2d23h

prometheus-operator-me-kube-prometheus-general.rules 2d23h

prometheus-operator-me-kube-prometheus-node-recording.rules 2d23h

prometheus-operator-me-kube-scheduler.rules 2d23h

prometheus-operator-me-kube-state-metrics 2d23h

prometheus-operator-me-kubelet.rules 2d23h

prometheus-operator-me-kubernetes-apps 2d23h

prometheus-operator-me-kubernetes-resources 2d23h

prometheus-operator-me-kubernetes-storage 2d23h

prometheus-operator-me-kubernetes-system 2d23h

prometheus-operator-me-kubernetes-system-apiserver 2d23h

prometheus-operator-me-kubernetes-system-controller-manager 2d23h

prometheus-operator-me-kubernetes-system-kubelet 2d23h

prometheus-operator-me-kubernetes-system-scheduler 2d23h

prometheus-operator-me-node-exporter 2d23h

prometheus-operator-me-node-exporter.rules 2d23h

prometheus-operator-me-node-network 2d23h

prometheus-operator-me-node.rules 2d23h

prometheus-operator-me-prometheus 2d23h

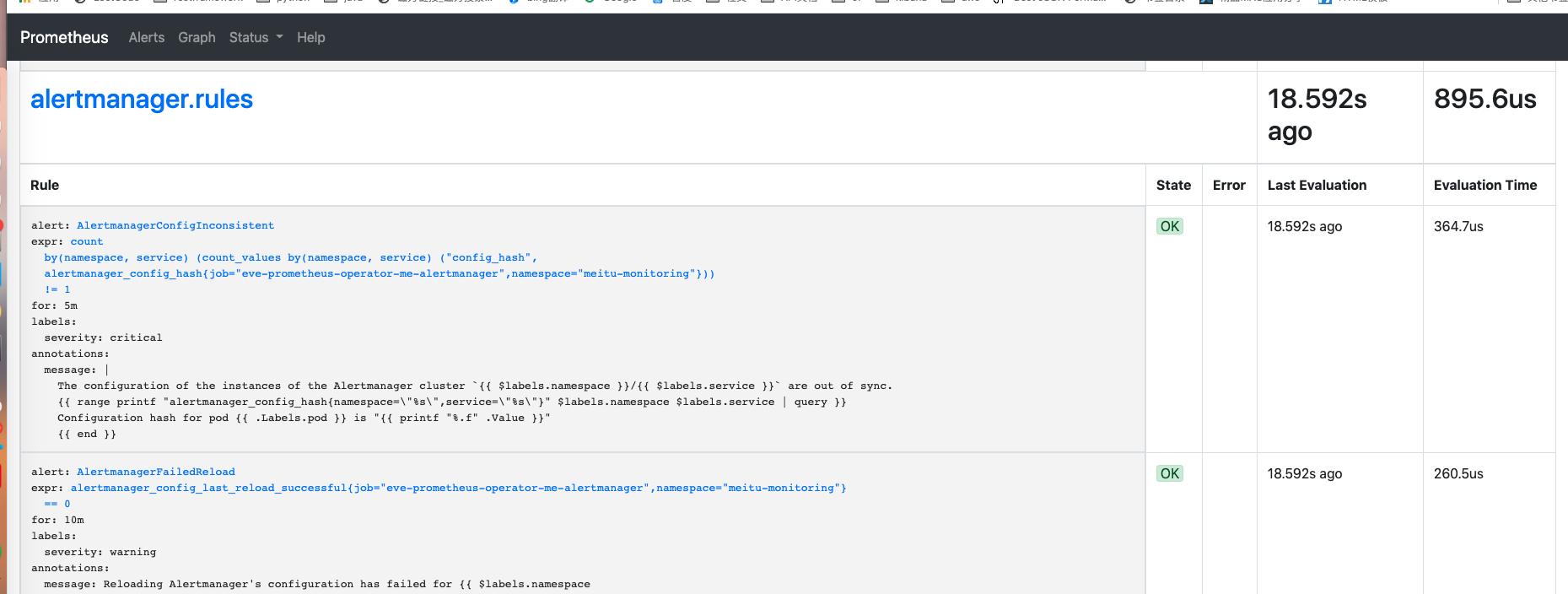

prometheus-operator-me-prometheus-operator 2d23h当然这些规则,你也可以在prometheus的界面上看到,具体也就是对应一个一个的rules

开始

①添加prometheusrules规则

创建自定义rules文件

[root@localhost]# cat demo1.yaml

apiVersion: monitoring.coreos.com/v1

kind: PrometheusRule

metadata:

labels:

app: prometheus-operator

release: eve-prometheus-operator

name: testtalus-rules-1

namespace: lb6

spec:

groups:

- name: testtalus.rules

rules:

- alert: processorNatGatewayMonitor_snat_to_hight_100

expr: processorNatGatewayMonitor_snat > 100

for: 1m

labels:

severity: warning

annotations:

summary: "nat gateway {{ $labels.natgatewayid }} snat连接数过高"

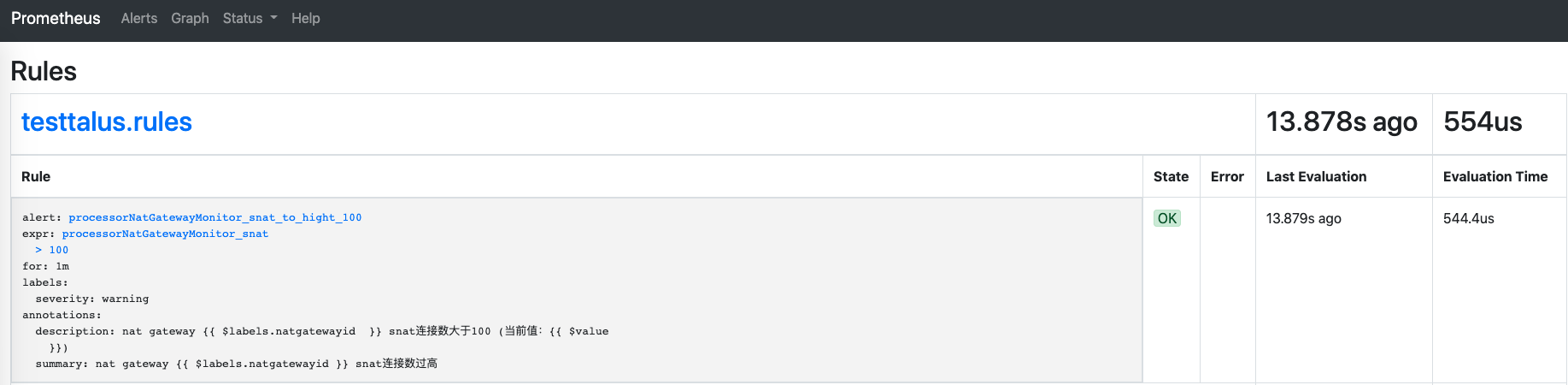

description: "nat gateway {{ $labels.natgatewayid }} snat连接数大于100 (当前值:{{ $value }})"具体的指标不解释了,这个文档一大堆,简单说下groups.name这个,就是一个组名,然后下面有很多很多的规则,比如当前processorNatGatewayMonitor_snat_to_hight_100就是testtalus.rules这个组里面的一个指标而已。

开始创建:

[root@localhost]# kubectl delete prometheusrules testtalus-rules-1 -n lb6

prometheusrule.monitoring.coreos.com "testtalus-rules-1" deleted如果你这里报错,并且报错信息如下:

[root@localhost]# kubectl apply -f demo1.yaml

Error from server (InternalError): error when creating "demo1.yaml": Internal error occurred: failed calling webhook "prometheusrulemutate.monitoring.coreos.com": Post https://prometheus-operator-me-operator.meitu-monitoring.svc:443/admission-prometheusrules/mutate?timeout=30s: context deadline exceeded (Client.Timeout exceeded while awaiting headers)那么在这里找答案:跳转

我的解决方案是:删除资源validatingwebhookconfigurations.admissionregistration.k8s.io和MutatingWebhookConfiguration,并且重新创建你的rules

[root@localhost]# kubectl get validatingwebhookconfigurations.admissionregistration.k8s.io

NAME CREATED AT

prometheus-operator-me-admission 2020-11-06T10:47:12Z

[root@localhost]# kubectl get MutatingWebhookConfiguration

NAME CREATED AT

prometheus-operator-me-admission 2020-11-06T10:47:12Z

pod-ready.config.common-webhooks.networking.gke.io 2020-02-25T13:52:06Z

[root@localhost]# kubectl delete validatingwebhookconfigurations.admissionregistration.k8s.io eve-prometheus-operator-me-admission

validatingwebhookconfiguration.admissionregistration.k8s.io "eve-prometheus-operator-me-admission" deleted

[root@localhost]# kubectl delete MutatingWebhookConfiguration eve-prometheus-operator-me-admission

mutatingwebhookconfiguration.admissionregistration.k8s.io "eve-prometheus-operator-me-admission" deleted②验证

到prometheus的rules界面,你就可以看到你自定义的规则了

2

https://segmentfault.com/a/1190000040639025

prometheus-operator使用(四) -- 自定义报警规则prometheurule

传统的prometheus单进程部署模式下,我们如何定义报警规则:

- 修改配置文件prometheus.yaml,增加报警规则定义;

- POST /-/reload让配置生效;

在prometheus-operator部署模式下,我们仅需定义prometheusrule资源对象即可,operator监听到prometheusrule资源对象被创建,会自动为我们添加告警规则文件,自动reload。

1. 默认的告警规则

prometheus-operator部署出来的prometheus默认已经有一些规则,在prometheus-k8s-0这个pod的目录下面:

# kubectl exec -it prometheus-k8s-0 /bin/sh -n monitoring

Defaulting container name to prometheus.

Use 'kubectl describe pod/prometheus-k8s-0 -n monitoring' to see all of the containers in this pod.

/prometheus $ ls -l /etc/prometheus/rules/prometheus-k8s-rulefiles-0/

monitoring-prometheus-k8s-rules.yaml

/prometheus $ ls /etc/prometheus/rules/prometheus-k8s-rulefiles-0/ -l

total 32

lrwxrwxrwx 1 root 2000 83 Jul 5 05:47 monitoring-alertmanager-main-rules-b254413c-d5fa-4518-947a-a80613bc1051.yaml -> ..data/monitoring-alertmanager-main-rules-b254413c-d5fa-4518-947a-a80613bc1051.yaml

lrwxrwxrwx 1 root 2000 73 Jul 5 05:47 monitoring-grafana-rules-24dffb10-7e71-475f-9a7d-14fa94d5b672.yaml -> ..data/monitoring-grafana-rules-24dffb10-7e71-475f-9a7d-14fa94d5b672.yaml

lrwxrwxrwx 1 root 2000 81 Jul 5 05:47 monitoring-kube-prometheus-rules-677a8ef2-4079-4957-9174-8b1162724d1f.yaml -> ..data/monitoring-kube-prometheus-rules-677a8ef2-4079-4957-9174-8b1162724d1f.yaml

lrwxrwxrwx 1 root 2000 84 Jul 5 05:47 monitoring-kube-state-metrics-rules-38790470-6a90-4fd6-b729-9ea39f070b85.yaml -> ..data/monitoring-kube-state-metrics-rules-38790470-6a90-4fd6-b729-9ea39f070b85.yaml

lrwxrwxrwx 1 root 2000 87 Jul 5 05:47 monitoring-kubernetes-monitoring-rules-beb41dea-c0bb-4930-b8da-18456f830298.yaml -> ..data/monitoring-kubernetes-monitoring-rules-beb41dea-c0bb-4930-b8da-18456f830298.yaml

lrwxrwxrwx 1 root 2000 79 Jul 5 05:47 monitoring-node-exporter-rules-4f9cb55a-aa24-42c6-bec5-a879d54842b1.yaml -> ..data/monitoring-node-exporter-rules-4f9cb55a-aa24-42c6-bec5-a879d54842b1.yaml

lrwxrwxrwx 1 root 2000 91 Jul 5 05:47 monitoring-prometheus-k8s-prometheus-rules-372349a2-a0cb-472e-b81a-41ad170a1f30.yaml -> ..data/monitoring-prometheus-k8s-prometheus-rules-372349a2-a0cb-472e-b81a-41ad170a1f30.yaml

lrwxrwxrwx 1 root 2000 85 Jul 5 05:47 monitoring-prometheus-operator-rules-60f40ae6-78f0-4b8f-b7da-cb4013d581f1.yaml -> ..data/monitoring-prometheus-operator-rules-60f40ae6-78f0-4b8f-b7da-cb4013d581f1.yaml

/prometheus $ ls -l /etc/prometheus/rules/prometheus-k8s-rulefiles-0/

total 32

lrwxrwxrwx 1 root 2000 83 Jul 5 05:47 monitoring-alertmanager-main-rules-b254413c-d5fa-4518-947a-a80613bc1051.yaml -> ..data/monitoring-alertmanager-main-rules-b254413c-d5fa-4518-947a-a80613bc1051.yaml

lrwxrwxrwx 1 root 2000 73 Jul 5 05:47 monitoring-grafana-rules-24dffb10-7e71-475f-9a7d-14fa94d5b672.yaml -> ..data/monitoring-grafana-rules-24dffb10-7e71-475f-9a7d-14fa94d5b672.yaml

lrwxrwxrwx 1 root 2000 81 Jul 5 05:47 monitoring-kube-prometheus-rules-677a8ef2-4079-4957-9174-8b1162724d1f.yaml -> ..data/monitoring-kube-prometheus-rules-677a8ef2-4079-4957-9174-8b1162724d1f.yaml

lrwxrwxrwx 1 root 2000 84 Jul 5 05:47 monitoring-kube-state-metrics-rules-38790470-6a90-4fd6-b729-9ea39f070b85.yaml -> ..data/monitoring-kube-state-metrics-rules-38790470-6a90-4fd6-b729-9ea39f070b85.yaml

lrwxrwxrwx 1 root 2000 87 Jul 5 05:47 monitoring-kubernetes-monitoring-rules-beb41dea-c0bb-4930-b8da-18456f830298.yaml -> ..data/monitoring-kubernetes-monitoring-rules-beb41dea-c0bb-4930-b8da-18456f830298.yaml

lrwxrwxrwx 1 root 2000 79 Jul 5 05:47 monitoring-node-exporter-rules-4f9cb55a-aa24-42c6-bec5-a879d54842b1.yaml -> ..data/monitoring-node-exporter-rules-4f9cb55a-aa24-42c6-bec5-a879d54842b1.yaml

lrwxrwxrwx 1 root 2000 91 Jul 5 05:47 monitoring-prometheus-k8s-prometheus-rules-372349a2-a0cb-472e-b81a-41ad170a1f30.yaml -> ..data/monitoring-prometheus-k8s-prometheus-rules-372349a2-a0cb-472e-b81a-41ad170a1f30.yaml

lrwxrwxrwx 1 root 2000 85 Jul 5 05:47 monitoring-prometheus-operator-rules-60f40ae6-78f0-4b8f-b7da-cb4013d581f1.yaml -> ..data/monitoring-prometheus-operator-rules-60f40ae6-78f0-4b8f-b7da-cb4013d581f1.yaml而这个yaml文件,就是部署prometheus-operator的时候,提供的prometheus-rules.yaml文件内容:

# pwd

/etc/kubernetes/prometheus

# ls prometheus-rules.yaml

prometheus-rules.yaml2. 创建prometheurule资源对象

我们创建1个prometheusrule资源对象后,prometheus-k8s-0这个pod下的prometheus-k8s-rulefile-0目录下,会生成一个{{namespace}}-{{rule_name}}.yaml文件。

# cat prometheus-etcdRules.yaml

apiVersion: monitoring.coreos.com/v1

kind: PrometheusRule

metadata:

labels:

prometheus: k8s

role: alert-rules

name: etcd-rules

namespace: monitoring

spec:

groups:

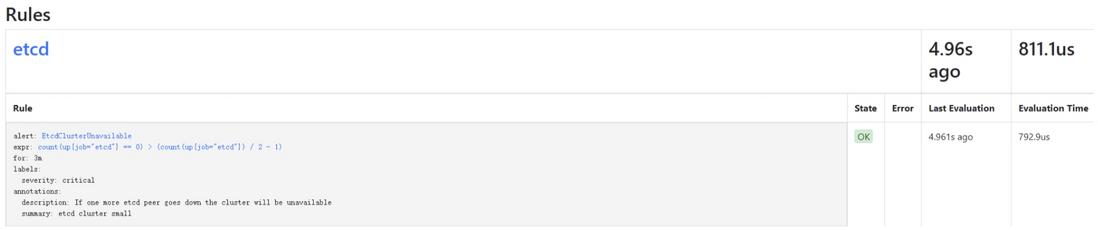

- name: etcd

rules:

- alert: EtcdClusterUnavailable

annotations:

summary: etcd cluster small

description: If one more etcd peer goes down the cluster will be unavailable

expr: |

count(up{job="etcd"} == 0) > (count(up{job="etcd"}) / 2 - 1)

for: 3m

labels:

severity: critical# cat prometheus-etcdRules.yaml

apiVersion: monitoring.coreos.com/v1

kind: PrometheusRule

metadata:

labels:

prometheus: k8s

role: alert-rules

name: etcd-rules

namespace: monitoring

spec:

groups:

- name: etcd

rules:

- alert: EtcdClusterUnavailable

annotations:

summary: etcd cluster small

description: If one more etcd peer goes down the cluster will be unavailable

expr: |

count(up{job="etcd"} == 0) > (count(up{job="etcd"}) / 2 - 1)

for: 3m

labels:

severity: criticalkubectl -n monitoring get prometheusrules

# cat prometheus-testRules.yaml

apiVersion: monitoring.coreos.com/v1

kind: PrometheusRule

metadata:

labels:

prometheus: k8s

role: alert-rules

name: test-rules

namespace: monitoring

spec:

groups:

- name: test

rules:

- alert: test-alert

annotations:

summary: test-summary

description: test-description

expr: |

vector(1)

for: 5s

labels:

severity: criticalkubectl -n monitoring apply -f prometheus-testRules.yamlyaml文件中需要标识label:

- prometheus=k8s;

- role=alert-rules;

因为prometheus实例的ruleSelector有如下的筛选规则:

ruleSelector:

matchLabels:

prometheus: k8s

role: alert-rulesyaml中定义了告警规则:etcd可用实例小于一半告警

count(up{job="etcd"} == 0) > (count(up{job="etcd"}) / 2 -1)3. prometheus dashboard确认规则已生效

进入prometheus pod看规则文件是否生成:

# kubectl exec -it prometheus-k8s-0 /bin/sh -n monitoring

/prometheus $ ls -alh /etc/prometheus/rules/prometheus-k8s-rulefiles-0/

total 0

lrwxrwxrwx 1 root 2000 33 Feb 2 07:40 monitoring-etcd-rules.yaml -> ..data/monitoring-etcd-rules.yaml

lrwxrwxrwx 1 root root 43 Feb 2 06:03 monitoring-prometheus-k8s-rules.yaml -> ..data/monitoring-prometheus-k8s-rules.yaml访问prometheus dashboard确认规则已生效:

参考: 1.Prometheus-Operator自定义报警:https://www.qikqiak.com/post/...

3

https://blog.51cto.com/u_12462495/2481281

实用干货丨如何使用Prometheus配置自定义告警规则

前 言

Prometheus是一个用于监控和告警的开源系统。一开始由Soundcloud开发,后来在2016年,它迁移到CNCF并且称为Kubernetes之后最流行的项目之一。从整个Linux服务器到stand-alone web服务器、数据库服务或一个单独的进程,它都能监控。在Prometheus术语中,它所监控的事物称为目标(Target)。每个目标单元被称为指标(metric)。它以设置好的时间间隔通过http抓取目标,以收集指标并将数据放置在其时序数据库(Time Series Database)中。你可以使用PromQL查询语言查询相关target的指标。

本文中,我们将一步一步展示如何:

- 安装Prometheus(使用prometheus-operator Helm chart)以基于自定义事件进行监控/告警

- 创建和配置自定义告警规则,它将会在满足条件时发出告警

- 集成Alertmanager以处理由客户端应用程序(在本例中为Prometheus server)发送的告警

- 将Alertmanager与发送告警通知的邮件账户集成。

理解Prometheus及其抽象概念

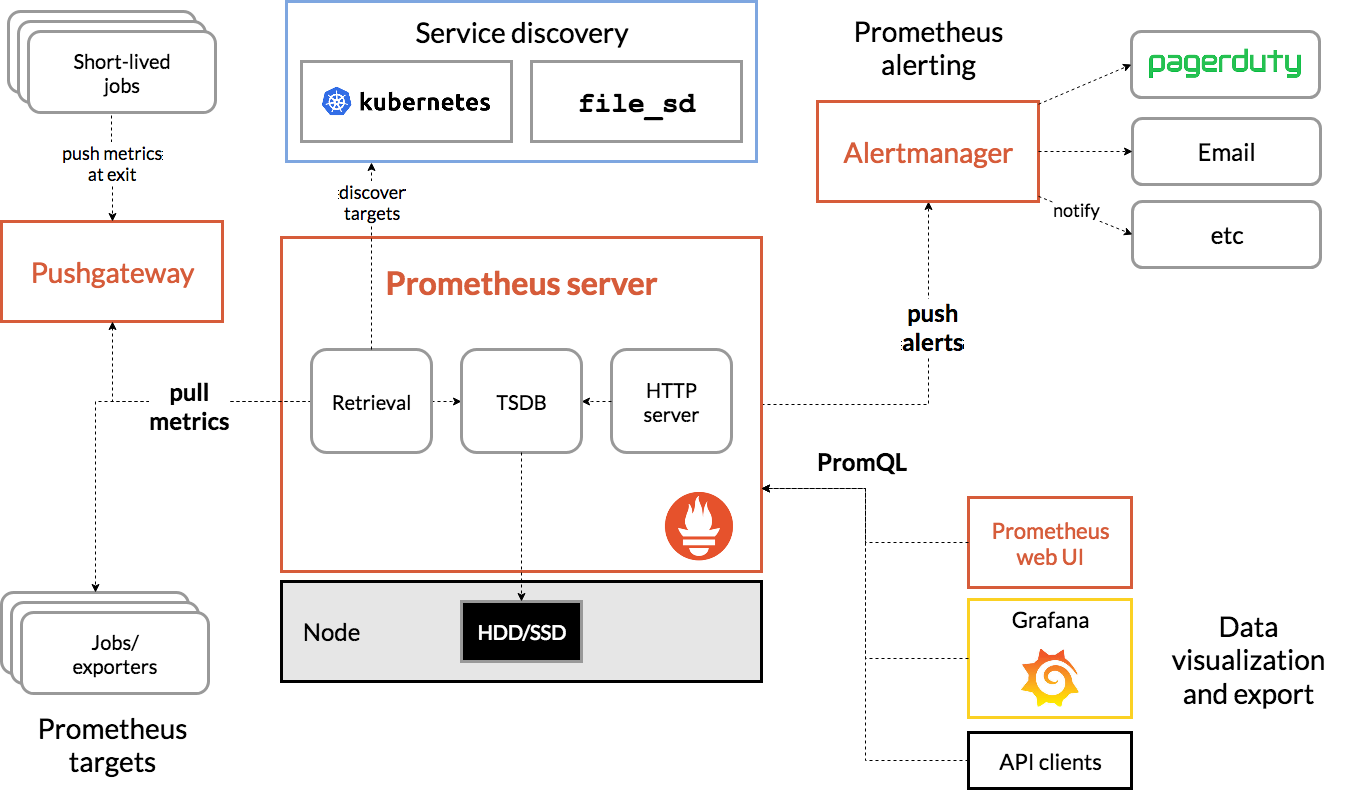

从下图我们将看到所有组成Prometheus生态的组件:

以下是与本文相关的术语,大家可以快速了解:

Prometheus Server:在时序数据库中抓取和存储指标的主要组件

抓取:一种拉取方法以获取指标。它通常以10-60秒的时间间隔抓取。

Target:检索数据的server客户端

服务发现:启用Prometheus,使其能够识别它需要监控的应用程序并在动态环境中拉取指标

Alert Manager:负责处理警报的组件(包括silencing、inhibition、聚合告警信息,并通过邮件、PagerDuty、Slack等方式发送告警通知)。

数据可视化:抓取的数据存储在本地存储中,并使用PromQL直接查询,或通过Grafana dashboard查看。

理解Prometheus Operator

根据Prometheus Operator的项目所有者CoreOS称,Prometheus Operator可以配置原生Kubernetes并且可以管理和操作Prometheus和Alertmanager集群。

该Operator引入了以下Kubernetes自定义资源定义(CRDs):Prometheus、ServiceMonitor、PrometheusRule和Alertmanager。如果你想了解更多内容可以访问链接:

https://github.com/coreos/prometheus-operator/blob/master/Documentation/design.md

在我们的演示中,我们将使用PrometheusRule来定义自定义规则。

首先,我们需要使用 stable/prometheus-operator Helm chart来安装Prometheus Operator,下载链接:

https://github.com/helm/charts/tree/master/stable/prometheus-operator

默认安装程序将会部署以下组件:prometheus-operator、prometheus、alertmanager、node-exporter、kube-state-metrics以及grafana。默认状态下,Prometheus将会抓取Kubernetes的主要组件:kube-apiserver、kube-controller-manager以及etcd。

安装Prometheus软件

前期准备

要顺利执行此次demo,你需要准备以下内容:

- 一个Google Cloud Platform账号(免费套餐即可)。其他任意云也可以

- Rancher v2.3.5(发布文章时的最新版本)

- 运行在GKE(版本1.15.9-gke.12.)上的Kubernetes集群(使用EKS或AKS也可以)

- 在计算机上安装好Helm binary

启动一个Rancher实例

直接按照这一直观的入门指南进行操作即可:

https://rancher.com/quick-start

使用Rancher部署一个GKE集群

使用Rancher来设置和配置你的Kubernetes集群:

https://rancher.com/docs/rancher/v2.x/en/cluster-provisioning/hosted-kubernetes-clusters/gke/

部署完成后,并且为kubeconfig文件配置了适当的credential和端点信息,就可以使用kubectl指向该特定集群。

部署Prometheus 软件

首先,检查一下我们所运行的Helm版本

$ helm version

version.BuildInfo{Version:"v3.1.2", GitCommit:"d878d4d45863e42fd5cff6743294a11d28a9abce", GitTreeState:"clean", GoVersion:"go1.13.8"}当我们使用Helm 3时,我们需要添加一个stable 镜像仓库,因为默认状态下不会设置该仓库。

$ helm repo add stable https://kubernetes-charts.storage.googleapis.com

"stable" has been added to your repositories$ helm repo update

Hang tight while we grab the latest from your chart repositories...

...Successfully got an update from the "stable" chart repository

Update Complete. ⎈ Happy Helming!⎈$ helm repo list

NAME URL

stable https://kubernetes-charts.storage.googleapis.comHelm配置完成后,我们可以开始安装prometheus-operator

$ kubectl create namespace monitoring

namespace/monitoring created$ helm install --namespace monitoring demo stable/prometheus-operator

manifest_sorter.go:192: info: skipping unknown hook: "crd-install"

manifest_sorter.go:192: info: skipping unknown hook: "crd-install"

manifest_sorter.go:192: info: skipping unknown hook: "crd-install"

manifest_sorter.go:192: info: skipping unknown hook: "crd-install"

manifest_sorter.go:192: info: skipping unknown hook: "crd-install"

manifest_sorter.go:192: info: skipping unknown hook: "crd-install"

NAME: demo

LAST DEPLOYED: Sat Mar 14 09:40:35 2020

NAMESPACE: monitoring

STATUS: deployed

REVISION: 1

NOTES:

The Prometheus Operator has been installed. Check its status by running:

kubectl --namespace monitoring get pods -l "release=demo"

Visit https://github.com/coreos/prometheus-operator for instructions on how

to create & configure Alertmanager and Prometheus instances using the Operator.规 则

除了监控之外,Prometheus还让我们创建触发告警的规则。这些规则基于Prometheus的表达式语言。只要满足条件,就会触发告警并将其发送到Alertmanager。之后,我们会看到规则的具体形式。

我们回到demo。Helm完成部署之后,我们可以检查已经创建了什么pod:

$ kubectl -n monitoring get pods

NAME READY STATUS RESTARTS AGE

alertmanager-demo-prometheus-operator-alertmanager-0 2/2 Running 0 61s

demo-grafana-5576fbf669-9l57b 3/3 Running 0 72s

demo-kube-state-metrics-67bf64b7f4-4786k 1/1 Running 0 72s

demo-prometheus-node-exporter-ll8zx 1/1 Running 0 72s

demo-prometheus-node-exporter-nqnr6 1/1 Running 0 72s

demo-prometheus-node-exporter-sdndf 1/1 Running 0 72s

demo-prometheus-operator-operator-b9c9b5457-db9dj 2/2 Running 0 72s

prometheus-demo-prometheus-operator-prometheus-0 3/3 Running 1 50s为了从web浏览器中访问Prometheus和Alertmanager,我们需要使用port转发。

由于本例中使用的是GCP实例,并且所有的kubectl命令都从该实例运行,因此我们使用实例的外部IP地址访问资源。

$ kubectl port-forward --address 0.0.0.0 -n monitoring prometheus-demo-prometheus-operator-prometheus-0 9090 >/dev/null 2>&1 &$ kubectl port-forward --address 0.0.0.0 -n monitoring alertmanager-demo-prometheus-operator-alertmanager-0 9093 >/dev/null 2>&1 &

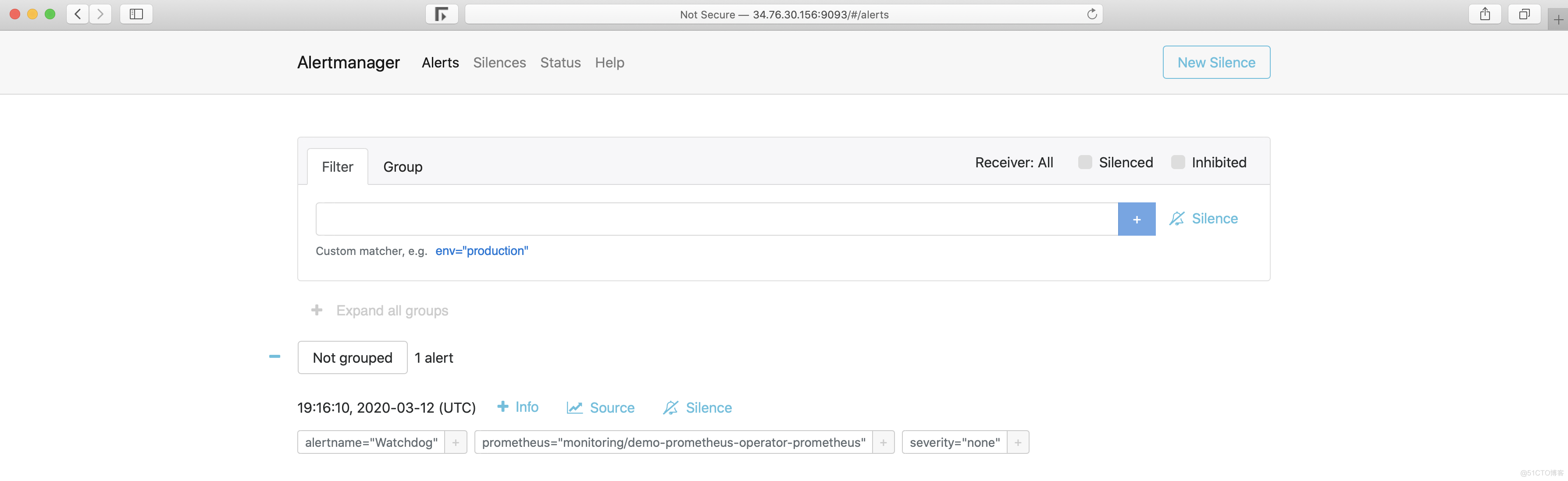

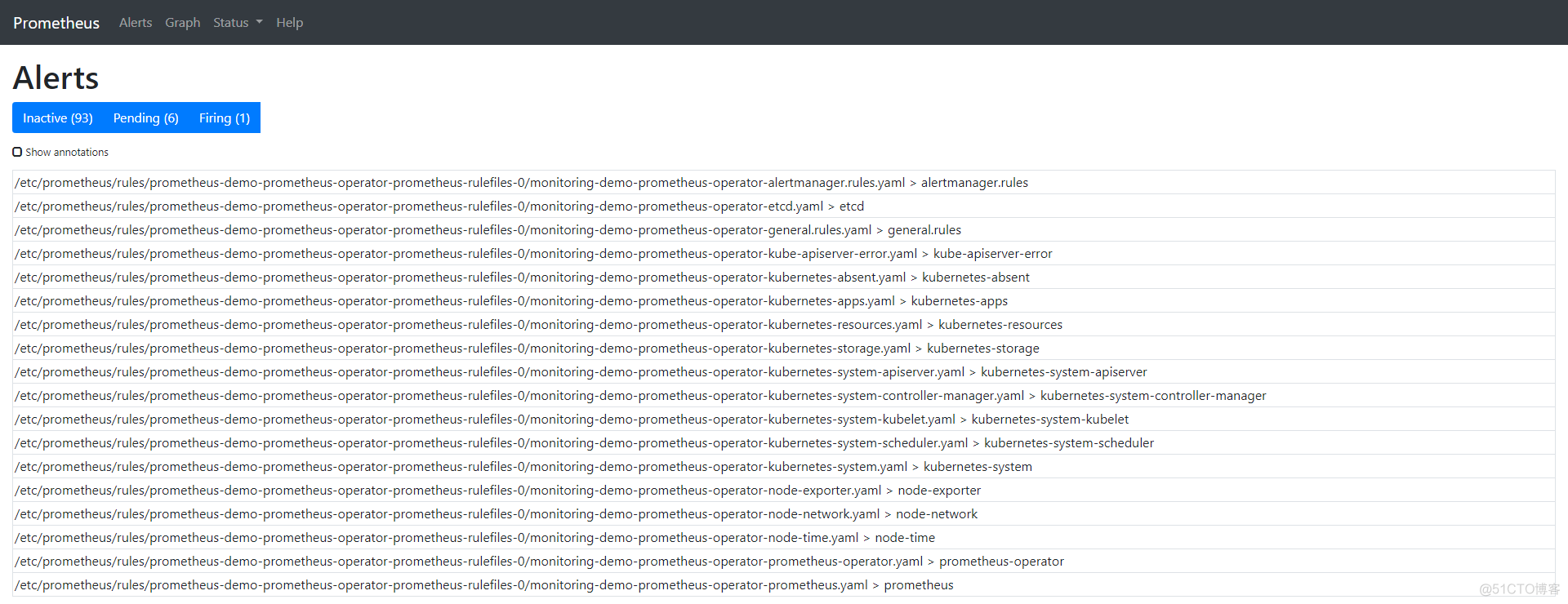

“Alert”选项卡向我们展示了所有当前正在运行/已配置的告警。也可以通过查询名称为prometheusrules的CRD从CLI进行检查:

$ kubectl -n monitoring get prometheusrules

NAME AGE

demo-prometheus-operator-alertmanager.rules 3m21s

demo-prometheus-operator-etcd 3m21s

demo-prometheus-operator-general.rules 3m21s

demo-prometheus-operator-k8s.rules 3m21s

demo-prometheus-operator-kube-apiserver-error 3m21s

demo-prometheus-operator-kube-apiserver.rules 3m21s

demo-prometheus-operator-kube-prometheus-node-recording.rules 3m21s

demo-prometheus-operator-kube-scheduler.rules 3m21s

demo-prometheus-operator-kubernetes-absent 3m21s

demo-prometheus-operator-kubernetes-apps 3m21s

demo-prometheus-operator-kubernetes-resources 3m21s

demo-prometheus-operator-kubernetes-storage 3m21s

demo-prometheus-operator-kubernetes-system 3m21s

demo-prometheus-operator-kubernetes-system-apiserver 3m21s

demo-prometheus-operator-kubernetes-system-controller-manager 3m21s

demo-prometheus-operator-kubernetes-system-kubelet 3m21s

demo-prometheus-operator-kubernetes-system-scheduler 3m21s

demo-prometheus-operator-node-exporter 3m21s

demo-prometheus-operator-node-exporter.rules 3m21s

demo-prometheus-operator-node-network 3m21s

demo-prometheus-operator-node-time 3m21s

demo-prometheus-operator-node.rules 3m21s

demo-prometheus-operator-prometheus 3m21s

demo-prometheus-operator-prometheus-operator 3m21s我们也可以检查位于prometheus容器中prometheus-operator Pod中的物理文件。

$ kubectl -n monitoring exec -it prometheus-demo-prometheus-operator-prometheus-0 -- /bin/sh

Defaulting container name to prometheus.

Use 'kubectl describe pod/prometheus-demo-prometheus-operator-prometheus-0 -n monitoring' to see all of the containers in this pod.在容器中,我们可以检查规则的存储路径:

/prometheus $ ls /etc/prometheus/rules/prometheus-demo-prometheus-operator-prometheus-rulefiles-0/

monitoring-demo-prometheus-operator-alertmanager.rules.yaml monitoring-demo-prometheus-operator-kubernetes-system-apiserver.yaml

monitoring-demo-prometheus-operator-etcd.yaml monitoring-demo-prometheus-operator-kubernetes-system-controller-manager.yaml

monitoring-demo-prometheus-operator-general.rules.yaml monitoring-demo-prometheus-operator-kubernetes-system-kubelet.yaml

monitoring-demo-prometheus-operator-k8s.rules.yaml monitoring-demo-prometheus-operator-kubernetes-system-scheduler.yaml

monitoring-demo-prometheus-operator-kube-apiserver-error.yaml monitoring-demo-prometheus-operator-kubernetes-system.yaml

monitoring-demo-prometheus-operator-kube-apiserver.rules.yaml monitoring-demo-prometheus-operator-node-exporter.rules.yaml

monitoring-demo-prometheus-operator-kube-prometheus-node-recording.rules.yaml monitoring-demo-prometheus-operator-node-exporter.yaml

monitoring-demo-prometheus-operator-kube-scheduler.rules.yaml monitoring-demo-prometheus-operator-node-network.yaml

monitoring-demo-prometheus-operator-kubernetes-absent.yaml monitoring-demo-prometheus-operator-node-time.yaml

monitoring-demo-prometheus-operator-kubernetes-apps.yaml monitoring-demo-prometheus-operator-node.rules.yaml

monitoring-demo-prometheus-operator-kubernetes-resources.yaml monitoring-demo-prometheus-operator-prometheus-operator.yaml

monitoring-demo-prometheus-operator-kubernetes-storage.yaml monitoring-demo-prometheus-operator-prometheus.yaml为了详细了解如何将这些规则加载到Prometheus中,请检查Pod的详细信息。我们可以看到用于prometheus容器的配置文件是etc/prometheus/config_out/prometheus.env.yaml。该配置文件向我们展示了文件的位置或重新检查yaml的频率设置。

$ kubectl -n monitoring describe pod prometheus-demo-prometheus-operator-prometheus-0完整命令输出如下:

Name: prometheus-demo-prometheus-operator-prometheus-0

Namespace: monitoring

Priority: 0

Node: gke-c-7dkls-default-0-c6ca178a-gmcq/10.132.0.15

Start Time: Wed, 11 Mar 2020 18:06:47 +0000

Labels: app=prometheus

controller-revision-hash=prometheus-demo-prometheus-operator-prometheus-5ccbbd8578

prometheus=demo-prometheus-operator-prometheus

statefulset.kubernetes.io/pod-name=prometheus-demo-prometheus-operator-prometheus-0

Annotations: <none>

Status: Running

IP: 10.40.0.7

IPs: <none>

Controlled By: StatefulSet/prometheus-demo-prometheus-operator-prometheus

Containers:

prometheus:

Container ID: docker://360db8a9f1cce8d72edd81fcdf8c03fe75992e6c2c59198b89807aa0ce03454c

Image: quay.io/prometheus/prometheus:v2.15.2

Image ID: docker-pullable://quay.io/prometheus/prometheus@sha256:914525123cf76a15a6aaeac069fcb445ce8fb125113d1bc5b15854bc1e8b6353

Port: 9090/TCP

Host Port: 0/TCP

Args:

--web.console.templates=/etc/prometheus/consoles

--web.console.libraries=/etc/prometheus/console_libraries

--config.file=/etc/prometheus/config_out/prometheus.env.yaml

--storage.tsdb.path=/prometheus

--storage.tsdb.retention.time=10d

--web.enable-lifecycle

--storage.tsdb.no-lockfile

--web.external-url=http://demo-prometheus-operator-prometheus.monitoring:9090

--web.route-prefix=/

State: Running

Started: Wed, 11 Mar 2020 18:07:07 +0000

Last State: Terminated

Reason: Error

Message: caller=main.go:648 msg="Starting TSDB ..."

level=info ts=2020-03-11T18:07:02.185Z caller=web.go:506 component=web msg="Start listening for connections" address=0.0.0.0:9090

level=info ts=2020-03-11T18:07:02.192Z caller=head.go:584 component=tsdb msg="replaying WAL, this may take awhile"

level=info ts=2020-03-11T18:07:02.192Z caller=head.go:632 component=tsdb msg="WAL segment loaded" segment=0 maxSegment=0

level=info ts=2020-03-11T18:07:02.194Z caller=main.go:663 fs_type=EXT4_SUPER_MAGIC

level=info ts=2020-03-11T18:07:02.194Z caller=main.go:664 msg="TSDB started"

level=info ts=2020-03-11T18:07:02.194Z caller=main.go:734 msg="Loading configuration file" filename=/etc/prometheus/config_out/prometheus.env.yaml

level=info ts=2020-03-11T18:07:02.194Z caller=main.go:517 msg="Stopping scrape discovery manager..."

level=info ts=2020-03-11T18:07:02.194Z caller=main.go:531 msg="Stopping notify discovery manager..."

level=info ts=2020-03-11T18:07:02.194Z caller=main.go:553 msg="Stopping scrape manager..."

level=info ts=2020-03-11T18:07:02.194Z caller=manager.go:814 component="rule manager" msg="Stopping rule manager..."

level=info ts=2020-03-11T18:07:02.194Z caller=manager.go:820 component="rule manager" msg="Rule manager stopped"

level=info ts=2020-03-11T18:07:02.194Z caller=main.go:513 msg="Scrape discovery manager stopped"

level=info ts=2020-03-11T18:07:02.194Z caller=main.go:527 msg="Notify discovery manager stopped"

level=info ts=2020-03-11T18:07:02.194Z caller=main.go:547 msg="Scrape manager stopped"

level=info ts=2020-03-11T18:07:02.197Z caller=notifier.go:598 component=notifier msg="Stopping notification manager..."

level=info ts=2020-03-11T18:07:02.197Z caller=main.go:718 msg="Notifier manager stopped"

level=error ts=2020-03-11T18:07:02.197Z caller=main.go:727 err="error loading config from \"/etc/prometheus/config_out/prometheus.env.yaml\": couldn't load configuration (--config.file=\"/etc/prometheus/config_out/prometheus.env.yaml\"): open /etc/prometheus/config_out/prometheus.env.yaml: no such file or directory"

Exit Code: 1

Started: Wed, 11 Mar 2020 18:07:02 +0000

Finished: Wed, 11 Mar 2020 18:07:02 +0000

Ready: True

Restart Count: 1

Liveness: http-get http://:web/-/healthy delay=0s timeout=3s period=5s #success=1 #failure=6

Readiness: http-get http://:web/-/ready delay=0s timeout=3s period=5s #success=1 #failure=120

Environment: <none>

Mounts:

/etc/prometheus/certs from tls-assets (ro)

/etc/prometheus/config_out from config-out (ro)

/etc/prometheus/rules/prometheus-demo-prometheus-operator-prometheus-rulefiles-0 from prometheus-demo-prometheus-operator-prometheus-rulefiles-0 (rw)

/prometheus from prometheus-demo-prometheus-operator-prometheus-db (rw)

/var/run/secrets/kubernetes.io/serviceaccount from demo-prometheus-operator-prometheus-token-jvbrr (ro)

prometheus-config-reloader:

Container ID: docker://de27cdad7067ebd5154c61b918401b2544299c161850daf3e317311d2d17af3d

Image: quay.io/coreos/prometheus-config-reloader:v0.37.0

Image ID: docker-pullable://quay.io/coreos/prometheus-config-reloader@sha256:5e870e7a99d55a5ccf086063efd3263445a63732bc4c04b05cf8b664f4d0246e

Port: <none>

Host Port: <none>

Command:

/bin/prometheus-config-reloader

Args:

--log-format=logfmt

--reload-url=http://127.0.0.1:9090/-/reload

--config-file=/etc/prometheus/config/prometheus.yaml.gz

--config-envsubst-file=/etc/prometheus/config_out/prometheus.env.yaml

State: Running

Started: Wed, 11 Mar 2020 18:07:04 +0000

Ready: True

Restart Count: 0

Limits:

cpu: 100m

memory: 25Mi

Requests:

cpu: 100m

memory: 25Mi

Environment:

POD_NAME: prometheus-demo-prometheus-operator-prometheus-0 (v1:metadata.name)

Mounts:

/etc/prometheus/config from config (rw)

/etc/prometheus/config_out from config-out (rw)

/var/run/secrets/kubernetes.io/serviceaccount from demo-prometheus-operator-prometheus-token-jvbrr (ro)

rules-configmap-reloader:

Container ID: docker://5804e45380ed1b5374a4c2c9ee4c9c4e365bee93b9ccd8b5a21f50886ea81a91

Image: quay.io/coreos/configmap-reload:v0.0.1

Image ID: docker-pullable://quay.io/coreos/configmap-reload@sha256:e2fd60ff0ae4500a75b80ebaa30e0e7deba9ad107833e8ca53f0047c42c5a057

Port: <none>

Host Port: <none>

Args:

--webhook-url=http://127.0.0.1:9090/-/reload

--volume-dir=/etc/prometheus/rules/prometheus-demo-prometheus-operator-prometheus-rulefiles-0

State: Running

Started: Wed, 11 Mar 2020 18:07:06 +0000

Ready: True

Restart Count: 0

Limits:

cpu: 100m

memory: 25Mi

Requests:

cpu: 100m

memory: 25Mi

Environment: <none>

Mounts:

/etc/prometheus/rules/prometheus-demo-prometheus-operator-prometheus-rulefiles-0 from prometheus-demo-prometheus-operator-prometheus-rulefiles-0 (rw)

/var/run/secrets/kubernetes.io/serviceaccount from demo-prometheus-operator-prometheus-token-jvbrr (ro)

Conditions:

Type Status

Initialized True

Ready True

ContainersReady True

PodScheduled True

Volumes:

config:

Type: Secret (a volume populated by a Secret)

SecretName: prometheus-demo-prometheus-operator-prometheus

Optional: false

tls-assets:

Type: Secret (a volume populated by a Secret)

SecretName: prometheus-demo-prometheus-operator-prometheus-tls-assets

Optional: false

config-out:

Type: EmptyDir (a temporary directory that shares a pod's lifetime)

Medium:

SizeLimit: <unset>

prometheus-demo-prometheus-operator-prometheus-rulefiles-0:

Type: ConfigMap (a volume populated by a ConfigMap)

Name: prometheus-demo-prometheus-operator-prometheus-rulefiles-0

Optional: false

prometheus-demo-prometheus-operator-prometheus-db:

Type: EmptyDir (a temporary directory that shares a pod's lifetime)

Medium:

SizeLimit: <unset>

demo-prometheus-operator-prometheus-token-jvbrr:

Type: Secret (a volume populated by a Secret)

SecretName: demo-prometheus-operator-prometheus-token-jvbrr

Optional: false

QoS Class: Burstable

Node-Selectors: <none>

Tolerations: node.kubernetes.io/not-ready:NoExecute for 300s

node.kubernetes.io/unreachable:NoExecute for 300s

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal Scheduled 4m51s default-scheduler Successfully assigned monitoring/prometheus-demo-prometheus-operator-prometheus-0 to gke-c-7dkls-default-0-c6ca178a-gmcq

Normal Pulling 4m45s kubelet, gke-c-7dkls-default-0-c6ca178a-gmcq Pulling image "quay.io/prometheus/prometheus:v2.15.2"

Normal Pulled 4m39s kubelet, gke-c-7dkls-default-0-c6ca178a-gmcq Successfully pulled image "quay.io/prometheus/prometheus:v2.15.2"

Normal Pulling 4m36s kubelet, gke-c-7dkls-default-0-c6ca178a-gmcq Pulling image "quay.io/coreos/prometheus-config-reloader:v0.37.0"

Normal Pulled 4m35s kubelet, gke-c-7dkls-default-0-c6ca178a-gmcq Successfully pulled image "quay.io/coreos/prometheus-config-reloader:v0.37.0"

Normal Pulling 4m34s kubelet, gke-c-7dkls-default-0-c6ca178a-gmcq Pulling image "quay.io/coreos/configmap-reload:v0.0.1"

Normal Started 4m34s kubelet, gke-c-7dkls-default-0-c6ca178a-gmcq Started container prometheus-config-reloader

Normal Created 4m34s kubelet, gke-c-7dkls-default-0-c6ca178a-gmcq Created container prometheus-config-reloader

Normal Pulled 4m33s kubelet, gke-c-7dkls-default-0-c6ca178a-gmcq Successfully pulled image "quay.io/coreos/configmap-reload:v0.0.1"

Normal Created 4m32s (x2 over 4m36s) kubelet, gke-c-7dkls-default-0-c6ca178a-gmcq Created container prometheus

Normal Created 4m32s kubelet, gke-c-7dkls-default-0-c6ca178a-gmcq Created container rules-configmap-reloader

Normal Started 4m32s kubelet, gke-c-7dkls-default-0-c6ca178a-gmcq Started container rules-configmap-reloader

Normal Pulled 4m32s kubelet, gke-c-7dkls-default-0-c6ca178a-gmcq Container image "quay.io/prometheus/prometheus:v2.15.2" already present on machine

Normal Started 4m31s (x2 over 4m36s) kubelet, gke-c-7dkls-default-0-c6ca178a-gmcq Started container prometheus让我们清理默认规则,使得我们可以更好地观察我们将要创建的那个规则。以下命令将删除所有规则,但会留下monitoring-demo-prometheus-operator-alertmanager.rules。

$ kubectl -n monitoring delete prometheusrules $(kubectl -n monitoring get prometheusrules | grep -v alert)$ kubectl -n monitoring get prometheusrules

NAME AGE

demo-prometheus-operator-alertmanager.rules 8m53s请注意:我们只保留一条规则是为了让demo更容易。但是有一条规则,你绝对不能删除,它位于monitoring-demo-prometheus-operator-general.rules.yaml中,被称为看门狗。该告警总是处于触发状态,其目的是确保整个告警流水线正常运转。

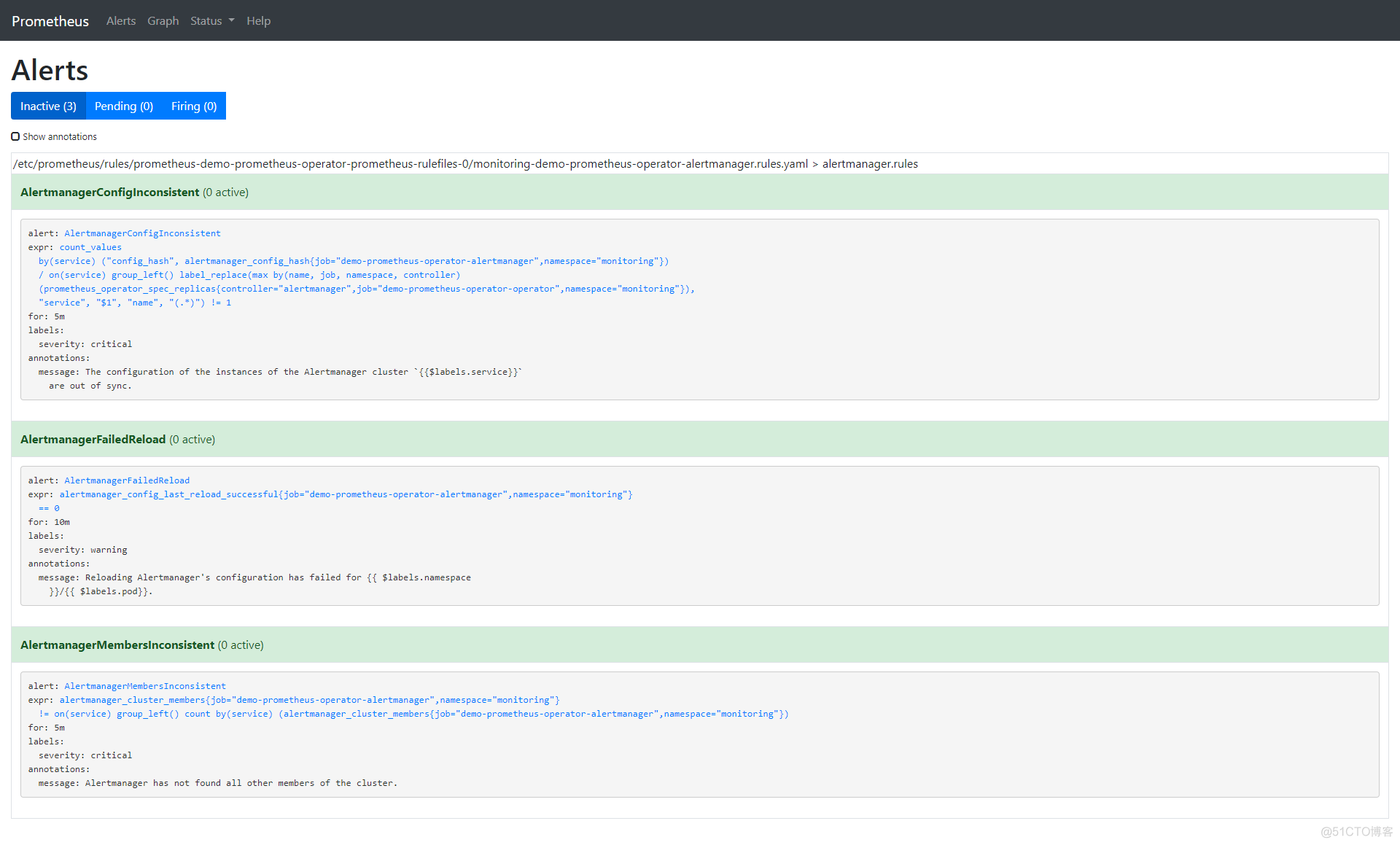

让我们从CLI中检查我们留下的规则并将其与我们将在浏览器中看到的进行比较。

$ kubectl -n monitoring describe prometheusrule demo-prometheus-operator-alertmanager.rules

Name: demo-prometheus-operator-alertmanager.rules

Namespace: monitoring

Labels: app=prometheus-operator

chart=prometheus-operator-8.12.1

heritage=Tiller

release=demo

Annotations: prometheus-operator-validated: true

API Version: monitoring.coreos.com/v1

Kind: PrometheusRule

Metadata:

Creation Timestamp: 2020-03-11T18:06:25Z

Generation: 1

Resource Version: 4871

Self Link: /apis/monitoring.coreos.com/v1/namespaces/monitoring/prometheusrules/demo-prometheus-operator-alertmanager.rules

UID: 6a84dbb0-feba-4f17-b3dc-4b6486818bc0

Spec:

Groups:

Name: alertmanager.rules

Rules:

Alert: AlertmanagerConfigInconsistent

Annotations:

Message: The configuration of the instances of the Alertmanager cluster `{{$labels.service}}` are out of sync.

Expr: count_values("config_hash", alertmanager_config_hash{job="demo-prometheus-operator-alertmanager",namespace="monitoring"}) BY (service) / ON(service) GROUP_LEFT() label_replace(max(prometheus_operator_spec_replicas{job="demo-prometheus-operator-operator",namespace="monitoring",controller="alertmanager"}) by (name, job, namespace, controller), "service", "$1", "name", "(.*)") != 1

For: 5m

Labels:

Severity: critical

Alert: AlertmanagerFailedReload

Annotations:

Message: Reloading Alertmanager's configuration has failed for {{ $labels.namespace }}/{{ $labels.pod}}.

Expr: alertmanager_config_last_reload_successful{job="demo-prometheus-operator-alertmanager",namespace="monitoring"} == 0

For: 10m

Labels:

Severity: warning

Alert: AlertmanagerMembersInconsistent

Annotations:

Message: Alertmanager has not found all other members of the cluster.

Expr: alertmanager_cluster_members{job="demo-prometheus-operator-alertmanager",namespace="monitoring"}

!= on (service) GROUP_LEFT()

count by (service) (alertmanager_cluster_members{job="demo-prometheus-operator-alertmanager",namespace="monitoring"})

For: 5m

Labels:

Severity: critical

Events: <none>

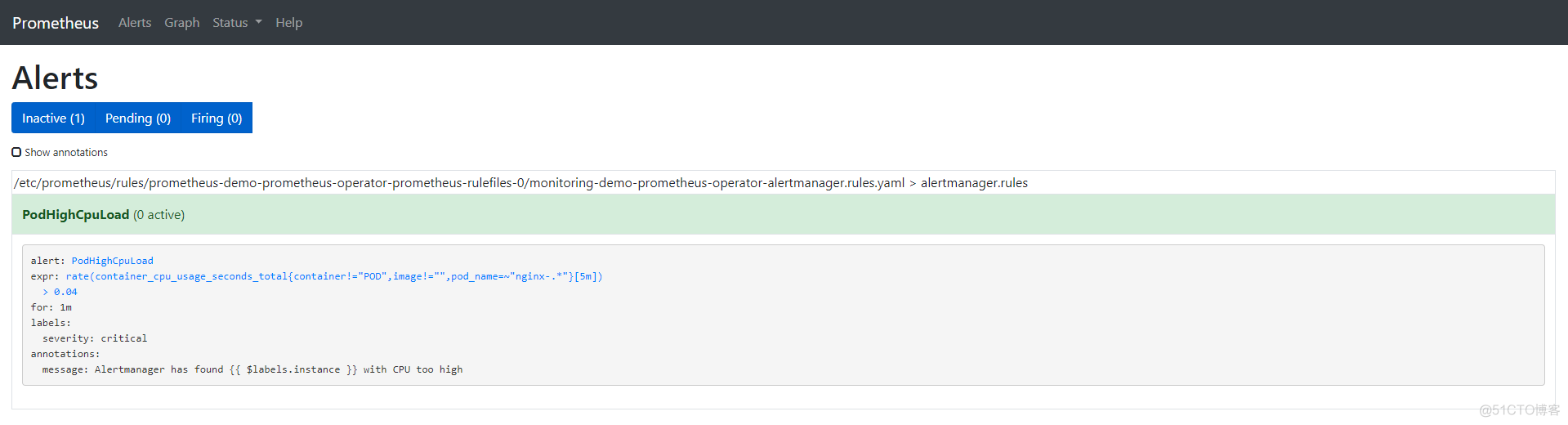

让我们移除所有默认告警并创建一个我们自己的告警:

$ kubectl -n monitoring edit prometheusrules demo-prometheus-operator-alertmanager.rules

prometheusrule.monitoring.coreos.com/demo-prometheus-operator-alertmanager.rules edited我们的自定义告警如下所示:

$ kubectl -n monitoring describe prometheusrule demo-prometheus-operator-alertmanager.rules

Name: demo-prometheus-operator-alertmanager.rules

Namespace: monitoring

Labels: app=prometheus-operator

chart=prometheus-operator-8.12.1

heritage=Tiller

release=demo

Annotations: prometheus-operator-validated: true

API Version: monitoring.coreos.com/v1

Kind: PrometheusRule

Metadata:

Creation Timestamp: 2020-03-11T18:06:25Z

Generation: 3

Resource Version: 18180

Self Link: /apis/monitoring.coreos.com/v1/namespaces/monitoring/prometheusrules/demo-prometheus-operator-alertmanager.rules

UID: 6a84dbb0-feba-4f17-b3dc-4b6486818bc0

Spec:

Groups:

Name: alertmanager.rules

Rules:

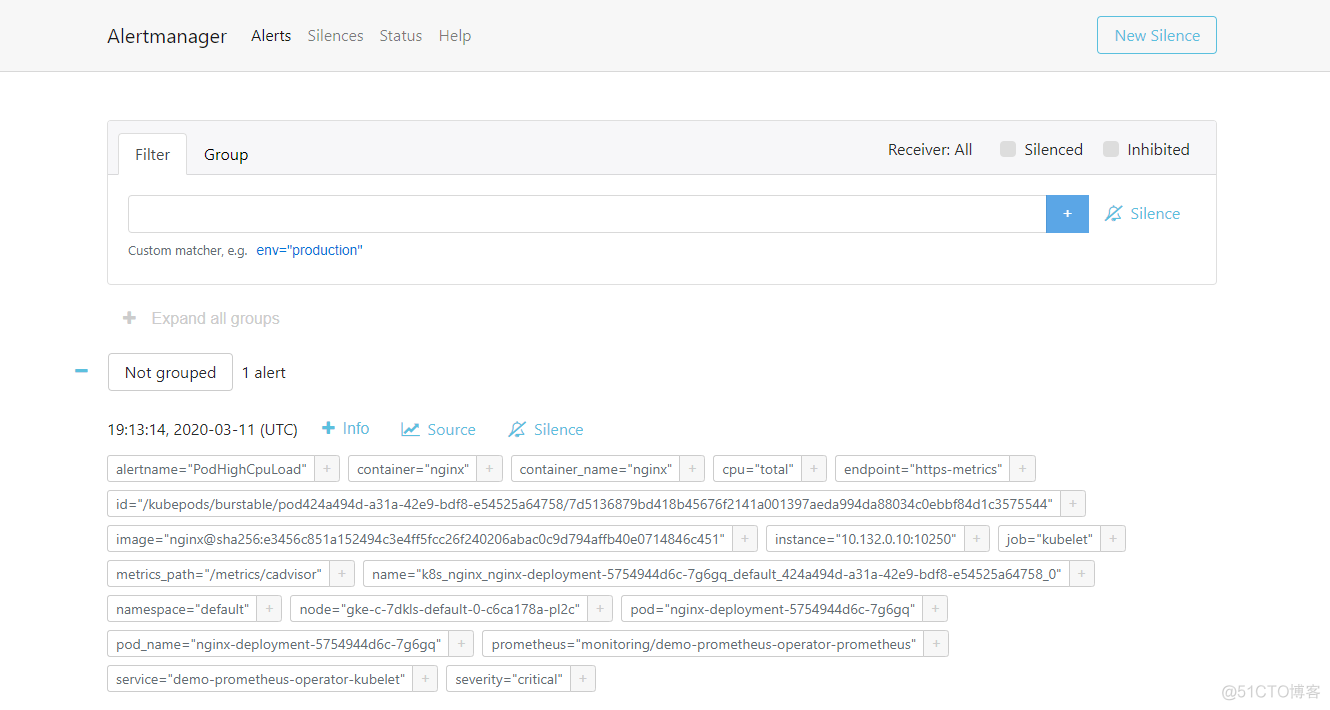

Alert: PodHighCpuLoad

Annotations:

Message: Alertmanager has found {{ $labels.instance }} with CPU too high

Expr: rate (container_cpu_usage_seconds_total{pod_name=~"nginx-.*", image!="", container!="POD"}[5m]) > 0.04

For: 1m

Labels:

Severity: critical

Events: <none>

以下是我们创建的告警的选项:

- annotation:描述告警的信息标签集。

- expr:由PromQL写的表达式

- for:可选参数,设置了之后会告诉Prometheus在定义的时间段内告警是否处于active状态。仅在此定义时间后才会触发告警。

- label:可以附加到告警的额外标签。如果你想了解更多关于告警的信息,可以访问: https://prometheus.io/docs/prometheus/latest/configuration/alerting_rules/

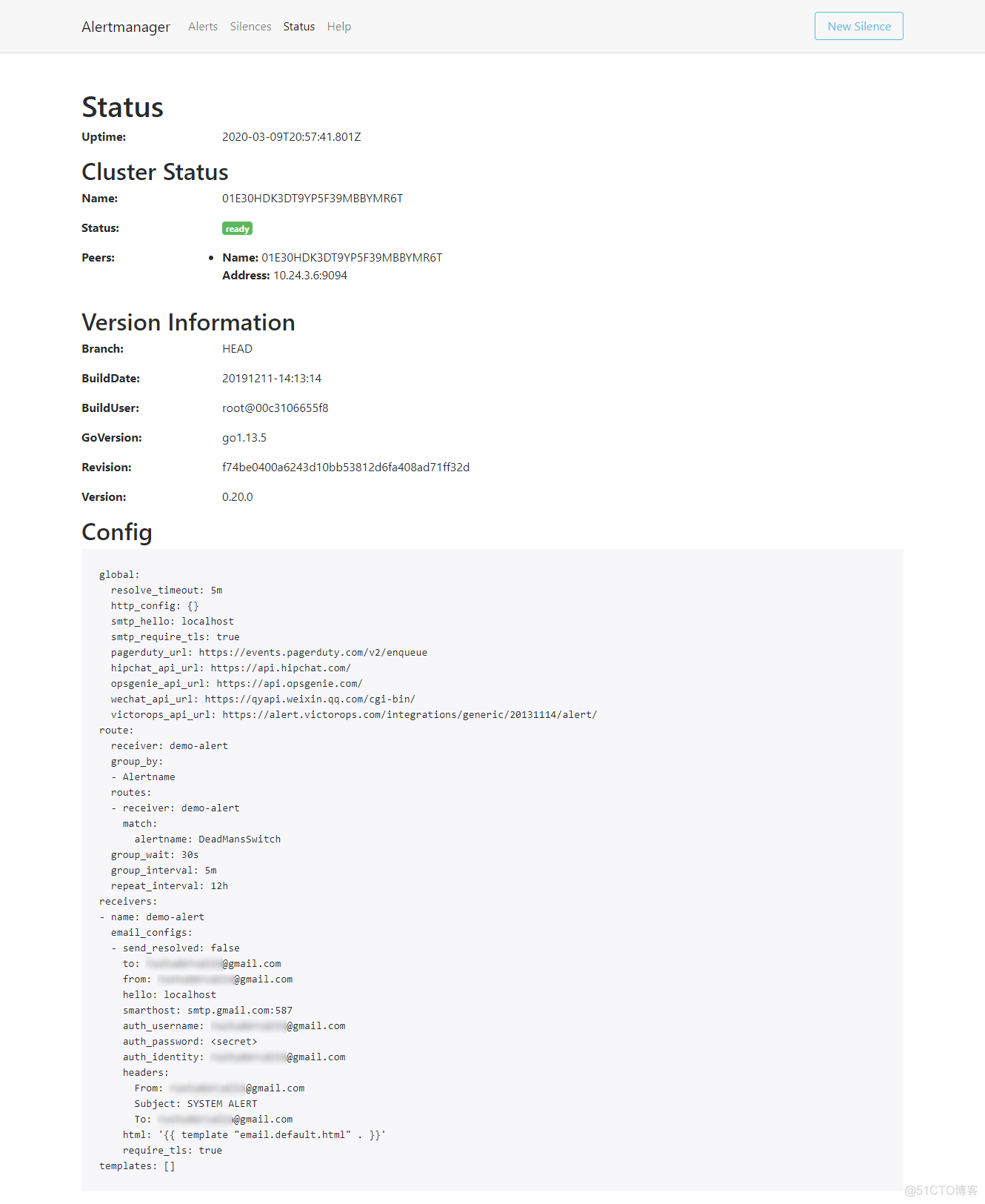

现在我们已经完成了Prometheus告警的设置,让我们配置Alertmanager,使得我们能够通过电子邮件获得告警通知。Alertmanager的配置位于Kubernetes secret对象中。

$ kubectl get secrets -n monitoring

NAME TYPE DATA AGE

alertmanager-demo-prometheus-operator-alertmanager Opaque 1 32m

default-token-x4rgq kubernetes.io/service-account-token 3 37m

demo-grafana Opaque 3 32m

demo-grafana-test-token-p6qnk kubernetes.io/service-account-token 3 32m

demo-grafana-token-ff6nl kubernetes.io/service-account-token 3 32m

demo-kube-state-metrics-token-vmvbr kubernetes.io/service-account-token 3 32m

demo-prometheus-node-exporter-token-wlnk9 kubernetes.io/service-account-token 3 32m

demo-prometheus-operator-admission Opaque 3 32m

demo-prometheus-operator-alertmanager-token-rrx4k kubernetes.io/service-account-token 3 32m

demo-prometheus-operator-operator-token-q9744 kubernetes.io/service-account-token 3 32m

demo-prometheus-operator-prometheus-token-jvbrr kubernetes.io/service-account-token 3 32m

prometheus-demo-prometheus-operator-prometheus Opaque 1 31m

prometheus-demo-prometheus-operator-prometheus-tls-assets Opaque 0 31m我们只对alertmanager-demo-prometheus-operator-alertmanager感兴趣。让我们看一下:

kubectl -n monitoring get secret alertmanager-demo-prometheus-operator-alertmanager -o yaml

apiVersion: v1

data:

alertmanager.yaml: Z2xvYmFsOgogIHJlc29sdmVfdGltZW91dDogNW0KcmVjZWl2ZXJzOgotIG5hbWU6ICJudWxsIgpyb3V0ZToKICBncm91cF9ieToKICAtIGpvYgogIGdyb3VwX2ludGVydmFsOiA1bQogIGdyb3VwX3dhaXQ6IDMwcwogIHJlY2VpdmVyOiAibnVsbCIKICByZXBlYXRfaW50ZXJ2YWw6IDEyaAogIHJvdXRlczoKICAtIG1hdGNoOgogICAgICBhbGVydG5hbWU6IFdhdGNoZG9nCiAgICByZWNlaXZlcjogIm51bGwiCg==

kind: Secret

metadata:

creationTimestamp: "2020-03-11T18:06:24Z"

labels:

app: prometheus-operator-alertmanager

chart: prometheus-operator-8.12.1

heritage: Tiller

release: demo

name: alertmanager-demo-prometheus-operator-alertmanager

namespace: monitoring

resourceVersion: "3018"

selfLink: /api/v1/namespaces/monitoring/secrets/alertmanager-demo-prometheus-operator-alertmanager

uid: 6baf6883-f690-47a1-bb49-491935956c22

type: Opaquealertmanager.yaml字段是由base64编码的,让我们看看:

$ echo 'Z2xvYmFsOgogIHJlc29sdmVfdGltZW91dDogNW0KcmVjZWl2ZXJzOgotIG5hbWU6ICJudWxsIgpyb3V0ZToKICBncm91cF9ieToKICAtIGpvYgogIGdyb3VwX2ludGVydmFsOiA1bQogIGdyb3VwX3dhaXQ6IDMwcwogIHJlY2VpdmVyOiAibnVsbCIKICByZXBlYXRfaW50ZXJ2YWw6IDEyaAogIHJvdXRlczoKICAtIG1hdGNoOgogICAgICBhbGVydG5hbWU6IFdhdGNoZG9nCiAgICByZWNlaXZlcjogIm51bGwiCg==' | base64 --decode

global:

resolve_timeout: 5m

receivers:

- name: "null"

route:

group_by:

- job

group_interval: 5m

group_wait: 30s

receiver: "null"

repeat_interval: 12h

routes:

- match:

alertname: Watchdog

receiver: "null"正如我们所看到的,这是默认的Alertmanager配置。你也可以在Alertmanager UI的Status选项卡中查看此配置。接下来,我们来对它进行一些更改——在本例中为发送邮件:

$ cat alertmanager.yaml

global:

resolve_timeout: 5m

route:

group_by: [Alertname]

# Send all notifications to me.

receiver: demo-alert

group_wait: 30s

group_interval: 5m

repeat_interval: 12h

routes:

- match:

alertname: DemoAlertName

receiver: 'demo-alert'

receivers:

- name: demo-alert

email_configs:

- to: your_email@gmail.com

from: from_email@gmail.com

# Your smtp server address

smarthost: smtp.gmail.com:587

auth_username: from_email@gmail.com

auth_identity: from_email@gmail.com

auth_password: 16letter_generated token # you can use gmail account password, but better create a dedicated token for this

headers:

From: from_email@gmail.com

Subject: 'Demo ALERT'首先,我们需要对此进行编码:

$ cat alertmanager.yaml | base64 -w0我们获得编码输出后,我们需要在我们将要应用的yaml文件中填写它:

cat alertmanager-secret-k8s.yaml

apiVersion: v1

data:

alertmanager.yaml: <paste here de encoded content of alertmanager.yaml>

kind: Secret

metadata:

name: alertmanager-demo-prometheus-operator-alertmanager

namespace: monitoring

type: Opaque$ kubectl apply -f alertmanager-secret-k8s.yaml

Warning: kubectl apply should be used on resource created by either kubectl create --save-config or kubectl apply

secret/alertmanager-demo-prometheus-operator-alertmanager configured该配置将会自动重新加载并在UI中显示更改。

接下来,我们部署一些东西来对其进行监控。对于本例而言,一个简单的nginx deployment已经足够:

$ cat nginx-deployment.yaml

apiVersion: apps/v1 # for versions before 1.9.0 use apps/v1beta2

kind: Deployment

metadata:

name: nginx-deployment

spec:

selector:

matchLabels:

app: nginx

replicas: 3 # tells deployment to run 2 pods matching the template

template:

metadata:

labels:

app: nginx

spec:

containers:

- name: nginx

image: nginx:1.7.9

ports:

- containerPort: 80$ kubectl apply -f nginx-deployment.yaml

deployment.apps/nginx-deployment created根据配置yaml,我们有3个副本:

$ kubectl get pods

NAME READY STATUS RESTARTS AGE

nginx-deployment-5754944d6c-7g6gq 1/1 Running 0 67s

nginx-deployment-5754944d6c-lhvx8 1/1 Running 0 67s

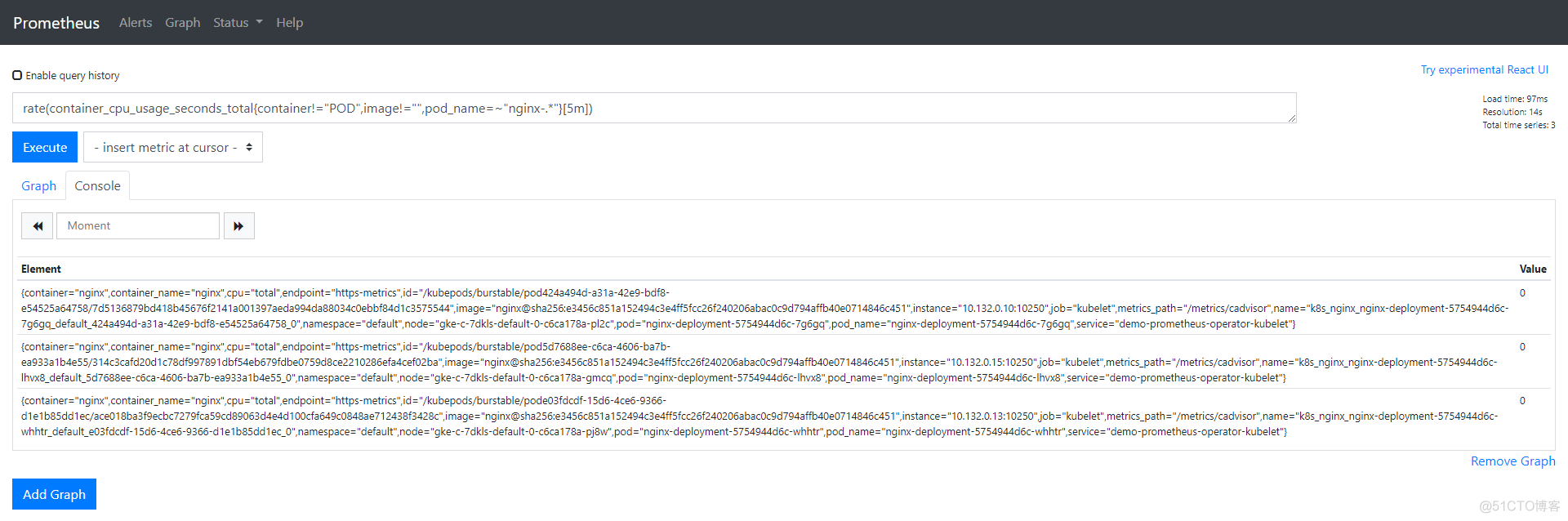

nginx-deployment-5754944d6c-whhtr 1/1 Running 0 67s在Prometheus UI中,使用我们为告警配置的相同表达式:

rate (container_cpu_usage_seconds_total{pod_name=~"nginx-.*", image!="", container!="POD"}[5m])我们可以为这些Pod检查数据,所有Pod的值应该为0。

让我们在其中一个pod中添加一些负载,然后来看看值的变化,当值大于0.04时,我们应该接收到告警:

$ kubectl exec -it nginx-deployment-5754944d6c-7g6gq -- /bin/sh

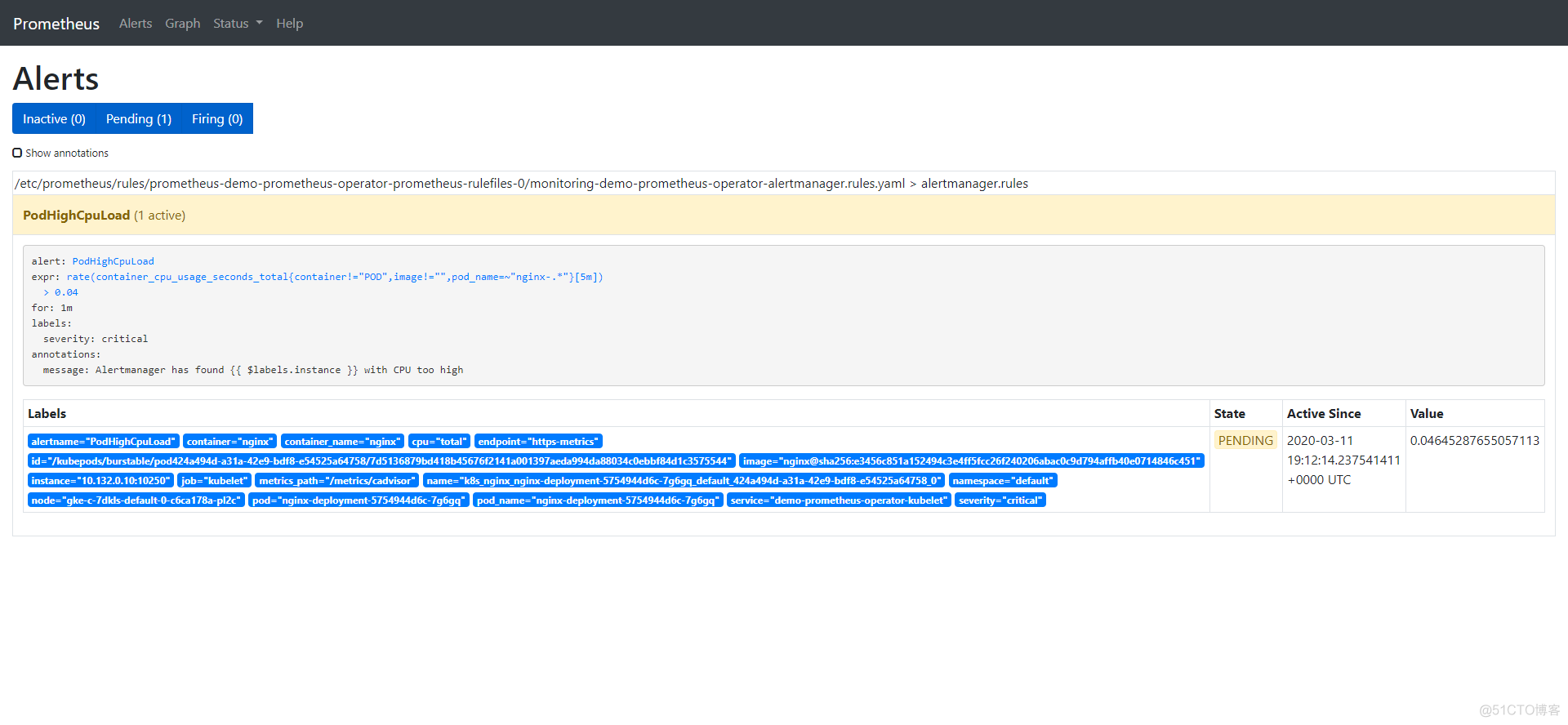

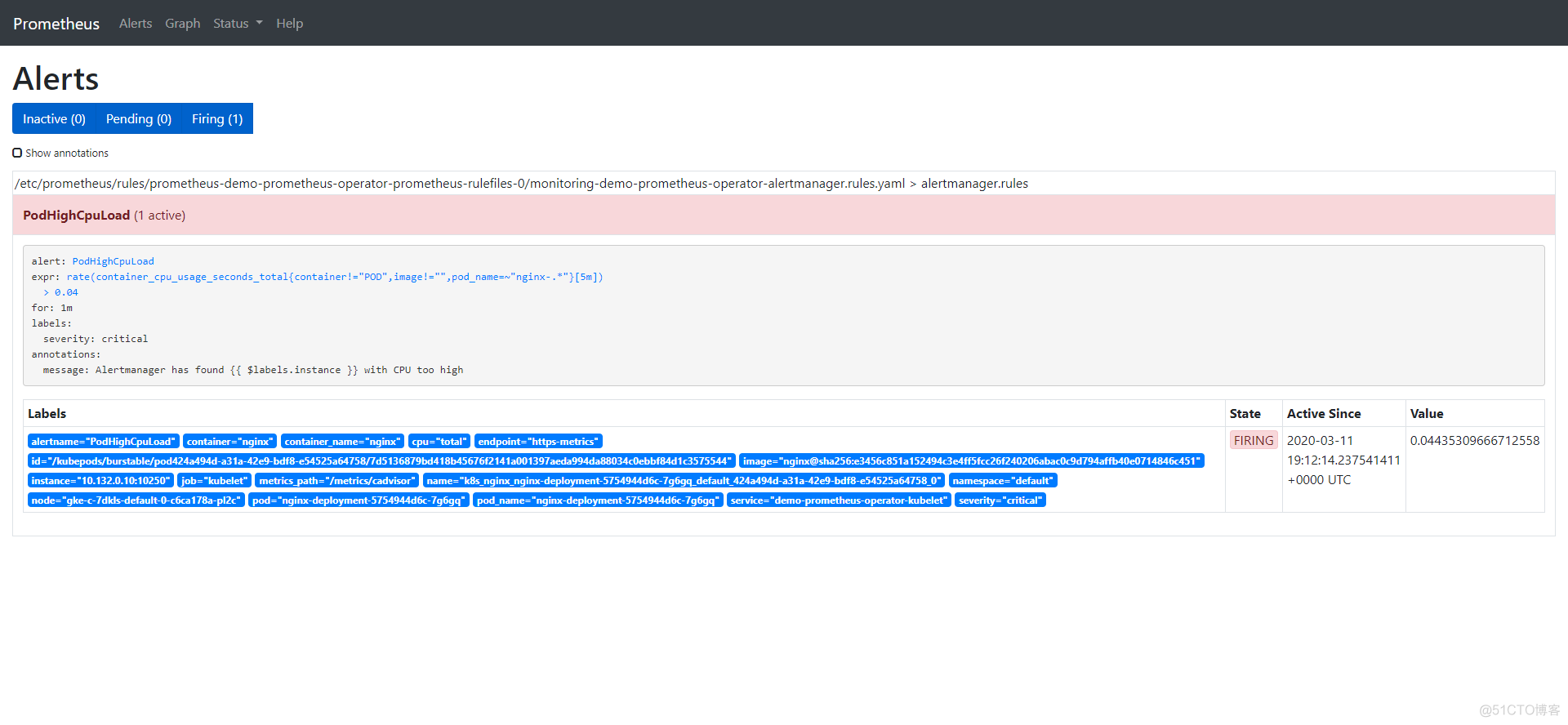

# yes > /dev/null该告警有3个阶段:

- Inactive:不满足告警触发条件

- Pending:条件已满足

- Firing:触发告警

我们已经看到告警处于inactive状态,所以在CPU上添加一些负载,以观察到剩余两种状态:

告警一旦触发,将会在Alertmanager中显示:

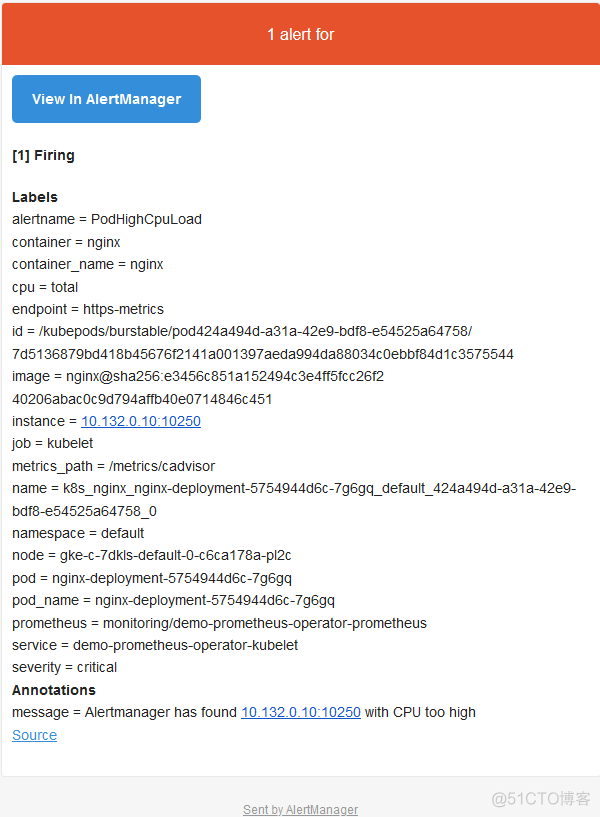

Alertmanger配置为当我们收到告警时发送邮件。所以此时,如果我们检查收件箱,会看到类似以下内容:

总 结

我们知道监控的重要性,但是如果没有告警,它将是不完整的。发生问题时,告警可以立即通知我们,让我们立即了解到系统出现了问题。而Prometheus涵盖了这两个方面:既有监控解决方案又通过Alertmanager组件发出告警。本文中,我们看到了如何在Prometheus配置中定义告警以及告警在触发时如何到达Alertmanager。然后根据Alertmanager的定义/集成,我们收到了一封电子邮件,其中包含触发的告警的详细信息(也可以通过Slack或PagerDuty发送)。

4

https://www.cnblogs.com/nb-blog/p/18101799

Kubernetes集群Prometheus Operator钉钉报警配置

文档参考《Kubernetes环境使用Prometheus Operator自发现监控SpringBoot》,各类监控项的数据采集,以及grafana的监控展示测试都正常,于是进入下一步报警的迁入测试,alertmanager原生不支持钉钉报警,所以只能通过webhook的方式,好在已经有大佬开源了一套基于prometheus 钉钉报警的webhook(项目地址https://github.com/timonwong/prometheus-webhook-dingtalk),所以我们直接配置使用就可以了。

怎么创建钉钉机器人非常简单这里就不介绍了,创建好钉钉机器人以后,下一步就是部署webhook,接收alertmanager的报警信息,格式化以后再发送给钉钉机器人。非kubernetes集群部署也是非常简单,直接编写一个docker-compose文件,直接运行就可以了。

1、在kubernetes集群中,pod之间需要通信,需要使用service,所以先编写一个kubernetes的yaml文件dingtalk-webhook.yaml。

[](javascript:void(0)😉

apiVersion: apps/v1

kind: Deployment

metadata:

name: webhook-dingtalk

namespace: monitoring

spec:

selector:

matchLabels:

app: dingtalk

replicas: 1

template:

metadata:

labels:

app: dingtalk

spec:

restartPolicy: Always

containers:

- name: dingtalk

image: timonwong/prometheus-webhook-dingtalk:v1.4.0

imagePullPolicy: IfNotPresent

args:

- '--web.enable-ui'

- '--web.enable-lifecycle'

- '--config.file=/config/config.yaml'

ports:

- containerPort: 8060

protocol: TCP

volumeMounts:

- mountPath: "/config"

name: dingtalk-volume

resources:

limits:

cpu: 100m

memory: 100Mi

requests:

cpu: 100m

memory: 100Mi

volumes:

- name: dingtalk-volume

persistentVolumeClaim:

claimName: dingding-pvc

---

apiVersion: v1

kind: Service

metadata:

name: webhook-dingtalk

namespace: monitoring

spec:

ports:

- port: 80

protocol: TCP

targetPort: 8060

selector:

app: dingtalk

sessionAffinity: None[](javascript:void(0)😉

1.1、第一种方式通过数据持久化,把配置文件config.yaml和报警模板放在了共享存储里面,这样webhook不管部署到哪台node,都可以读取到配置文件和报警模板。怎么通过NFS让数据持久化可以参考文档《Kubernetes使用StorageClass动态生成NFS类型的PV》。

dingding-pvc.yaml

[](javascript:void(0)😉

# cat dingding-pvc.yaml

kind: PersistentVolumeClaim

apiVersion: v1

metadata:

name: dingding-pvc

annotations:

volume.beta.kubernetes.io/storage-class: "nfs-client"

namespace: monitoring

spec:

accessModes:

- ReadWriteMany

resources:

requests:

storage: 50Mi[](javascript:void(0)😉

配置文件config.yaml:

templates:

- /config/template.tmpl

targets:

webhook1:

url: https://oapi.dingtalk.com/robot/send?access_token=替换成自己的钉钉机器人token报警模板template.tmpl:

[](javascript:void(0)😉

{{ define "ding.link.title" }}[监控报警]{{ end }}

{{ define "ding.link.content" -}}

{{- if gt (len .Alerts.Firing) 0 -}}

{{ range $i, $alert := .Alerts.Firing }}

[告警项目]:{{ index $alert.Labels "alertname" }}

[告警实例]:{{ index $alert.Labels "instance" }}

[告警级别]:{{ index $alert.Labels "severity" }}

[告警阀值]:{{ index $alert.Annotations "value" }}

[告警详情]:{{ index $alert.Annotations "description" }}

[触发时间]:{{ (.StartsAt.Add 28800e9).Format "2006-01-02 15:04:05" }}

{{ end }}{{- end }}

{{- if gt (len .Alerts.Resolved) 0 -}}

{{ range $i, $alert := .Alerts.Resolved }}

[项目]:{{ index $alert.Labels "alertname" }}

[实例]:{{ index $alert.Labels "instance" }}

[状态]:恢复正常

[开始]:{{ (.StartsAt.Add 28800e9).Format "2006-01-02 15:04:05" }}

[恢复]:{{ (.EndsAt.Add 28800e9).Format "2006-01-02 15:04:05" }}

{{ end }}{{- end }}

{{- end }}[](javascript:void(0)😉

可以根据自己的喜欢自己修改模板,“.EndsAt.Add 28800e9”是UTC时间+8小时,因为prometheus和alertmanager默认都是使用的UTC时间,另外需要把这两个文件的属主和属组设置成65534,不然webhook容器没有权限访问这两个文件。

1.2、第二种方式通过configMap方式(推荐)挂载配置文件和模板,需要修改原来的dingtalk-webhook.yaml文件,添加挂载为configMap。

[](javascript:void(0)😉

[root@master tmp]# cat dingtalk-webhook.yaml

apiVersion: v1

kind: ConfigMap

metadata:

name: dingtalk-config

namespace: monitoring

data:

config.yaml: |

templates:

- /config/template.tmpl

targets:

webhook1:

url: https://oapi.dingtalk.com/robot/send?access_token=d6fab51d798f81b10a464fd232d4d3ec6d2aa9ed2df3dd013c659d7c11d946ff (钉钉机器人地址)

template.tmpl: |

{{ define "ding.link.title" }}[监控报警]{{ end }}

{{ define "ding.link.content" -}}

{{- if gt (len .Alerts.Firing) 0 -}}

{{ range $i, $alert := .Alerts.Firing }}

[告警项目]:{{ index $alert.Labels "alertname" }}

[告警实例]:{{ index $alert.Labels "instance" }}

[告警级别]:{{ index $alert.Labels "severity" }}

[告警阀值]:{{ index $alert.Annotations "value" }}

[告警详情]:{{ index $alert.Annotations "description" }}

[触发时间]:{{ (.StartsAt.Add 28800e9).Format "2006-01-02 15:04:05" }}

{{ end }}{{- end }}

{{- if gt (len .Alerts.Resolved) 0 -}}

{{ range $i, $alert := .Alerts.Resolved }}

[项目]:{{ index $alert.Labels "alertname" }}

[实例]:{{ index $alert.Labels "instance" }}

[状态]:恢复正常

[开始]:{{ (.StartsAt.Add 28800e9).Format "2006-01-02 15:04:05" }}

[恢复]:{{ (.EndsAt.Add 28800e9).Format "2006-01-02 15:04:05" }}

{{ end }}{{- end }}

{{- end }}

---

apiVersion: apps/v1

kind: Deployment

metadata:

name: dingding-webhook

namespace: monitoring

spec:

selector:

matchLabels:

app: dingtalk

replicas: 1

template:

metadata:

labels:

app: dingtalk

spec:

restartPolicy: Always

containers:

- name: dingtalk

image: timonwong/prometheus-webhook-dingtalk:v1.4.0

imagePullPolicy: IfNotPresent

args:

- '--web.enable-ui'

- '--web.enable-lifecycle'

- '--config.file=/config/config.yaml'

ports:

- containerPort: 8060

protocol: TCP

volumeMounts:

- name: dingtalk-config

mountPath: "/config"

resources:

limits:

cpu: 100m

memory: 100Mi

requests:

cpu: 100m

memory: 100Mi

volumes:

- name: dingtalk-config

configMap:

name: dingtalk-config

---

apiVersion: v1

kind: Service

metadata:

name: dingding-webhook #(下面的alertmanager-secret.yaml配置中url部分会用到此名称)(- "url": "http://dingding-webhook/dingtalk/webhook1/send")

namespace: monitoring

spec:

ports:

- port: 80

protocol: TCP

targetPort: 8060

selector:

app: dingtalk

sessionAffinity: None[](javascript:void(0)😉

2、修改alertmanager默认的配置文件,增加webhook_configs,直接修改kube-prometheus-master/manifests/alertmanager-secret.yaml文件为以下内容:

[](javascript:void(0)😉

cat alertmanager-secret.yaml

apiVersion: v1

data: {}

kind: Secret

metadata:

name: alertmanager-main

namespace: monitoring

stringData:

alertmanager.yaml: |-

"global":

"resolve_timeout": "5m"

"inhibit_rules":

- "equal":

- "namespace"

- "alertname"

"source_match":

"severity": "critical"

"target_match_re":

"severity": "warning|info"

- "equal":

- "namespace"

- "alertname"

"source_match":

"severity": "warning"

"target_match_re":

"severity": "info"

"receivers":

- "name": "www.amd5.cn"

#- "name": "Watchdog"

#- "name": "Critical"

#- "name": "webhook"

"webhook_configs":

- "url": "http://dingding-webhook/dingtalk/webhook1/send" #(注意使用上面dingtalk-webhook.yaml 配置中service 的名称dingding-webhook)

"send_resolved": true

"route":

"group_by":

- "namespace"

"group_interval": "5m"

"group_wait": "30s"

"receiver": "www.amd5.cn"

"repeat_interval": "12h"

#"routes":

#- "match":

# "alertname": "Watchdog"

# "receiver": "Watchdog"

#- "match":

# "severity": "critical"

# "receiver": "Critical"[](javascript:void(0)😉

所有的yaml文件准备好以后,执行

kubectl apply -f dingding-pvc.yaml

kubectl apply -f dingtalk-webhook.yaml

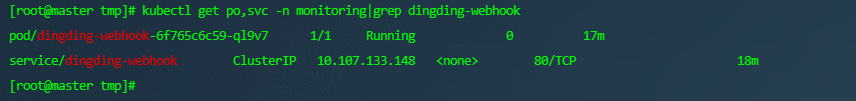

kubectl apply -f alertmanager-secret.yaml查看执行结果

[root@master tmp]# kubectl get po,svc -n monitoring|grep dingding-webhook

pod/dingding-webhook-6f765c6c59-ql9v7 1/1 Running 0 17m

service/dingding-webhook ClusterIP 10.107.133.148 <none> 80/TCP 18m

然后访问alertmanager的地址(把alertmanager.amd5.cn替换为自己的地址)查看配置webhook_configs是否已经生效,http://alertmanager.amd5.cn/#/status。

3、生效以后,我们就添加报警规则,等待触发规则阈值报警测试。

创建文件prometheus-rules.yaml在末尾添加下面的内容,注意缩进

[](javascript:void(0)😉

[root@master tmp]# cat prometheus-rules.yaml

apiVersion: monitoring.coreos.com/v1

kind: PrometheusRule

metadata:

name: prometheus-k8s-rules

namespace: monitoring

spec:

groups:

- name: 主机状态-监控告警

rules:

- alert: 节点内存

expr: (1 - (node_memory_MemAvailable_bytes / (node_memory_MemTotal_bytes))) * 100 > 85

for: 1m

labels:

severity: warning

annotations:

summary: "内存使用率过高!"

description: "节点{{$labels.instance}}内存使用大于85%(目前使用:{{$value}}%)"

- alert: 节点TCP会话

expr: node_netstat_Tcp_CurrEstab > 1000

for: 1m

labels:

severity: warning

annotations:

summary: "TCP_ESTABLISHED过高!"

description: "{{$labels.instance}} TCP_ESTABLISHED连接数大于1000"

- alert: 节点磁盘容量

expr: |

max(

(node_filesystem_size_bytes{fstype=~"ext.?|xfs"} - node_filesystem_free_bytes{fstype=~"ext.?|xfs"})

* 100 /

(node_filesystem_avail_bytes{fstype=~"ext.?|xfs"} + (node_filesystem_size_bytes{fstype=~"ext.?|xfs"} - node_filesystem_free_bytes{fstype=~"ext.?|xfs"}))

) by (instance) > 85

for: 1m

labels:

severity: warning

annotations:

summary: "节点磁盘分区使用率过高!"

description: "{{$labels.instance}} 磁盘分区使用大于80%(目前使用:{{$value}}%)"

- alert: 节点CPU

expr: |

(

100 - (

avg by (instance) (

irate(node_cpu_seconds_total{job=~".*",mode="idle"}[5m])

) * 100

)

) > 85

for: 1m

labels:

severity: warning

annotations:

summary: "节点CPU��用率过高!"

description: "{{$labels.instance}} CPU使用率大于85%(目前使用:{{$value}}%)"

# 以下为添加的报警测试规则

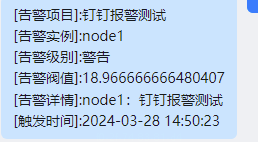

- name: 自定义报警测试

rules:

- alert: '钉钉报警测试'

expr: |

jvm_threads_live > 140

for: 1m

labels:

severity: '警告'

annotations:

summary: "{{ $labels.instance }}: 钉钉报警测试"

description: "{{ $labels.instance }}:钉钉报警测试"

custom: "钉钉报警测试"

value: "{{$value}}"[](javascript:void(0)😉

然后执行命令更新规则

kubectl apply -f prometheus-rules.yaml然后访问prometheus地址http://prometheus.amd5.cn/alerts查看rule生效情况,如下图:

由于我集群里没有配置 java 所以告警策略 改成了 CPU 告警

题外话有空在研究一下 抑制规则

在这个例子中:

source_match用于匹配源告警,即触发抑制的告警。target_match用于匹配目标告警,即被抑制的告警。equal是一个可选字段,它指定源告警和目标告警之间必须匹配的标签,用于进一步细化匹配条件。duration字段指定了抑制操作将持续的时间。即使源告警在此时间段内得到解决,目标告警的通知仍然会被抑制。

编写抑制规则时,你需要确保源告警和目标告警的匹配条件能够准确地描述你想要实现的抑制逻辑。此外,你还需要考虑抑制操作的持续时间和标签匹配,以确保抑制规则不会意外地抑制其他重要的告警通知。

请注意,抑制规则的配置可能因Alertmanager的版本而有所不同。因此,在编写抑制规则时,建议查阅你所使用的Alertmanager版本的官方文档,以获取最准确和最新的配置指南。

最后,编写完抑制规则后,你需要将它们添加到Alertmanager的配置文件中,并重新启动Alertmanager以使配置生效。

[](javascript:void(0)😉

inhibit_rules:

- source_match:

# 定义源告警的匹配条件

alertname: "SourceAlert"

severity: "critical"

target_match:

# 定义目标告警的匹配条件

alertname: "TargetAlert"

environment: "production"

# 可选:定义源告警和目标告警之间必须匹配的标签

equal:

- "instance"

# 抑制操作将持续的时间,即使源告警已经解决

duration: 1h[](javascript:void(0)😉