prometheus

Prometheus

关联Prometheus与Alertmanager

在Prometheus的架构中被划分成两个独立的部分。Prometheus负责产生告警,而Alertmanager负责告警产生后的后续处理。因此Alertmanager部署完成后,需要在Prometheus中设置Alertmanager相关的信息。

编辑Prometheus配置文件prometheus.yml,并添加以下内容

Copy

alerting:

alertmanagers:

- static_configs:

- targets: ['localhost:9093']重启Prometheus服务,成功后,可以从http://192.168.33.10:9090/config查看alerting配置是否生效

Prometheus采集配置

# 全局配置

global:

scrape_interval: 15s # 设置抓取间隔为每15秒。默认是每1分钟。

evaluation_interval: 15s # 每15秒评估一次规则。默认是每1分钟。

# scrape_timeout 设置为全局默认值(10s)。

# Alertmanager 配置

ing:

managers:

- static_configs:

- targets:

# - manager:9093

# 根据全局的 'evaluation_interval' 定期加载并评估规则。

rule_files:

# - "first_rules.yml"

# - "second_rules.yml"

# 包含一个抓取端点的抓取配置:

# 这里指的是 Prometheus 本身。

scrape_configs:

# 作业名称作为标签 `job=<job_name>` 添加到从此配置中抓取的任何时间序列上。

- job_name: 'prometheus'

# metrics_path 默认为 '/metrics'

# scheme 默认为 'http'。

static_configs:

- targets: ['localhost:9090']推送到Alertmanager 配置

# 定义一组具有特定名称的警报规则

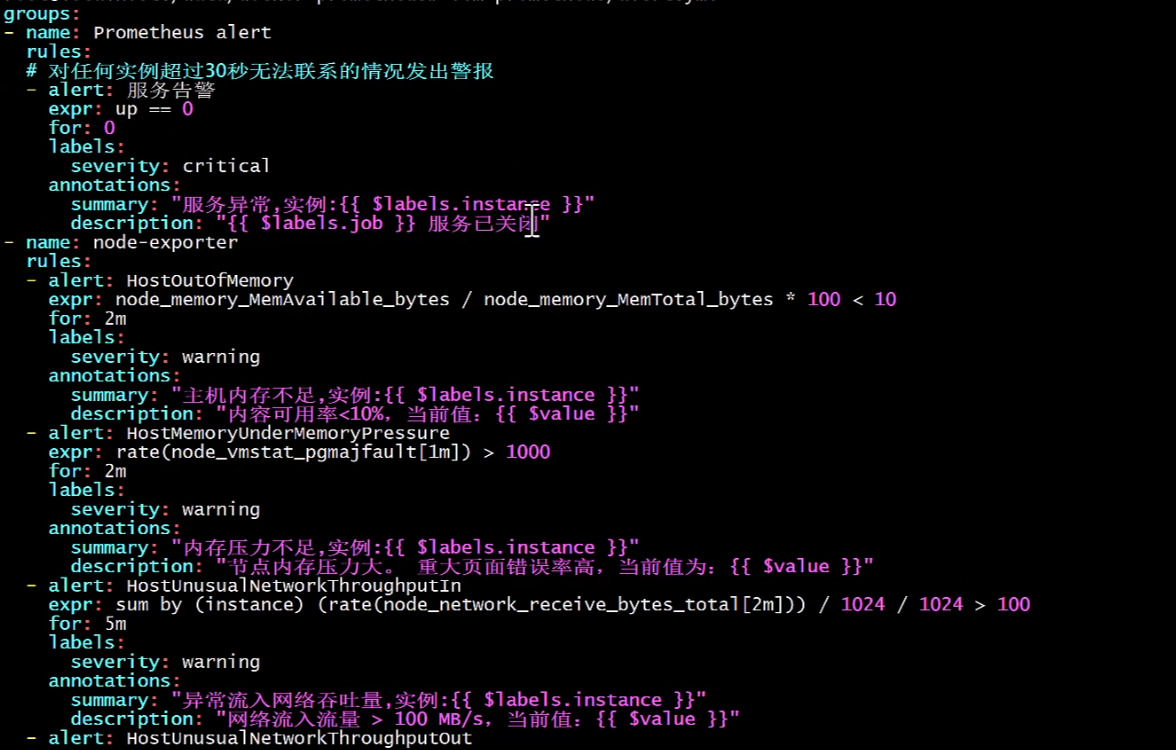

groups:

- name: example

# 在此组中列出的规则

rules:

# 定义一个单独的警报规则

# alert:告警规则的名称

- alert: HighErrorRate

# 用于触发警报的表达式

# expr:基于PromQL表达式告警触发条件,用于计算是否有时间序列满足该条件

expr: job:request_latency_seconds:mean5m{job="myjob"} > 0.5

# 表达式必须为真多长时间才能触发警报

# for:评估等待时间,可选参数。用于表示只有当触发条件持续一段时间后才发送告警。在等待期间新产生告警的状态为pending

for: 10m

# 警报的标签,可用于路由和分组警报

# labels:自定义标签,允许用户指定要附加到告警上的一组附加标签

labels:

severity: page

# 为警报提供额外上下文或详细信息的注释

# annotations:用于指定一组附加信息,比如用于描述告警详细信息的文字等,annotations的内容在告警产生时会一同作为参数发送到Alertmanager

annotations:

# 高请求延迟

summary: High request latency

# 描述信息

description: description info访问Prometheus UIhttp://127.0.0.1:9090/rules可以查看当前以加载的规则文件

http://127.0.0.1:9090/alerts可以查看当前告警的活动状态

Alertmanager启动后可以通过9093端口访问,http://192.168.33.10:9093

general.rules

Rule

alert:Watchdog

expr:vector(1)

labels:

severity: none

annotations:

description: This is an alert meant to ensure that the entire alerting pipeline is functional. This alert is always firing, therefore it should always be firing in Alertmanager and always fire against a receiver. There are integrations with various notification mechanisms that send a notification when this alert is not firing. For example the "DeadMansSnitch" integration in PagerDuty.

runbook_url: https://runbooks.prometheus-operator.dev/runbooks/general/watchdog

summary: An alert that should always be firing to certify that Alertmanager is working properly.

alert:InfoInhibitor

expr:ALERTS{severity="info"} == 1 unless on (namespace) ALERTS{alertname!="InfoInhibitor",alertstate="firing",severity=~"warning|critical"} == 1

labels:

severity: none

annotations:

description: This is an alert that is used to inhibit info alerts. By themselves, the info-level alerts are sometimes very noisy, but they are relevant when combined with other alerts. This alert fires whenever there's a severity="info" alert, and stops firing when another alert with a severity of 'warning' or 'critical' starts firing on the same namespace. This alert should be routed to a null receiver and configured to inhibit alerts with severity="info".

runbook_url: https://runbooks.prometheus-operator.dev/runbooks/general/infoinhibitor

summary: Info-level alert inhibition.kubernetes-resources

Rule

alert:CPUThrottlingHigh

expr:sum by (container, pod, namespace) (increase(container_cpu_cfs_throttled_periods_total{container!=""}[5m])) / sum by (container, pod, namespace) (increase(container_cpu_cfs_periods_total[5m])) > (25 / 100)

for: 15m

labels:

severity: info

annotations:

description: {{ $value | humanizePercentage }} throttling of CPU in namespace {{ $labels.namespace }} for container {{ $labels.container }} in pod {{ $labels.pod }}.

runbook_url: https://runbooks.prometheus-operator.dev/runbooks/kubernetes/cputhrottlinghigh

summary: Processes experience elevated CPU throttling.Alertmanager推送接收方配置

# 全局配置 用于定义一些全局的公共参数

global:

# 定义警报从激活到恢复状态时等待的时间。默认值为5分钟

# 当Alertmanager持续多长时间未接收到告警后标记告警状态为resolved(已解决)。该参数的定义可能会影响到告警恢复通知的接收时间

[ resolve_timeout: <duration> | default = 5m ]

# 设置发送警报邮件的发件人地址

[ smtp_from: <tmpl_string> ]

# 指定SMTP服务器的地址,用于发送邮件

[ smtp_smarthost: <string> ]

# 当连接到SMTP服务器时,客户端自我介绍的身份。默认值为"localhost"

[ smtp_hello: <string> | default = "localhost" ]

# SMTP服务器的认证用户名

[ smtp_auth_username: <string> ]

# SMTP服务器的认证密码

[ smtp_auth_password: <secret> ]

# SMTP认证身份,可与smtp_auth_username不同

[ smtp_auth_identity: <string> ]

# SMTP认证密钥,与smtp_auth_password功能类似

[ smtp_auth_secret: <secret> ]

# 是否需要使用TLS加密邮件传输。默认为true

[ smtp_require_tls: <bool> | default = true ]

# Slack API的URL,用于向Slack发送警报

[ slack_api_url: <secret> ]

# VictorOps API的密钥,用于向VictorOps发送警报

[ victorops_api_key: <secret> ]

# VictorOps API的URL,默认为"https://alert.victorops.com/integrations/generic/20131114/alert/"

[ victorops_api_url: <string> | default = "https://alert.victorops.com/integrations/generic/20131114/alert/" ]

# PagerDuty API的URL,默认为"https://events.pagerduty.com/v2/enqueue"

[ pagerduty_url: <string> | default = "https://events.pagerduty.com/v2/enqueue" ]

# OpsGenie API的密钥,用于向OpsGenie发送警报

[ opsgenie_api_key: <secret> ]

# OpsGenie API的URL,默认为"https://api.opsgenie.com/"

[ opsgenie_api_url: <string> | default = "https://api.opsgenie.com/" ]

# HipChat API的URL,默认为"https://api.hipchat.com/"

[ hipchat_api_url: <string> | default = "https://api.hipchat.com/" ]

# HipChat API的认证令牌

[ hipchat_auth_token: <secret> ]

# 微信企业号API的URL,默认为"https://qyapi.weixin.qq.com/cgi-bin/"

[ wechat_api_url: <string> | default = "https://qyapi.weixin.qq.com/cgi-bin/" ]

# 微信企业号API的密钥

[ wechat_api_secret: <secret> ]

# 微信企业号的CorpID

[ wechat_api_corp_id: <string> ]

# HTTP请求的配置,例如超时、重试等

[ http_config: <http_config> ]

# 警报通知的格式模板 用于定义告警通知时的模板,如HTML模板,邮件模板等

templates:

[ - <filepath> ... ]

# 配置定义了警报如何路由到接收者,可以是复杂的条件语句来决定警报的处理流程 根据标签匹配,确定当前告警应该如何处理

route: <route>

# 列表包含了所有可能接收警报通知的接收者信息 一般配合告警路由使用

receivers:

- <receiver> ...

# 一系列规则,用于抑制特定条件下重复或不必要的警报通知 减少垃圾告警的产生

inhibit_rules:

[ - <inhibit_rule> ... ]内置默认通知模板

https://github.com/prometheus/alertmanager/blob/main/template/default.tmpl

{{ define "__alertmanager" }}Alertmanager{{ end }}

{{ define "__alertmanagerURL" }}{{ .ExternalURL }}/#/alerts?receiver={{ .Receiver | urlquery }}{{ end }}

{{ define "__subject" }}[{{ .Status | toUpper }}{{ if eq .Status "firing" }}:{{ .Alerts.Firing | len }}{{ end }}] {{ .GroupLabels.SortedPairs.Values | join " " }} {{ if gt (len .CommonLabels) (len .GroupLabels) }}({{ with .CommonLabels.Remove .GroupLabels.Names }}{{ .Values | join " " }}{{ end }}){{ end }}{{ end }}

{{ define "__description" }}{{ end }}

{{ define "__text_alert_list" }}{{ range . }}Labels:

{{ range .Labels.SortedPairs }} - {{ .Name }} = {{ .Value }}

{{ end }}Annotations:

{{ range .Annotations.SortedPairs }} - {{ .Name }} = {{ .Value }}

{{ end }}Source: {{ .GeneratorURL }}

{{ end }}{{ end }}

{{ define "__text_alert_list_markdown" }}{{ range . }}

Labels:

{{ range .Labels.SortedPairs }} - {{ .Name }} = {{ .Value }}

{{ end }}

Annotations:

{{ range .Annotations.SortedPairs }} - {{ .Name }} = {{ .Value }}

{{ end }}

Source: {{ .GeneratorURL }}

{{ end }}

{{ end }}

{{ define "slack.default.title" }}{{ template "__subject" . }}{{ end }}

{{ define "slack.default.username" }}{{ template "__alertmanager" . }}{{ end }}

{{ define "slack.default.fallback" }}{{ template "slack.default.title" . }} | {{ template "slack.default.titlelink" . }}{{ end }}

{{ define "slack.default.callbackid" }}{{ end }}

{{ define "slack.default.pretext" }}{{ end }}

{{ define "slack.default.titlelink" }}{{ template "__alertmanagerURL" . }}{{ end }}

{{ define "slack.default.iconemoji" }}{{ end }}

{{ define "slack.default.iconurl" }}{{ end }}

{{ define "slack.default.text" }}{{ end }}

{{ define "slack.default.footer" }}{{ end }}

{{ define "pagerduty.default.description" }}{{ template "__subject" . }}{{ end }}

{{ define "pagerduty.default.client" }}{{ template "__alertmanager" . }}{{ end }}

{{ define "pagerduty.default.clientURL" }}{{ template "__alertmanagerURL" . }}{{ end }}

{{ define "pagerduty.default.instances" }}{{ template "__text_alert_list" . }}{{ end }}

{{ define "opsgenie.default.message" }}{{ template "__subject" . }}{{ end }}

{{ define "opsgenie.default.description" }}{{ .CommonAnnotations.SortedPairs.Values | join " " }}

{{ if gt (len .Alerts.Firing) 0 -}}

Alerts Firing:

{{ template "__text_alert_list" .Alerts.Firing }}

{{- end }}

{{ if gt (len .Alerts.Resolved) 0 -}}

Alerts Resolved:

{{ template "__text_alert_list" .Alerts.Resolved }}

{{- end }}

{{- end }}

{{ define "opsgenie.default.source" }}{{ template "__alertmanagerURL" . }}{{ end }}

{{ define "wechat.default.message" }}{{ template "__subject" . }}

{{ .CommonAnnotations.SortedPairs.Values | join " " }}

{{ if gt (len .Alerts.Firing) 0 -}}

Alerts Firing:

{{ template "__text_alert_list" .Alerts.Firing }}

{{- end }}

{{ if gt (len .Alerts.Resolved) 0 -}}

Alerts Resolved:

{{ template "__text_alert_list" .Alerts.Resolved }}

{{- end }}

AlertmanagerUrl:

{{ template "__alertmanagerURL" . }}

{{- end }}

{{ define "wechat.default.to_user" }}{{ end }}

{{ define "wechat.default.to_party" }}{{ end }}

{{ define "wechat.default.to_tag" }}{{ end }}

{{ define "wechat.default.agent_id" }}{{ end }}

{{ define "victorops.default.state_message" }}{{ .CommonAnnotations.SortedPairs.Values | join " " }}

{{ if gt (len .Alerts.Firing) 0 -}}

Alerts Firing:

{{ template "__text_alert_list" .Alerts.Firing }}

{{- end }}

{{ if gt (len .Alerts.Resolved) 0 -}}

Alerts Resolved:

{{ template "__text_alert_list" .Alerts.Resolved }}

{{- end }}

{{- end }}

{{ define "victorops.default.entity_display_name" }}{{ template "__subject" . }}{{ end }}

{{ define "victorops.default.monitoring_tool" }}{{ template "__alertmanager" . }}{{ end }}

{{ define "pushover.default.title" }}{{ template "__subject" . }}{{ end }}

{{ define "pushover.default.message" }}{{ .CommonAnnotations.SortedPairs.Values | join " " }}

{{ if gt (len .Alerts.Firing) 0 }}

Alerts Firing:

{{ template "__text_alert_list" .Alerts.Firing }}

{{ end }}

{{ if gt (len .Alerts.Resolved) 0 }}

Alerts Resolved:

{{ template "__text_alert_list" .Alerts.Resolved }}

{{ end }}

{{ end }}

{{ define "pushover.default.url" }}{{ template "__alertmanagerURL" . }}{{ end }}

{{ define "sns.default.subject" }}{{ template "__subject" . }}{{ end }}

{{ define "sns.default.message" }}{{ .CommonAnnotations.SortedPairs.Values | join " " }}

{{ if gt (len .Alerts.Firing) 0 }}

Alerts Firing:

{{ template "__text_alert_list" .Alerts.Firing }}

{{ end }}

{{ if gt (len .Alerts.Resolved) 0 }}

Alerts Resolved:

{{ template "__text_alert_list" .Alerts.Resolved }}

{{ end }}

{{ end }}

{{ define "telegram.default.message" }}

{{ if gt (len .Alerts.Firing) 0 }}

Alerts Firing:

{{ template "__text_alert_list" .Alerts.Firing }}

{{ end }}

{{ if gt (len .Alerts.Resolved) 0 }}

Alerts Resolved:

{{ template "__text_alert_list" .Alerts.Resolved }}

{{ end }}

{{ end }}

{{ define "discord.default.title" }}{{ template "__subject" . }}{{ end }}

{{ define "discord.default.message" }}

{{ if gt (len .Alerts.Firing) 0 }}

Alerts Firing:

{{ template "__text_alert_list" .Alerts.Firing }}

{{ end }}

{{ if gt (len .Alerts.Resolved) 0 }}

Alerts Resolved:

{{ template "__text_alert_list" .Alerts.Resolved }}

{{ end }}

{{ end }}

{{ define "webex.default.message" }}{{ .CommonAnnotations.SortedPairs.Values | join " " }}

{{ if gt (len .Alerts.Firing) 0 }}

Alerts Firing:

{{ template "__text_alert_list" .Alerts.Firing }}

{{ end }}

{{ if gt (len .Alerts.Resolved) 0 }}

Alerts Resolved:

{{ template "__text_alert_list" .Alerts.Resolved }}

{{ end }}

{{ end }}

{{ define "msteams.default.summary" }}{{ template "__subject" . }}{{ end }}

{{ define "msteams.default.title" }}{{ template "__subject" . }}{{ end }}

{{ define "msteams.default.text" }}

{{ if gt (len .Alerts.Firing) 0 }}

# Alerts Firing:

{{ template "__text_alert_list_markdown" .Alerts.Firing }}

{{ end }}

{{ if gt (len .Alerts.Resolved) 0 }}

# Alerts Resolved:

{{ template "__text_alert_list_markdown" .Alerts.Resolved }}

{{ end }}

{{ end }}{

"ExternalURL": "AlertManager的外部访问URL,用于提供额外的警报上下文或直接访问警报管理器",

"Status": "当前警报的状态,'firing'表示正在触发,'resolved'表示已解决",

"Receiver": "负责处理此警报的接收器配置的名称",

"GroupLabels": { // 一组公共标签,用于将多个警报分组在一起

"job": "触发警报的监控任务或Job的名称",

"instance": "触发警报的实例或主机的标识"

},

"CommonLabels": { // 所有警报共有的标签,提供了关于警报的通用信息

"severity": "警报的严重程度,例如critical、warning等",

"alertname": "警报的名称,用于识别特定类型的警报"

},

"Alerts": { // 警报的详细信息,分为正在触发的警报和已解决的警报

"Firing": [ // 正在触发的警报列表

{

"Labels": { // 与特定警报关联的标签

"instance": "触发警报的实例或主机的标识",

"job": "触发警报的监控任务或Job的名称",

"severity": "警报的严重程度,例如critical、warning等",

"alertname": "警报的名称,用于识别特定类型的警报"

},

"Annotations": { // 与特定警报关联的注释,提供额外的信息

"summary": "警报的简短总结",

"description": "警报的详细描述"

},

"GeneratorURL": "生成此警报的查询或监控规则的URL,用于进一步调查警报原因"

}

],

"Resolved": [] // 已经解决的警报列表,在此示例中没有已解决的警报

}

}钉钉机器人

ip地址 可以使用 运营商公共出口

https://tool.lu/ip/查看prometheus的alerts: http://192.168.11.61:9090/alerts 查看alertmanager的alerts: http://192.168.11.61:9093/#/alerts

博哥课程

# 监控报警规划修改

kubectl -n monitoring edit PrometheusRule kubernetes-monitoring-rules

# 通过这里可以获取需要创建的报警配置secret名称

# kubectl -n monitoring edit statefulsets.apps alertmanager-main

...

volumes:

- name: config-volume

secret:

defaultMode: 420

secretName: alertmanager-main-generated

...

# 注意事先在配置文件 alertmanager.yaml 里面编辑好收件人等信息 ,再执行下面的命令

kubectl -n monitoring delete secret alertmanager-main

kubectl -n monitoring create secret generic alertmanager-main --from-file=alertmanager.yamlglobal:

resolve_timeout: 10m

templates:

- '/etc/altermanager/config/*.tmpl'

route:

# 例如所有labelkey:labelvalue含cluster=A及altertname=LatencyHigh labelkey的告警都会被归入单一组中

group_by: ['job', 'altername', 'cluster', 'service','severity']

group_wait: 10s

group_interval: 20s

repeat_interval: 1m

# 默认告警通知接收者,凡未被匹配进入各子路由节点的告警均被发送到此接收者

receiver: 'webhook'

# 上述route的配置会被传递给子路由节点,子路由节点进行重新配置才会被覆盖

# 子路由树

routes:

# 该配置选项使用正则表达式来匹配告警的labels,以确定能否进入该子路由树

# match_re和match均用于匹配labelkey为service,labelvalue分别为指定值的告警,被匹配到的告警会将通知发送到对应的receiver

- match_re:

service: ^(foo1|foo2|baz)$

receiver: 'webhook'

# 在带有service标签的告警同时有severity标签时,他可以有自己的子路由,同时具有severity != critical的告警则被发送给接收者team-ops-wechat,对severity == critical的告警则被发送到对应的接收者即team-ops-pager

routes:

- match:

severity: critical

receiver: 'webhook'

# 比如关于数据库服务的告警,如果子路由没有匹配到相应的owner标签,则都默认由team-DB-pager接收

- match:

service: database

receiver: 'webhook'

# 我们也可以先根据标签service:database将数据库服务告警过滤出来,然后进一步将所有同时带labelkey为database

- match:

severity: critical

receiver: 'webhook'

# 抑制规则,当出现critical告警时 忽略warning

inhibit_rules:

- source_match:

severity: 'critical'

target_match:

severity: 'warning'

# Apply inhibition if the alertname is the same.

# equal: ['alertname', 'cluster', 'service']

#

# 收件人配置

receivers:

- name: 'webhook'

webhook_configs:

- url: 'http://alertmanaer-dingtalk-svc.monitoring/b01bdc063/boge/getjson'

send_resolved: trueglobal:

resolve_timeout: 10m

templates:

- '/etc/altermanager/config/*.tmpl'

route:

group_by: ['job', 'altername', 'cluster', 'service','severity']

group_wait: 10s

group_interval: 20s

repeat_interval: 1m

receiver: 'webhook'

routes:

- match_re:

service: ^(foo1|foo2|baz)$

receiver: 'webhook'

routes:

- match:

severity: critical

receiver: 'webhook'

- match:

service: database

receiver: 'webhook'

- match:

severity: critical

receiver: 'webhook'

inhibit_rules:

- source_match:

severity: 'critical'

target_match:

severity: 'warning'

receivers:

- name: 'webhook'

webhook_configs:

- url: 'http://alertmanaer-dingtalk-svc.monitoring/b01bdc063/boge/getjson'

send_resolved: true自改配置

1.关闭看门狗

2.关闭alertname = InfoInhibitor

3.关闭info级别

global:

resolve_timeout: 10m

templates:

- '/etc/altermanager/config/*.tmpl'

route:

# 例如所有labelkey:labelvalue含cluster=A及altertname=LatencyHigh labelkey的告警都会被归入单一组中

group_by: ['job', 'altername', 'cluster', 'service','severity']

group_wait: 10s

group_interval: 20s

repeat_interval: 1m

# 默认告警通知接收者,凡未被匹配进入各子路由节点的告警均被发送到此接收者

receiver: 'webhook'

# 上述route的配置会被传递给子路由节点,子路由节点进行重新配置才会被覆盖

# 子路由树

routes:

- matchers:

- alertname = Watchdog

receiver: Watchdog

- matchers:

- alertname = InfoInhibitor

receiver: null-receiver

# 该配置选项使用正则表达式来匹配告警的labels,以确定能否进入该子路由树

# match_re和match均用于匹配labelkey为service,labelvalue分别为指定值的告警,被匹配到的告警会将通知发送到对应的receiver

- match_re:

service: ^(foo1|foo2|baz)$

receiver: 'webhook'

# 在带有service标签的告警同时有severity标签时,他可以有自己的子路由,同时具有severity != critical的告警则被发送给接收者team-ops-wechat,对severity == critical的告警则被发送到对应的接收者即team-ops-pager

routes:

- match:

severity: critical

receiver: 'webhook'

# 比如关于数据库服务的告警,如果子路由没有匹配到相应的owner标签,则都默认由team-DB-pager接收

- match:

service: database

receiver: 'webhook'

# 我们也可以先根据标签service:database将数据库服务告警过滤出来,然后进一步将所有同时带labelkey为database

- match:

severity: critical

receiver: 'webhook'

# 抑制规则,当出现critical告警时 忽略warning

inhibit_rules:

- equal:

- namespace

- alertname

source_matchers:

- severity = critical

target_matchers:

- severity =~ warning|info

- equal:

- namespace

- alertname

source_matchers:

- severity = warning

target_matchers:

- severity = info

- equal:

- namespace

source_matchers:

- alertname = InfoInhibitor

target_matchers:

- severity = info

# 收件人配置

receivers:

- name: 'webhook'

webhook_configs:

- url: 'http://alertmanaer-dingtalk-svc.monitoring/b01bdc063/boge/getjson'

send_resolved: true

- name: Watchdog

- name: null-receiver[GIN-debug] POST /b01bdc063/boge/getjson --> mycli/libs.MyWebServer.func2 (3 handlers) [GIN-debug] POST /7332f19/prometheus/dingtalk --> mycli/libs.MyWebServer.func3 (3 handlers)

过滤前

[{map[alertname:CPUThrottlingHigh container:kube-rbac-proxy-main namespace:monitoring pod:kube-state-metrics-5ff4fcd9f5-4mkcw prometheus:monitoring/k8s severity:info] map[description:31.25% throttling of CPU in namespace monitoring for container kube-rbac-proxy-main in pod kube-state-metrics-5ff4fcd9f5-4mkcw. runbook_url:https://runbooks.prometheus-operator.dev/runbooks/kubernetes/cputhrottlinghigh summary:Processes experience elevated CPU throttling.] 2024-06-29 12:02:19.944 +0000 UTC 0001-01-01 00:00:00 +0000 UTC}]

过滤后

[{map[alertname:CPUThrottlingHigh container:kube-rbac-proxy-main namespace:monitoring pod:kube-state-metrics-5ff4fcd9f5-4mkcw prometheus:monitoring/k8s severity:info] map[description:31.25% throttling of CPU in namespace monitoring for container kube-rbac-proxy-main in pod kube-state-metrics-5ff4fcd9f5-4mkcw. runbook_url:https://runbooks.prometheus-operator.dev/runbooks/kubernetes/cputhrottlinghigh summary:Processes experience elevated CPU throttling.] 2024-06-29 12:02:19.944 +0000 UTC 0001-01-01 00:00:00 +0000 UTC}]

过滤字符串是: serviceA,DeadMansSnitch

response Status: 200 OK

response Headers: map[Cache-Control:[no-cache] Content-Type:[application/json] Date:[Thu, 04 Jul 2024 06:29:32 GMT] Server:[Tengine]]

[GIN] 2024/07/04 - 14:29:32 | 200 | 916.149521ms | 172.20.84.128 | POST "/7332f19/prometheus/dingtalk"

[{map[alertname:CPUThrottlingHigh container:kube-rbac-proxy-main namespace:monitoring pod:kube-state-metrics-5ff4fcd9f5-4mkcw prometheus:monitoring/k8s severity:info]

map[description:31.25% throttling of CPU in namespace monitoring for container kube-rbac-proxy-main in pod kube-state-metrics-5ff4fcd9f5-4mkcw. runbook_url:https://runbooks.prometheus-operator.dev/runbooks/kubernetes/cputhrottlinghigh summary:Processes experience elevated CPU throttling.]

2024-06-29 12:02:19.944 +0000 UTC 0001-01-01 00:00:00 +0000 UTC}]程序

会把看门狗过滤掉

会把status resolved 过滤掉global:

resolve_timeout: 5m

inhibit_rules:

- equal:

- namespace

- alertname

source_matchers:

- severity = critical

target_matchers:

- severity =~ warning|info

- equal:

- namespace

- alertname

source_matchers:

- severity = warning

target_matchers:

- severity = info

- equal:

- namespace

source_matchers:

- alertname = InfoInhibitor

target_matchers:

- severity = info

receivers:

- name: Default

- name: Watchdog

- name: Critical

- name: null

route:

group_by:

- namespace

group_interval: 5m

group_wait: 30s

receiver: Default

repeat_interval: 12h

routes:

- matchers:

- alertname = Watchdog

receiver: Watchdog

- matchers:

- alertname = InfoInhibitor

receiver: null

- matchers:

- severity = critical

receiver: CriticalAlertmanager 默认告警

1 Watchdog

"alertname": "Watchdog", "prometheus": "monitoring/k8s",

{

"status": "firing",

"labels": {

"alertname": "Watchdog",

"prometheus": "monitoring/k8s",

"severity": "none"

},

"annotations": {

"description": "This is an alert meant to ensure that the entire alerting pipeline is functional.\nThis alert is always firing, therefore it should always be firing in Alertmanager\nand always fire against a receiver. There are integrations with various notification\nmechanisms that send a notification when this alert is not firing. For example the\n\"DeadMansSnitch\" integration in PagerDuty.\n",

"runbook_url": "https://runbooks.prometheus-operator.dev/runbooks/general/watchdog",

"summary": "An alert that should always be firing to certify that Alertmanager is working properly."

},

"startsAt": "2024-06-29T03:49:16.597Z",

"endsAt": "0001-01-01T00:00:00Z",

"generatorURL": "http://prometheus-k8s-0:9090/graph?g0.expr=vector(1)&g0.tab=1",

"fingerprint": "e1749c6acab64267"

}这是一个Prometheus的警报信息,用于监控整个警报管道是否正常工作,具体字段解释如下:

"status": "firing":表示警报当前状态为触发状态,即警报正在被触发。"labels"字段包含警报的标签信息:

"alertname": "Watchdog":警报名称是“Watchdog”,这是一种特殊的警报,旨在确保从Prometheus到Alertmanager再到接收者的整个警报流程是可用的。"prometheus": "monitoring/k8s":这是警报所属的Prometheus实例标识符。"severity": "none":警报的严重性级别,在这里设置为"none",因为Watchdog警报主要是为了检测系统功能,并非指示实际问题。"annotations"包含警报的附加信息:

"description":警报的描述,说明了Watchdog警报的目的和重要性,包括它应该总是处于触发状态以及与各种通知机制的集成情况。"runbook_url":提供了关于如何处理或调查此警报的文档链接,这通常是运行手册(Runbook)的链接。"summary":警报的简短总结,强调了这个警报的重要性在于验证Alertmanager是否正常运作。"startsAt": "2024-06-29T03:49:16.597Z":警报开始时间,以UTC格式给出。"endsAt": "0001-01-01T00:00:00Z":警报结束时间,通常在"status"为"firing"时,此值会被设置为一个不可能的时间点,表示警报尚未结束。"generatorURL":生成警报的查询URL,可以访问Prometheus UI来查看触发警报的表达式或指标。"fingerprint":警报的唯一标识符,用于在多个警报中区分和识别特定警报。综上所述,这个警报是Watchdog警报,它持续触发以确认警报系统(从Prometheus到Alertmanager)的健康状况,如果该警报停止触发,则可能表明警报系统存在配置错误、无效凭据、防火墙问题或其他故障,需要检查Alertmanager的日志以进行诊断。

description: 这是一个旨在确保整个警报管道功能正常的警报。此警报始终处于触发状态,因此它应该始终在Alertmanager中触发,并且总是针对接收者触发。当此警报没有触发时,会通过与多种通知机制的集成发送通知。例如,在PagerDuty中的"DeadMansSnitch"集成。summary: 警报概要说明这是一个应该始终触发的警报,以此来证明Alertmanager是否正常工作。

2 InfoInhibitor

"groupLabels": {

"severity": "none"

},

"commonLabels": {

"prometheus": "monitoring/k8s",

"severity": "none"

},| namespace | pod | container |

|---|---|---|

| monitoring | alertmanager-main-1 | config-reloader |

| monitoring | kube-state-metrics-5ff4fcd9f5-4mkcw | kube-rbac-proxy-main |

| monitoring | alertmanager-main-0 | config-reloader |

| monitoring | alertmanager-main-2 | config-reloader |

| monitoring | prometheus-k8s-0 | config-reloader |

| monitoring | prometheus-k8s-1 | config-reloader |

{

"status": "firing",

"labels": {

"alertname": "InfoInhibitor",

"alertstate": "pending",

"container": "config-reloader",

"namespace": "monitoring",

"pod": "alertmanager-main-0",

"prometheus": "monitoring/k8s",

"severity": "none"

},

"annotations": {

"description": "This is an alert that is used to inhibit info alerts.\nBy themselves, the info-level alerts are sometimes very noisy, but they are relevant when combined with\nother alerts.\nThis alert fires whenever there's a severity=\"info\" alert, and stops firing when another alert with a\nseverity of 'warning' or 'critical' starts firing on the same namespace.\nThis alert should be routed to a null receiver and configured to inhibit alerts with severity=\"info\".\n",

"runbook_url": "https://runbooks.prometheus-operator.dev/runbooks/general/infoinhibitor",

"summary": "Info-level alert inhibition."

},

"startsAt": "2024-06-29T12:02:16.597Z",

"endsAt": "0001-01-01T00:00:00Z",

"generatorURL": "http://prometheus-k8s-0:9090/graph?g0.expr=ALERTS%7Bseverity%3D%22info%22%7D+%3D%3D+1+unless+on+%28namespace%29+ALERTS%7Balertname%21%3D%22InfoInhibitor%22%2Calertstate%3D%22firing%22%2Cseverity%3D~%22warning%7Ccritical%22%7D+%3D%3D+1&g0.tab=1",

"fingerprint": "5e99f88e032fbc60"

}这个警报信息描述了一个名为

InfoInhibitor的警报,其状态为 "firing"(触发)。该警报的目的是抑制info级别的警报。在 Prometheus Operator 的上下文中,

info级别的警报有时会产生大量通知,这可能会造成噪音。然而,当与其他警报结合时,这些信息级别的警报可能变得相关。因此,InfoInhibitor警报会在任何info严重性警报出现时触发,并在相同命名空间上开始触发更严重的warning或critical类型警报时停止触发。此警报被配置为不产生实际影响,仅作为 Alertmanager 缺失功能的一个变通方案。它应该被路由到一个空接收器(null receiver),并配置为抑制所有

severity="info"的警报。这样的配置可以在以下链接找到:Alertmanager Secret Configuration。关于此警报的具体信息:

- 警报名称:InfoInhibitor

- 警报状态:pending(待处理)

- 容器:config-reloader

- 命名空间:monitoring

- Pod:alertmanager-main-0

- Prometheus 实例:monitoring/k8s

- 严重性:none(无)

警报开始时间是2024年6月29日12:02:16.597 UTC,结束时间为默认值,意味着它持续进行中直到被抑制条件满足。

生成警报的URL指向Prometheus的图形界面,显示了触发此抑制警报的查询表达式。指纹(fingerprint)是一个唯一标识符,用于识别特定警报实例。

http://prometheus-k8s-0:9090/graph?g0.expr=ALERTS{severity="info"}+==+1+unless+on+(namespace)+ALERTS{alertname!="InfoInhibitor",alertstate="firing",severity=~"warning|critical"}+==+1&g0.tab=1

ALERTS{severity="info"} == 1

unless on (namespace)

ALERTS{alertname!="InfoInhibitor",alertstate="firing",severity=~"warning|critical"} == 1

- ALERTS{severity="info"} == 1:这部分的查询表达式检查是否有任何警报当前处于活动状态且其严重性等级为 "info"。如果存在这样的警报,那么表达式的值会是 1,否则是 0。

- unless on (namespace):

unless关键字用于创建条件逻辑,在这里,它连接了两个子查询表达式。on (namespace)表示两个表达式之间的关联点是在 "namespace" 这个标签上。这意味着第一个表达式的结果(即有 info 级别警报的命名空间)将与第二个表达式的结果进行对比。- ALERTS{alertname!="InfoInhibitor", alertstate="firing", severity=~"warning|critical"} == 1:这个子查询表达式查找在命名空间内是否存在除了 InfoInhibitor 以外的其他警报,这些警报的状态为 "firing" 并且严重性等级为 "warning" 或者 "critical"。如果存在这样的警报,表达式的值将是 1。

将这些部分组合起来,整个查询表达式的含义是:在任何命名空间中,如果有 "info" 级别的警报活动,但是没有其他更严重("warning" 或 "critical")的警报活动,则 InfoInhibitor 警报会被触发。换句话说,InfoInhibitor 警报在有 "info" 级别警报但没有更高级别警报同时存在的情况下保持活跃。

最后,

&g0.tab=1是一个附加参数,用于在 Prometheus 的图形界面中直接打开表达式查询的标签页

https://github.com/prometheus-operator/kube-prometheus/blob/main/manifests/alertmanager-secret.yaml

apiVersion: v1

kind: Secret

metadata:

labels:

app.kubernetes.io/component: alert-router

app.kubernetes.io/instance: main

app.kubernetes.io/name: alertmanager

app.kubernetes.io/part-of: kube-prometheus

app.kubernetes.io/version: 0.27.0

name: alertmanager-main

namespace: monitoring

stringData:

alertmanager.yaml: |-

"global":

"resolve_timeout": "5m"

"inhibit_rules":

- "equal":

- "namespace"

- "alertname"

"source_matchers":

- "severity = critical"

"target_matchers":

- "severity =~ warning|info"

- "equal":

- "namespace"

- "alertname"

"source_matchers":

- "severity = warning"

"target_matchers":

- "severity = info"

- "equal":

- "namespace"

"source_matchers":

- "alertname = InfoInhibitor"

"target_matchers":

- "severity = info"

"receivers":

- "name": "Default"

- "name": "Watchdog"

- "name": "Critical"

- "name": "null"

"route":

"group_by":

- "namespace"

"group_interval": "5m"

"group_wait": "30s"

"receiver": "Default"

"repeat_interval": "12h"

"routes":

- "matchers":

- "alertname = Watchdog"

"receiver": "Watchdog"

- "matchers":

- "alertname = InfoInhibitor"

"receiver": "null"

- "matchers":

- "severity = critical"

"receiver": "Critical"

type: Opaqueglobal:

resolve_timeout: 5m

inhibit_rules:

- equal:

- namespace

- alertname

source_matchers:

- severity = critical

target_matchers:

- severity =~ warning|info

- equal:

- namespace

- alertname

source_matchers:

- severity = warning

target_matchers:

- severity = info

- equal:

- namespace

source_matchers:

- alertname = InfoInhibitor

target_matchers:

- severity = info

receivers:

- name: Default

- name: Watchdog

- name: Critical

- name: null

route:

group_by:

- namespace

group_interval: 5m

group_wait: 30s

receiver: Default

repeat_interval: 12h

routes:

- matchers:

- alertname = Watchdog

receiver: Watchdog

- matchers:

- alertname = InfoInhibitor

receiver: null

- matchers:

- severity = critical

receiver: Critical- source_match:

severity: 'critical'

target_match:

severity =~ warning|info

- source_match:

severity: 'warning'

target_match:

severity = info

- equal:

- namespace

- alertname

source_matchers:

- severity = critical

target_matchers:

- severity =~ warning|info

- equal:

- namespace

- alertname

source_matchers:

- severity = warning

target_matchers:

- severity = info3 CPUThrottlingHigh

{

"receiver": "webhook",

"status": "firing",

"alerts": [{

"status": "firing",

"labels": {

"alertname": "CPUThrottlingHigh",

"container": "kube-rbac-proxy-main",

"namespace": "monitoring",

"pod": "kube-state-metrics-5ff4fcd9f5-4mkcw",

"prometheus": "monitoring/k8s",

"severity": "info"

},

"annotations": {

"description": "31.25% throttling of CPU in namespace monitoring for container kube-rbac-proxy-main in pod kube-state-metrics-5ff4fcd9f5-4mkcw.",

"runbook_url": "https://runbooks.prometheus-operator.dev/runbooks/kubernetes/cputhrottlinghigh",

"summary": "Processes experience elevated CPU throttling."

},

"startsAt": "2024-06-29T12:02:19.944Z",

"endsAt": "0001-01-01T00:00:00Z",

"generatorURL": "http://prometheus-k8s-0:9090/graph?g0.expr=sum+by+%28container%2C+pod%2C+namespace%29+%28increase%28container_cpu_cfs_throttled_periods_total%7Bcontainer%21%3D%22%22%7D%5B5m%5D%29%29+%2F+sum+by+%28container%2C+pod%2C+namespace%29+%28increase%28container_cpu_cfs_periods_total%5B5m%5D%29%29+%3E+%2825+%2F+100%29&g0.tab=1",

"fingerprint": "b4acb9eafacb59c4"

}],

"groupLabels": {

"severity": "info"

},

"commonLabels": {

"alertname": "CPUThrottlingHigh",

"container": "kube-rbac-proxy-main",

"namespace": "monitoring",

"pod": "kube-state-metrics-5ff4fcd9f5-4mkcw",

"prometheus": "monitoring/k8s",

"severity": "info"

},

"commonAnnotations": {

"description": "31.25% throttling of CPU in namespace monitoring for container kube-rbac-proxy-main in pod kube-state-metrics-5ff4fcd9f5-4mkcw.",

"runbook_url": "https://runbooks.prometheus-operator.dev/runbooks/kubernetes/cputhrottlinghigh",

"summary": "Processes experience elevated CPU throttling."

},

"externalURL": "http://alertmanager-main-1:9093",

"version": "4",

"groupKey": "{}:{severity="info"}",

"truncatedAlerts": 0

}告警的名称是"CPUThrottlingHigh",表示某个容器中的CPU节流(throttling)过高。以下是对这个告警信息的详细解释:

- 告警接收者(receiver):webhook

- 告警状态(status):firing,表示正在触发告警

- 告警源(s):包含一个告警源对象,其中包含了以下信息:

- 状态(status):firing,表示正在触发告警

- 标签(labels):包含了告警相关的元数据,如容器名称、命名空间、Pod名称等

- 注释(annotations):包含了告警的描述、处理手册URL和摘要等信息

- 开始时间(startsAt):告警开始的时间

- 结束时间(endsAt):告警结束的时间,这里是默认值,表示告警尚未结束

- 生成器URL(generatorURL):指向Prometheus查询界面的URL,用于查看告警相关的监控数据

- 指纹(fingerprint):告警的唯一标识符

- 分组标签(groupLabels):表示告警所属的分组,这里根据严重性(severity)进行分组

- 公共标签(commonLabels):表示告警的一些公共元数据,如名称、容器、命名空间等

- 公共注释(commonAnnotations):表示告警的一些公共描述信息,如描述、处理手册URL和摘要等

- 外部URL(externalURL):表示告警管理系统的外部访问地址

- 版本(version):表示告警信息的版本号

- 组键(groupKey):表示告警所属分组的键值对,这里根据严重性(severity)进行分组

- 截断告警数(truncatedAlerts):表示被截断的告警数量,这里是0,表示没有告警被截断

综上所述,这个告警信息表示在"monitoring"命名空间下的"kube-rbac-proxy-main"容器中,CPU节流达到了31.25%,可能导致进程经历较高的CPU节流。告警的处理手册URL为"https://runbooks.prometheus-operator.dev/runbooks/kubernetes/cputhrottlinghigh",可以从这里获取更多关于如何处理这种告警的信息。

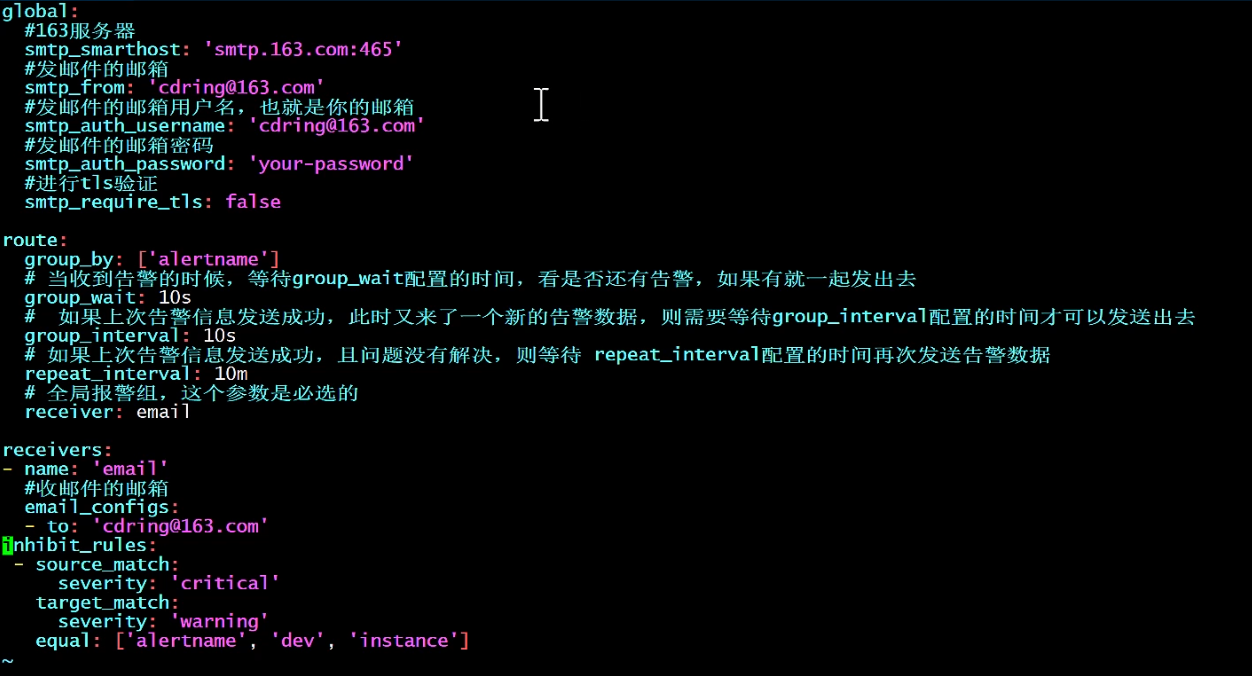

配置

1

# 配置全局的邮件发送服务器信息

global:

# 163服务器地址和端口

smtp_smarthost: 'smtp.163.com:465'

# 发送邮件的邮箱地址

smtp_from: 'cdring@163.com'

# 发送邮件的用户名(即您的邮箱)

smtp_auth_username: 'cdring@163.com'

# 进行tls验证

smtp_auth_password: 'your-password'

smtp_require_tls: false

# 设置告警路由规则

route:

# 当收到告警的时候,等待group_wait配置的时间,看是否有还有告警,如果有就一起发出去

group_by: ['alertname']

group_wait: 10s

# 如果上次告警信息发送成功,此时又来了一个新的告警数据,则需要等待group_interval配置的时间才可以发送出去

group_interval: 10m

# 如果上次告警信息发送成功,且问题没有解决,则等待 repeat_interval 配置的时间再次发送告警数据

repeat_interval: 10m

receiver: email

# 设置接收者

receivers:

- name: 'email'

# 收到告警时发送邮件至该地址

to: 'cdring@163.com'

# 抑制规则设置

inhibit_rules:

# 源匹配条件

source_match:

severity: 'critical'

# 目标匹配条件

target_match:

severity: 'warning'

# 只有当源和目标匹配项完全相同时才抑制告警

equal: ['alertname', 'dev', 'instance']2

3 博哥原版

# global块配置下的配置选项在本配置文件内的所有配置项下可见

global:

# 在Alertmanager内管理的每一条告警均有两种状态: "resolved"或者"firing". 在altermanager首次发送告警通知后, 该告警会一直处于firing状态,设置resolve_timeout可以指定处于firing状态的告警间隔多长时间会被设置为resolved状态, 在设置为resolved状态的告警后,altermanager不会再发送firing的告警通知.

resolve_timeout: 1h

# 邮件告警配置

smtp_smarthost: 'smtp.exmail.qq.com:25'

smtp_from: 'dukuan@xxx.com'

smtp_auth_username: 'dukuan@xxx.com'

smtp_auth_password: 'DKxxx'

# HipChat告警配置

# hipchat_auth_token: '123456789'

# hipchat_auth_url: 'https://hipchat.foobar.org/'

# wechat

wechat_api_url: 'https://qyapi.weixin.qq.com/cgi-bin/'

wechat_api_secret: 'JJ'

wechat_api_corp_id: 'ww'

# 告警通知模板

templates:

- '/etc/alertmanager/config/*.tmpl'

# route: 根路由,该模块用于该根路由下的节点及子路由routes的定义. 子树节点如果不对相关配置进行配置,则默认会从父路由树继承该配置选项。每一条告警都要进入route,即要求配置选项group_by的值能够匹配到每一条告警的至少一个labelkey(即通过POST请求向altermanager服务接口所发送告警的labels项所携带的<labelname>),告警进入到route后,将会根据子路由routes节点中的配置项match_re或者match来确定能进入该子路由节点的告警(由在match_re或者match下配置的labelkey: labelvalue是否为告警labels的子集决定,是的话则会进入该子路由节点,否则不能接收进入该子路由节点).

route:

# 例如所有labelkey:labelvalue含cluster=A及altertname=LatencyHigh labelkey的告警都会被归入单一组中

group_by: ['job', 'altername', 'cluster', 'service','severity']

# 若一组新的告警产生,则会等group_wait后再发送通知,该功能主要用于当告警在很短时间内接连产生时,在group_wait内合并为单一的告警后再发送

group_wait: 30s

# 再次告警时间间隔

group_interval: 5m

# 如果一条告警通知已成功发送,且在间隔repeat_interval后,该告警仍然未被设置为resolved,则会再次发送该告警通知

repeat_interval: 12h

# 默认告警通知接收者,凡未被匹配进入各子路由节点的告警均被发送到此接收者

receiver: 'wechat'

# 上述route的配置会被传递给子路由节点,子路由节点进行重新配置才会被覆盖

# 子路由树

routes:

# 该配置选项使用正则表达式来匹配告警的labels,以确定能否进入该子路由树

# match_re和match均用于匹配labelkey为service,labelvalue分别为指定值的告警,被匹配到的告警会将通知发送到对应的receiver

- match_re:

service: ^(foo1|foo2|baz)$

receiver: 'wechat'

# 在带有service标签的告警同时有severity标签时,他可以有自己的子路由,同时具有severity != critical的告警则被发送给接收者team-ops-mails,对severity == critical的告警则被发送到对应的接收者即team-ops-pager

routes:

- match:

severity: critical

receiver: 'wechat'

# 比如关于数据库服务的告警,如果子路由没有匹配到相应的owner标签,则都默认由team-DB-pager接收

- match:

service: database

receiver: 'wechat'

# 我们也可以先根据标签service:database将数据库服务告警过滤出来,然后进一步将所有同时带labelkey为database

- match:

severity: critical

receiver: 'wechat'

# 抑制规则,当出现critical告警时 忽略warning

inhibit_rules:

- source_match:

severity: 'critical'

target_match:

severity: 'warning'

# Apply inhibition if the alertname is the same.

# equal: ['alertname', 'cluster', 'service']

#

# 收件人配置

receivers:

- name: 'team-ops-mails'

email_configs:

- to: 'dukuan@xxx.com'

- name: 'wechat'

wechat_configs:

- send_resolved: true

corp_id: 'ww'

api_secret: 'JJ'

to_tag: '1'

agent_id: '1000002'

api_url: 'https://qyapi.weixin.qq.com/cgi-bin/'

message: '{{ template "wechat.default.message" . }}'

#- name: 'team-X-pager'

# email_configs:

# - to: 'team-X+alerts-critical@example.org'

# pagerduty_configs:

# - service_key: <team-X-key>

#

#- name: 'team-Y-mails'

# email_configs:

# - to: 'team-Y+alerts@example.org'

#

#- name: 'team-Y-pager'

# pagerduty_configs:

# - service_key: <team-Y-key>

#

#- name: 'team-DB-pager'

# pagerduty_configs:

# - service_key: <team-DB-key>

#

#- name: 'team-X-hipchat'

# hipchat_configs:

# - auth_token: <auth_token>

# room_id: 85

# message_format: html

# notify: true4 个人博客

global:

# 经过此时间后,如果尚未更新告警,则将告警声明为已恢复。(即prometheus没有向alertmanager发送告警了)

resolve_timeout: 5m

# 配置发送邮件信息

smtp_smarthost: 'smtp.qq.com:465'

smtp_from: '742899387@qq.com'

smtp_auth_username: '742899387@qq.com'

smtp_auth_password: 'password'

smtp_require_tls: false

# 读取告警通知模板的目录。

templates:

- '/etc/alertmanager/template/*.tmpl'

# 所有报警都会进入到这个根路由下,可以根据根路由下的子路由设置报警分发策略

route:

# 先解释一下分组,分组就是将多条告警信息聚合成一条发送,这样就不会收到连续的报警了。

# 将传入的告警按标签分组(标签在prometheus中的rules中定义),例如:

# 接收到的告警信息里面有许多具有cluster=A 和 alertname=LatencyHigh的标签,这些个告警将被分为一个组。

#

# 如果不想使用分组,可以这样写group_by: [...]

group_by: ['alertname', 'cluster', 'service']

# 第一组告警发送通知需要等待的时间,等待可以确保有足够的时间为同一分组获取多个告警,然后一起触发这个告警信息。

group_wait: 30s

# 告警组第一次发送通知后, 后续有新的告警进入告警组, 等待多长时间再发送通知

group_interval: 5m

# 告警组中没有新告警加入时, 每过多久发送一次告警

repeat_interval: 3h

# 这里先说一下,告警发送是需要指定接收器的,接收器在receivers中配置,接收器可以是email、webhook、pagerduty、wechat等等。一个接收器可以有多种发送方式。

# 指定默认的接收器

receiver: team-X-mails

# 下面配置的是子路由,子路由的属性继承于根路由(即上面的配置),在子路由中可以覆盖根路由的配置

# 下面是子路由的配置

routes:

# 使用正则的方式匹配告警标签

- match_re:

# 这里可以匹配出标签含有service=foo1或service=foo2或service=baz的告警

service: ^(foo1|foo2|baz)$

# 指定接收器为team-X-mails

receiver: team-X-mails

# 这里配置的是子路由的子路由,当满足父路由的的匹配时,这条子路由会进一步匹配出severity=critical的告警,并使用team-X-pager接收器发送告警,没有匹配到的告警会由父路由进行处理。

routes:

- match:

severity: critical

receiver: team-X-pager

# 这里也是一条子路由,会匹配出标签含有service=files的告警,并使用team-Y-mails接收器发送告警

- match:

service: files

receiver: team-Y-mails

# 这里配置的是子路由的子路由,当满足父路由的的匹配时,这条子路由会进一步匹配出severity=critical的告警,并使用team-Y-pager接收器发送告警,没有匹配到的会由父路由进行处理。

routes:

- match:

severity: critical

receiver: team-Y-pager

# 该路由处理来自数据库服务的所有警报。如果没有团队来处理,则默认为数据库团队。

- match:

# 首先匹配标签service=database

service: database

# 指定接收器

receiver: team-DB-pager

# 根据受影响的数据库对告警进行分组

group_by: [alertname, cluster, database]

routes:

- match:

owner: team-X

receiver: team-X-pager

# 告警是否继续匹配后续的同级路由节点,默认false,下面如果也可以匹配成功,会向两种接收器都发送告警信息(猜测。。。)

continue: true

- match:

owner: team-Y

receiver: team-Y-pager

# 下面是关于inhibit(抑制)的配置,先说一下抑制是什么:抑制规则允许在另一个警报正在触发的情况下使一组告警静音。其实可以理解为告警依赖。比如一台数据库服务器掉电了,会导致db监控告警、网络告警等等,可以配置抑制规则如果服务器本身down了,那么其他的报警就不会被发送出来。

inhibit_rules:

#下面配置的含义:当有多条告警在告警组里时,并且他们的标签alertname,cluster,service都相等,如果severity: 'critical'的告警产生了,那么就会抑制severity: 'warning'的告警。

- source_match: # 源告警(我理解是根据这个报警来抑制target_match中匹配的告警)

severity: 'critical' # 标签匹配满足severity=critical的告警作为源告警

target_match: # 目标告警(被抑制的告警)

severity: 'warning' # 告警必须满足标签匹配severity=warning才会被抑制。

equal: ['alertname', 'cluster', 'service'] # 必须在源告警和目标告警中具有相等值的标签才能使抑制生效。(即源告警和目标告警中这三个标签的值相等'alertname', 'cluster', 'service')

# 下面配置的是接收器

receivers:

# 接收器的名称、通过邮件的方式发送、

- name: 'team-X-mails'

email_configs:

# 发送给哪些人

- to: 'team-X+alerts@example.org'

# 是否通知已解决的警报

send_resolved: true

# 接收器的名称、通过邮件和pagerduty的方式发送、发送给哪些人,指定pagerduty的service_key

- name: 'team-X-pager'

email_configs:

- to: 'team-X+alerts-critical@example.org'

pagerduty_configs:

- service_key: <team-X-key>

# 接收器的名称、通过邮件的方式发送、发送给哪些人

- name: 'team-Y-mails'

email_configs:

- to: 'team-Y+alerts@example.org'

# 接收器的名称、通过pagerduty的方式发送、指定pagerduty的service_key

- name: 'team-Y-pager'

pagerduty_configs:

- service_key: <team-Y-key>

# 一个接收器配置多种发送方式

- name: 'ops'

webhook_configs:

- url: 'http://prometheus-webhook-dingtalk.kube-ops.svc.cluster.local:8060/dingtalk/webhook1/send'

send_resolved: true

email_configs:

- to: '742899387@qq.com'

send_resolved: true

- to: 'soulchild@soulchild.cn'

send_resolved: true回复

【1. 钉钉机器人】

如果想用钉钉webhook机器人测试但是自己本地没有公网IP的可以使用运营商网络公共出口ip地址作为白名单,具体查询可以访问ip138.com查询(可能不够安全,但是token没泄露自己用来测试问题不大)【2. 告警】

先使用/b01bdc063/boge/getjson接口接收告警,我的会一直持续收到:Watchdog、CPUThrottlingHigh、InfoInhibitor这三类告警。

告警条件、模板可在https://prometheus.boge.com/rules查看

【2.1 Watchdog】

{

"status": "firing",

"labels": {

"alertname": "Watchdog",

"prometheus": "monitoring/k8s",

"severity": "none"

},

"annotations": {

"description": "This is an alert meant to ensure that the entire alerting pipeline is functional.\nThis alert is always firing, therefore it should always be firing in Alertmanager\nand always fire against a receiver. There are integrations with various notification\nmechanisms that send a notification when this alert is not firing. For example the\n\"DeadMansSnitch\" integration in PagerDuty.\n",

"runbook_url": "https://runbooks.prometheus-operator.dev/runbooks/general/watchdog",

"summary": "An alert that should always be firing to certify that Alertmanager is working properly."

},

"startsAt": "2024-06-29T03:49:16.597Z",

"endsAt": "0001-01-01T00:00:00Z",

"generatorURL": "http://prometheus-k8s-0:9090/graph?g0.expr=vector%281%29\u0026g0.tab=1",

"fingerprint": "e1749c6acab64267"

}

【2.2 InfoInhibitor】

{

"status": "firing",

"labels": {

"alertname": "InfoInhibitor",

"alertstate": "firing",

"container": "config-reloader",

"namespace": "monitoring",

"pod": "alertmanager-main-1",

"prometheus": "monitoring/k8s",

"severity": "none"

},

"annotations": {

"description": "This is an alert that is used to inhibit info alerts.\nBy themselves, the info-level alerts are sometimes very noisy, but they are relevant when combined with\nother alerts.\nThis alert fires whenever there's a severity=\"info\" alert, and stops firing when another alert with a\nseverity of 'warning' or 'critical' starts firing on the same namespace.\nThis alert should be routed to a null receiver and configured to inhibit alerts with severity=\"info\".\n",

"runbook_url": "https://runbooks.prometheus-operator.dev/runbooks/general/infoinhibitor",

"summary": "Info-level alert inhibition."

},

"startsAt": "2024-06-29T11:35:16.597Z",

"endsAt": "0001-01-01T00:00:00Z",

"generatorURL": "http://prometheus-k8s-0:9090/graph?g0.expr=ALERTS%7Bseverity%3D%22info%22%7D+%3D%3D+1+unless+on+%28namespace%29+ALERTS%7Balertname%21%3D%22InfoInhibitor%22%2Calertstate%3D%22firing%22%2Cseverity%3D~%22warning%7Ccritical%22%7D+%3D%3D+1\u0026g0.tab=1",

"fingerprint": "fd0310eb1c236cd9"

}

【2.3 InfoInhibitor】

{

"status": "firing",

"labels": {

"alertname": "CPUThrottlingHigh",

"container": "config-reloader",

"namespace": "monitoring",

"pod": "alertmanager-main-1",

"prometheus": "monitoring/k8s",

"severity": "info"

},

"annotations": {

"description": "35% throttling of CPU in namespace monitoring for container config-reloader in pod alertmanager-main-1.",

"runbook_url": "https://runbooks.prometheus-operator.dev/runbooks/kubernetes/cputhrottlinghigh",

"summary": "Processes experience elevated CPU throttling."

},

"startsAt": "2024-06-29T11:34:49.944Z",

"endsAt": "0001-01-01T00:00:00Z",

"generatorURL": "http://prometheus-k8s-0:9090/graph?g0.expr=sum+by+%28container%2C+pod%2C+namespace%29+%28increase%28container_cpu_cfs_throttled_periods_total%7Bcontainer%21%3D%22%22%7D%5B5m%5D%29%29+%2F+sum+by+%28container%2C+pod%2C+namespace%29+%28increase%28container_cpu_cfs_periods_total%5B5m%5D%29%29+%3E+%2825+%2F+100%29\u0026g0.tab=1",

"fingerprint": "1d92e83e5b4125ec"

}

kubectl -n monitoring edit PrometheusRule kubernetes-monitoring-rules

/7332f19/prometheus/dingtalk【3.过滤清洗告警】

【3.1 Watchdog的过滤】

在博哥的日志清洗工具中过滤掉了。在容器alertmanaer-webhook中的日志服务启动参数中的"serviceA,DeadMansSnitch"是负责在接受到每个alert的annotations.description字段中匹配是否存在"serviceA"或“DeadMansSnitch"。其中DeadMansSnitch则是存在名为Watchdog的alert里的description字段里。另一个"serviceA"应该是一个示例。

---

kind: Deployment

metadata:

name: alertmanaer-dingtalk-dp

spec:

template:

spec:

containers:

- name: alertmanaer-webhook

image: harbor.boge.com/product/alertmanaer-webhook:1.0

args:

- web

- "https://oapi.dingtalk.com/robot/send?access_token=xxxxxxxxxxxxxxxxxxxxxxx"

- "9999"

- "serviceA,DeadMansSnitch"

---

过滤方案二(默认配置文件有,但是博哥课程去掉了):

route:

routes:

- matchers:

- alertname = Watchdog

receiver: 'Watchdog'【3.2 InfoInhibitor和CPUThrottlingHigh的过滤】

当有 severity="info" 的警报发生时,InfoInhibitor 会被触发,提示要抑制 severity="info" 的警报。这个警报应该被配置为路由到一个“null receiver”,也就是不会发送任何通知的接收器,这样即使 InfoInhibitor 被触发,也不会有任何实际的通知发送出去。

而CPUThrottlingHigh的告警级别就是severity="info",导致告警InfoInhibitor也频繁出现

InfoInhibitor 这个警报的主要用途是在 Prometheus 监控系统中抑制或阻止信息级别(severity="info")的警报。信息级别的警报通常用来提供一些运行时的状态或信息,但这些警报可能会非常频繁,产生大量的通知,这被称为“噪音”,可能对运维团队造成干扰,尤其是在处理更为重要的警告和关键警报时。

可以添加下面路由和抑制规则进行过滤(默认配置文件有,但是博哥课程去掉了):

---

route:

routes:

- matchers:

- alertname = InfoInhibitor

receiver: 'null-receivers'

inhibit_rules:

- equal:

- namespace

source_matchers:

- alertname = InfoInhibitor

target_matchers:

- severity = info

receivers:

- name: null-receivers

---

抑制规则:(alertname = InfoInhibitor)活动时,阻止所有信息级别的警报(severity = info)

理由规则:把InfoInhibitor路由到一个空的接收者(名字不能为null)思考:当InfoInhibitor为resolved时呢

3.CPUThrottlingHigh的过滤

CPUThrottlingHigh的告警级别就是severity="info",在上一个规则抑制一共3个配置

prometheus rules

kubectl -n monitoring edit PrometheusRule kubernetes-monitoring-rulesprometheus-->alertmanager

alertmanager-->webhook

# my global config

global:

scrape_interval: 15s # Set the scrape interval to every 15 seconds. Default is every 1 minute.

evaluation_interval: 15s # Evaluate rules every 15 seconds. The default is every 1 minute.

# scrape_timeout is set to the global default (10s).

# Alertmanager configuration

alerting:

alertmanagers:

- static_configs:

- targets:

# - alertmanager:9093

# Load rules once and periodically evaluate them according to the global 'evaluation_interval'.

rule_files:

# - "first_rules.yml"

# - "second_rules.yml"

# A scrape configuration containing exactly one endpoint to scrape:

# Here it's Prometheus itself.

scrape_configs:

# The job name is added as a label `job=<job_name>` to any timeseries scraped from this config.

- job_name: 'prometheus'

# metrics_path defaults to '/metrics'

# scheme defaults to 'http'.

static_configs:

- targets: ['localhost:9090']job_name就是servicemonitor

再根据rule进行推送

Dea

思考:多久push一次到alertmanager

global:

scrape_interval: 30s

scrape_timeout: 10s

evaluation_interval: 30s

external_labels:

prometheus: monitoring/k8s

prometheus_replica: prometheus-k8s-0

scrape_interval: 这个参数定义了 Prometheus 从目标服务(如应用程序、数据库等)抓取监控数据的时间间隔。如果监控数据中包含了警报触发条件,那么这些警报会在每次抓取后被 Prometheus 推送给 Alertmanager。

evaluation_interval: 这个参数定义了 Prometheus 对监控规则进行评估的时间间隔。监控规则定义了如何根据监控数据生成警报。如果警报规则匹配,Prometheus 会将警报推送到 Alertmanager。

alerting: alertmanagers: static_configs: targets: 这个配置指定了 Alertmanager 的地址,告诉 Prometheus 将警报推送到哪里。

通常情况下,Prometheus 会根据 scrape_interval 和 evaluation_interval 的设置来判定何时有新的警报需要推送到 Alertmanager。例如,如果 scrape_interval 设置为 1 分钟,evaluation_interval 设置为 1 分钟,那么每分钟 Prometheus 将抓取一次监控数据,并且每分钟评估一次警报规则,生成需要推送给 Alertmanager 的警报。

for 15m

告警设置的持续时间为15分钟,意味着只有当上述条件连续满足15分钟,,告警才会真正发出。这个告警的严重级别被标记为infokubectl -n monitoring get PrometheusRule kube-prometheus-rules -o yaml > kube-prometheus-rules.yaml

kubectl -n monitoring edit PrometheusRule kube-prometheus-rules- alert: '恒真报警测试'

expr: 1 == 1

for: 1s

labels:

severity: critical

annotations:

summary: "恒真报警测试"

description: "恒真报警测试"

groups:

- name: '自定义报警测试'

rules:

- alert: '恒真报警测试'

annotations:

description: '恒真报警测试'

summary: '恒真报警测试'

expr: 1 == 1

for: 1s

labels:

severity: critical groups:

- name: general.rules

rules:

- alert: TargetDown

annotations:

description: '{{ printf "%.4g" $value }}% of the {{ $labels.job }}/{{ $labels.service

}} targets in {{ $labels.namespace }} namespace are down.'

runbook_url: https://runbooks.prometheus-operator.dev/runbooks/general/targetdown

summary: One or more targets are unreachable.

expr: 100 * (count(up == 0) BY (cluster, job, namespace, service) / count(up)

BY (cluster, job, namespace, service)) > 10

for: 10m

labels:

severity: warning待测试

alertmanager页面

http://192.168.33.10:9093

10.68.199.109:9093

# vim alertmanager-ingress.yaml

apiVersion: networking.k8s.io/v1

kind: Ingress

metadata:

name: alertmanager

spec:

rules:

- host: alertmanager.boge.com

http:

paths:

- backend:

service:

name: alertmanager-main

port:

number: 9093

path: /

pathType: Prefix# kubectl -n monitoring apply -f alertmanager-ingress.yamlrule备份

apiVersion: monitoring.coreos.com/v1

kind: PrometheusRule

metadata:

labels:

role: alert-rules

prometheus: k8s

namespace: monitoring

name: test-rules

namespace: monitoring

spec:

groups:

- name: test

rules:

- alert: test-alert-critical

annotations:

summary: test-alert-critical-summary

description: test-alert-critical-description

expr: |

vector(1)

for: 5s

labels:

severity: critical

- alert: test-alert-info

annotations:

summary: test-alert-info-summary

description: test-alert-info-description

expr: |

vector(1)

for: 5s

labels:

severity: info

- alert: test-alert-warning

annotations:

summary: test-alert-warning-summary

description: test-alert-warning-description

expr: |

vector(1)

for: 5s

labels:

severity: warning

- name: demo

rules:

- alert: nodeDiskUsage

annotations:

description: |

节点 {{$labels.instance }}

挂载目录 {{ $labels.mountpoint }}

当前可用空间 {{ printf "%.2f" $value }}%

summary: |

挂载目录可用空间低于 50%

expr: |

node_filesystem_avail_bytes{fstype!="",job="node-exporter"} /

node_filesystem_size_bytes{fstype!="",job="node-exporter"} * 100 < 70

for: 10s

labels:

severity: info参考

https://www.cnblogs.com/c-moon/p/16797218.html#31%E5%88%9B%E5%BB%BA%E6%8A%A5%E8%AD%A6%E8%A7%84%E5%88%99

https://zhuanlan.zhihu.com/p/646747402

原 https://www.soulchild.cn/post/2073/

https://cloud.tencent.com/developer/article/2129822

https://blog.csdn.net/hancoder/article/details/121703904

https://blog.csdn.net/mingongge/article/details/134067518

https://cakepanit.com/forward/dc57d8c5.html