Level 03: Deploying K8s

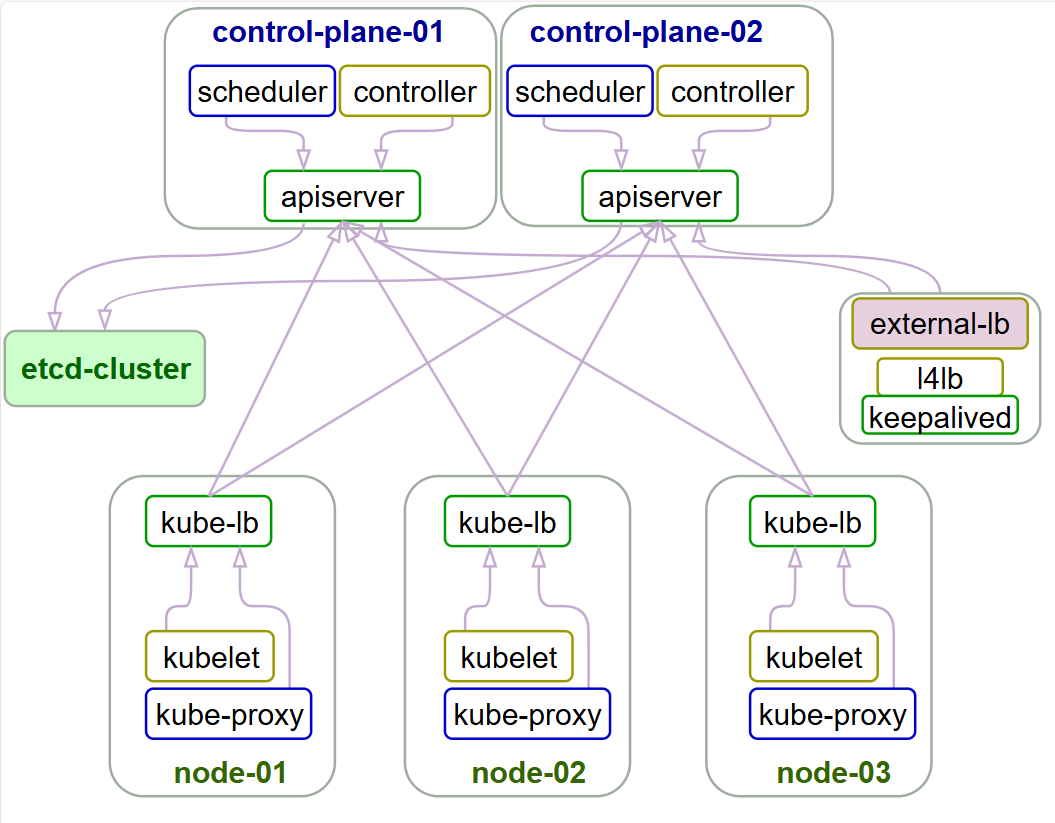

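Architecture planning

Use an odd number of control-plane (master/etcd) nodes

An odd number avoids wasting resources.

etcd, the datastore behind Kubernetes, uses the Raft consensus algorithm. Raft requires more than n/2 healthy members (n being the number of master nodes) before the cluster can serve requests.

So 3 master nodes need 2 healthy members and tolerate the loss of 1 node. 4 master nodes need 3 healthy members and still tolerate the loss of only 1 node.

Three or five nodes is also a matter of balance.

A single node has no fault tolerance at all. Seven or more master nodes increase the overhead of determining cluster membership and reaching quorum, so they are not recommended.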
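The quorum arithmetic behind these numbers can be checked with a short shell sketch. A Raft cluster of n voting members needs floor(n/2)+1 of them healthy, so it tolerates n - (floor(n/2)+1) failures. The function names `quorum` and `fault_tolerance` are illustrative only and not part of any tool.

```shell
#!/usr/bin/env bash
# Raft quorum arithmetic (illustrative helpers, not part of kubeasz).
# Bash integer division truncates, so $(( n / 2 )) is floor(n/2) for n >= 0.

# Minimum number of healthy members a cluster of n members needs.
quorum() { echo $(( $1 / 2 + 1 )); }

# Number of members that can fail while the cluster stays available.
fault_tolerance() { echo $(( $1 - ($1 / 2 + 1) )); }

for n in 1 2 3 4 5 6 7; do
  printf 'members=%d quorum=%d tolerated_failures=%d\n' \
    "$n" "$(quorum "$n")" "$(fault_tolerance "$n")"
done
```

Going from 3 to 4 members raises the quorum from 2 to 3 without raising the number of tolerated failures. That is why an even member count only adds cost.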

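Split brain?

This can happen in Elasticsearch, ZooKeeper and Kubernetes clusters alike.

The Master or Leader node of a cluster is usually chosen by election.

When the network is healthy, a Leader is elected without trouble. But if the network link between two data centers fails, the election may produce two Leaders, one in each network partition. When the network recovers, how should the two Leaders synchronize their data, and which one should the cluster follow? This is the "split brain" problem.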
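Majority-based election keeps a Raft cluster such as etcd out of this situation. A partition can elect a Leader only if it holds more than half of all members, and at most one partition can do that. The sketch below checks each side of a partition with a hypothetical `can_elect_leader` helper.

```shell
#!/usr/bin/env bash
# can_elect_leader <members_in_partition> <total_members>
# Succeeds (exit 0) only if the partition holds a strict majority of all members.
can_elect_leader() {
  local part=$1 total=$2
  (( part * 2 > total ))
}

# A 5-member cluster split 3/2 by a network failure: only the 3-member side can elect.
for side in 3 2; do
  if can_elect_leader "$side" 5; then
    echo "partition of $side/5: can elect a leader"
  else
    echo "partition of $side/5: cannot elect a leader"
  fi
done
```

If a 4-member cluster splits 2/2, neither side reaches a majority. The cluster stops accepting writes instead of diverging into two Leaders.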

Below is the IP plan for this VM cluster, made before installation. It fully simulates the K8S cluster of a small or medium-sized company:

| IP | hostname | role | resource |
|---|---|---|---|
| 192.168.10.201 | node-1 | master/worker node | 2c/4g (ingress-nginx) |
| 192.168.10.202 | node-2 | master/worker node | 2c/4g (harbor?) |
| 192.168.10.203 | node-3 | worker node | 2c/4g |
| 192.168.10.204 | node-4 | worker node | 2c/4g |
| 192.168.10.205 | node-5 | worker node | 2c/4g |
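Hostnames follow the last octet of the IP (201 is node-1, 202 is node-2, and so on), so the plan can be turned into `/etc/hosts` entries mechanically. This is a minimal sketch that assumes the 192.168.10.201-205 range from the table. `hostname_for_ip` and `hosts_entries` are hypothetical helpers.

```shell
#!/usr/bin/env bash
# Assumption: node-N is the host whose IP ends in 200+N, as in the plan above.
hostname_for_ip() {
  local last_octet=${1##*.}          # strip everything up to the last dot
  echo "node-$(( last_octet - 200 ))"
}

# hosts_entries <network_prefix> <first_octet> <last_octet>
hosts_entries() {
  local prefix=$1 first=$2 last=$3 i
  for (( i = first; i <= last; i++ )); do
    printf '%s.%d %s\n' "$prefix" "$i" "$(hostname_for_ip "$prefix.$i")"
  done
}

hosts_entries 192.168.10 201 205
```

Running `hosts_entries 192.168.10 201 205 | tee -a /etc/hosts` as root, once on each node, appends the entries.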

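This guide uses the open-source project easzlab/kubeasz, which installs Kubernetes from binaries and meets the requirements for stable enterprise operation. It is an excellent project. The author has watched its star count grow from 0 to the current 9.6k, and it keeps iterating, roughly following the Kubernetes release cadence.

In his day-to-day work, Boge has built self-managed production K8S clusters serving business workloads in regions around the world (China, North America, Europe, Southeast Asia). He has done so on all the major cloud providers (Alibaba Cloud, Huawei Cloud, Tencent Cloud, Baidu Cloud, Volcano Engine, China Mobile Cloud, AWS, Google Cloud, etc.), always with this installer. The longest-running clusters have been up for about 4 years without any major problems.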

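- Note 1: make sure all nodes use the same time zone and keep their clocks synchronized. If your environment does not provide NTP time synchronization, install chrony through kubeasz's integrated option.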

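Node configuration required for a highly available cluster:

| Role | Count | Description |
|---|---|---|
| Deploy node | 1 | Runs the ansible/ezctl commands; usually the first master node doubles as the deploy node |
| etcd node | 3 | The etcd cluster needs an odd number of nodes (1, 3, 5, ...); usually co-located on the master nodes |
| master node | 2 | An HA cluster needs at least 2 master nodes |
| worker node | n | Runs application workloads; upgrade machine specs or add nodes as needed |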

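Machine specs:

- master node: 4c / 8g RAM / 50g disk
- worker node: 8c / 32g RAM / 200g disk or more recommended

Note: with the default configuration, the container runtime and kubelet use disk space under /var. If your disks are partitioned differently, set the container runtime and kubelet data directories in config.yml: CONTAINERD_STORAGE_DIR, DOCKER_STORAGE_DIR and KUBELET_ROOT_DIR.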
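These keys can be changed with the same `sed -ri` pattern the install script later uses for `CLUSTER_NAME`. This sketch makes some assumptions:

- Each key appears in config.yml as a `KEY: "value"` line at the start of a line.
- The base directory passed in (for example the data-disk mount point) is your choice.
- `set_data_dirs` is a hypothetical helper.

```shell
#!/usr/bin/env bash
# set_data_dirs <config.yml> <base_dir>
# Points the container runtime and kubelet data directories at <base_dir>/<name>.
set_data_dirs() {
  local cfg=$1 base=$2 pair key dir
  for pair in CONTAINERD_STORAGE_DIR:containerd DOCKER_STORAGE_DIR:docker KUBELET_ROOT_DIR:kubelet; do
    key=${pair%%:*}   # part before the colon
    dir=${pair#*:}    # part after the colon
    # Replace the whole value of the line starting with "KEY:"; "+" is the sed delimiter
    sed -ri "s+^(${key}:).*$+\1 \"${base}/${dir}\"+" "$cfg"
  done
}
```

For example, `set_data_dirs /etc/kubeasz/clusters/<cluster>/config.yml /var/lib/container`, where `<cluster>` is your cluster name.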

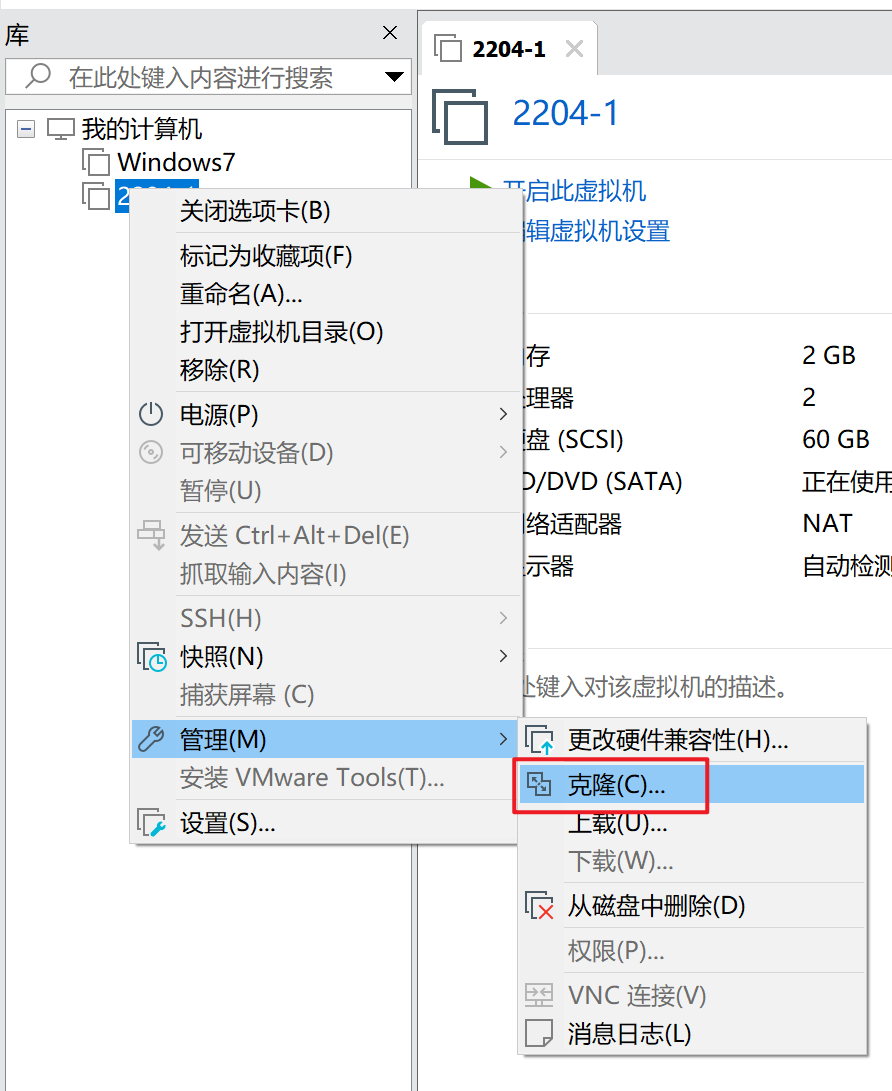

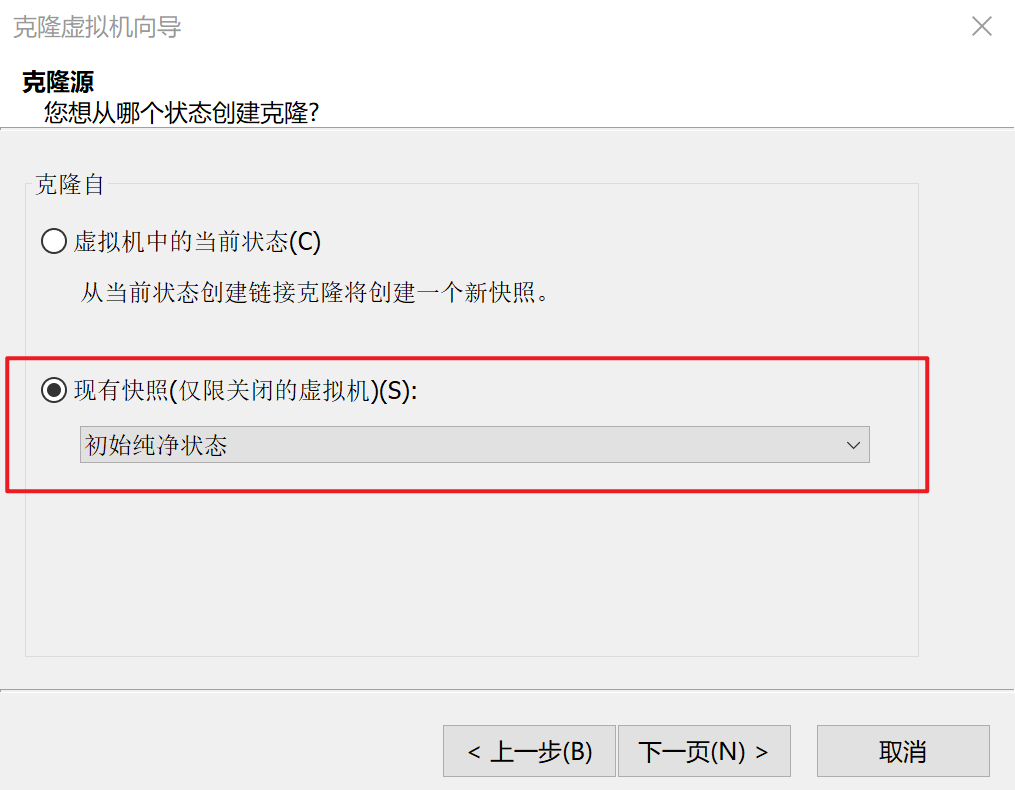

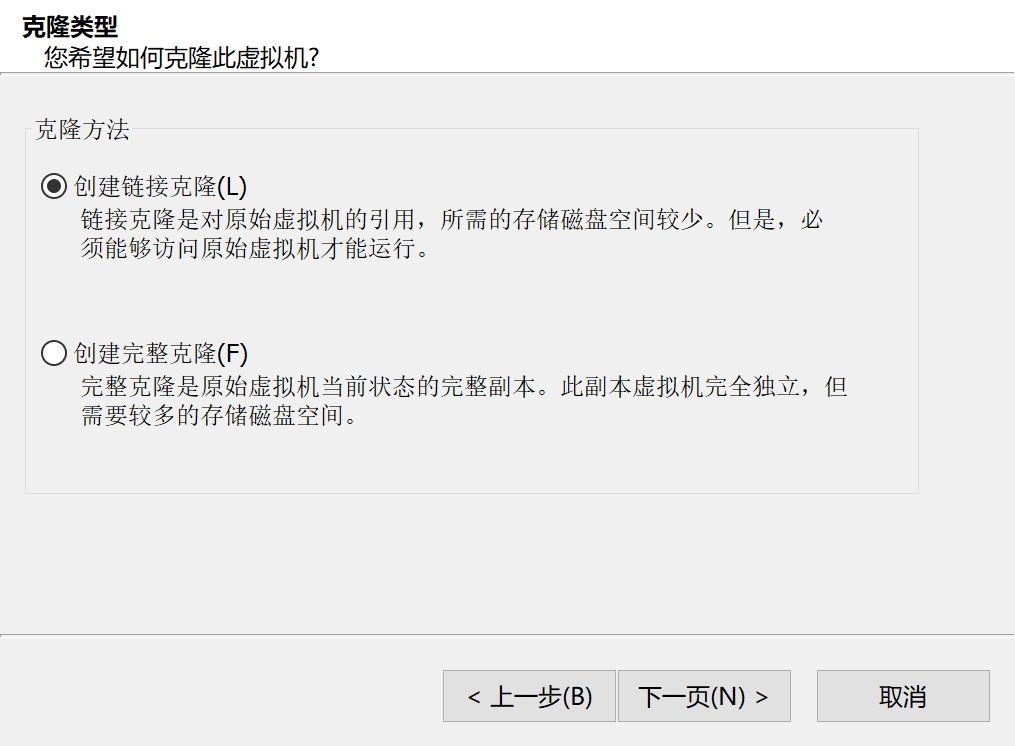

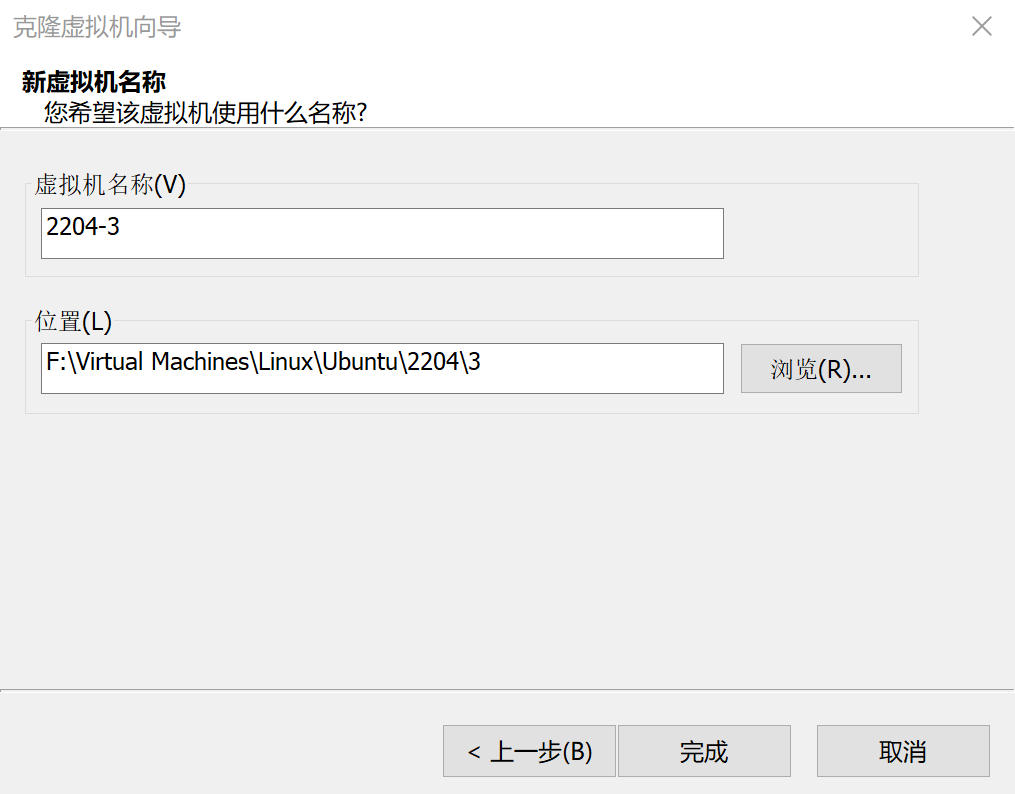

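Cloning the VM nodes

1. Clone: VM > Manage > Clone
2. Select the snapshot
3. Choose a linked clone
4. Rename the clone

Setting the hostname

Start and log in to each node:

```shell
# Set 201 to node-1, 202 to node-2, and so on
hostnamectl set-hostname node-1
```

Changing the IP address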

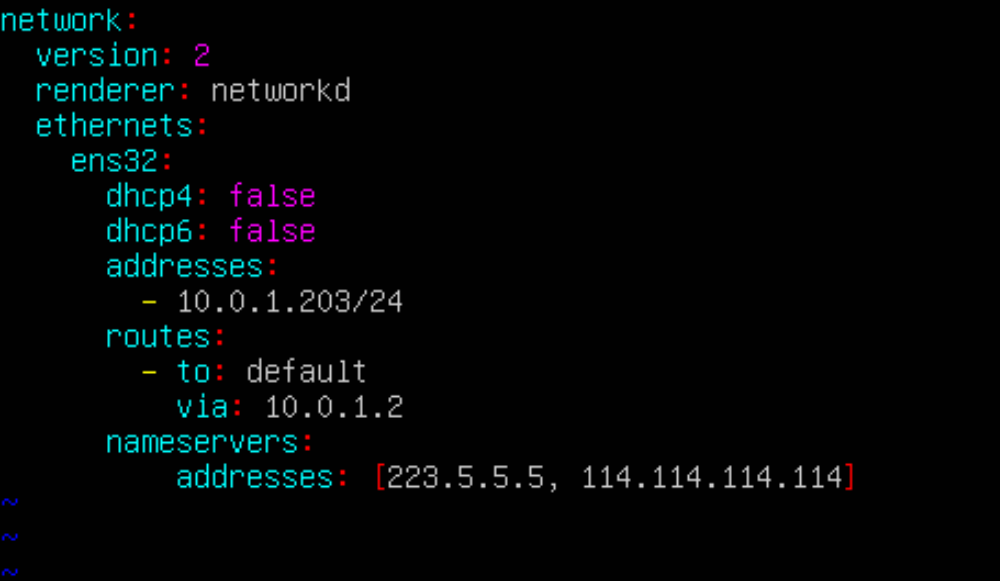

Change the IP of each node:

```shell
# Edit the configuration
vim /etc/netplan/00-installer-config.yaml
# Apply the configuration
netplan apply
```
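A static-IP netplan file for one node could be generated as below. This sketch relies on assumptions the original does not state:

- the interface is named `ens33`;
- the gateway is `192.168.10.2` (a common VMware NAT default);
- the DNS servers are placeholders.

Check `ip addr` and your virtual network settings first. `write_netplan` is a hypothetical helper. On Ubuntu 22.04, `routes: - to: default` replaces the deprecated `gateway4` key.

```shell
#!/usr/bin/env bash
# write_netplan <ip> <output_file>
# Writes a static IPv4 netplan config; interface, gateway and DNS are assumptions.
write_netplan() {
  local ip=$1 out=$2
  cat > "$out" <<EOF
network:
  version: 2
  ethernets:
    ens33:
      dhcp4: false
      addresses:
        - ${ip}/24
      routes:
        - to: default
          via: 192.168.10.2
      nameservers:
        addresses: [192.168.10.2, 223.5.5.5]
EOF
}
```

Usage: `write_netplan 192.168.10.201 /etc/netplan/00-installer-config.yaml`, then apply it. Over SSH, `netplan try` is a safer alternative to `netplan apply`, because it rolls the change back unless you confirm it.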

A separate data disk is recommended in production.

```
# Only one disk so far
root@node-1:~# lsblk
NAME MAJ:MIN RM SIZE RO TYPE MOUNTPOINTS
sda 8:0 0 60G 0 disk
├─sda1 8:1 0 1M 0 part
├─sda2 8:2 0 2G 0 part /boot
└─sda3 8:3 0 58G 0 part
  └─ubuntu--vg-ubuntu--lv 253:0 0 29G 0 lvm /
sr0 11:0 1 1024M 0 rom
```

Building the local installation package yourself

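An offline k8s installation with kubeasz needs four things downloaded:

- the kubeasz project code
- binaries (k8s, etcd, containerd and other components)
- container image files (calico, coredns, metrics-server and other images)
- system packages (ipset, libseccomp2, etc.; only needed when local yum/apt repositories are unavailable)

Preparing the offline files

https://github.com/easzlab/kubeasz/releases

Run the following on a server with Internet access. You can clone another VM for this, following the earlier steps.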

Downloading the tool script ezdown

Recommended version mapping

| Kubernetes | 1.22 | 1.23 | 1.24 | 1.25 | 1.26 | 1.27 | 1.28 | 1.29 | 1.30 |
|---|---|---|---|---|---|---|---|---|---|
| kubeasz | 3.1.1 | 3.2.0 | 3.6.2 | 3.6.2 | 3.6.2 | 3.6.2 | 3.6.2 | 3.6.3 | 3.6.4 |

Starting with kubeasz 3.6.2, the latest kubeasz release supports installing the three most recent k8s minor versions by default. Installation details:

(If /etc/kubeasz/bin already contains kube* files, delete them first: rm -f /etc/kubeasz/bin/kube*)

- k8s v1.28: use kubeasz 3.6.2 and run ./ezdown -D (the default download)
- k8s v1.27: use kubeasz 3.6.2 and run ./ezdown -D -k v1.27.5
- k8s v1.26: use kubeasz 3.6.2 and run ./ezdown -D -k v1.26.8
- k8s v1.25: use kubeasz 3.6.2 and run ./ezdown -D -k v1.25.13
- k8s v1.24: use kubeasz 3.6.2 and run ./ezdown -D -k v1.24.17

Docker Hub lists all supported Kubernetes versions: https://hub.docker.com/r/easzlab/kubeasz-k8s-bin/tags
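The mapping table above can be encoded as a small lookup, for example to pick the kubeasz release before downloading ezdown. `kubeasz_for` is a hypothetical helper. Its data is copied from the table, so it needs updating when new releases appear.

```shell
#!/usr/bin/env bash
# kubeasz_for <k8s version, e.g. 1.27 or v1.27.14>
# Prints the recommended kubeasz release from the table above.
kubeasz_for() {
  local v=${1#v}                     # drop a leading "v"
  v=$(echo "$v" | cut -d. -f1,2)     # keep only major.minor
  case $v in
    1.22) echo 3.1.1 ;;
    1.23) echo 3.2.0 ;;
    1.24|1.25|1.26|1.27|1.28) echo 3.6.2 ;;
    1.29) echo 3.6.3 ;;
    1.30) echo 3.6.4 ;;
    *) echo "unsupported k8s version: $1" >&2; return 1 ;;
  esac
}

release=$(kubeasz_for v1.27.14) && echo "kubeasz release: $release"
```

This only reflects the table's recommendation. The walkthrough below actually downloads v1.27.14 with kubeasz 3.6.4.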

```shell
export release=3.6.4
wget https://github.com/easzlab/kubeasz/releases/download/${release}/ezdown
chmod +x ./ezdown
```

Using the tool script to download the installation packages

Use the tool script to download the kubeasz code, binaries and default container images (run ./ezdown without arguments to see all of its options):

```
# ./ezdown
Usage: ezdown [options] [args]
option:
-C stop&clean all local containers
-D download default binaries/images into "/etc/kubeasz"
-P <OS> download system packages of the OS (ubuntu_22,debian_11,...)
-R download Registry(harbor) offline installer
-S start kubeasz in a container
-X <opt> download extra images
-d <ver> set docker-ce version, default "26.1.3"
-e <ver> set kubeasz-ext-bin version, default "1.10.1"
-k <ver> set kubeasz-k8s-bin version, default "v1.30.1"
-m <str> set docker registry mirrors, default "CN"(used in Mainland,China)
-z <ver> set kubeasz version, default "3.6.4"
```

Changing the Docker mirrors in the tool script (the original ones no longer work)

Change the registry-mirrors setting in ezdown (around line 202).

Old:

```
"registry-mirrors": [
  "https://docker.nju.edu.cn/",
  "https://kuamavit.mirror.aliyuncs.com"
],
```

Replace it with mirrors that work:

```
"registry-mirrors": [
  "https://hub.uuuadc.top",
  "https://docker.1panel.live"
],
```

The changed rule mapping kubeasz to supported k8s versions

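Under the old model, each major k8s version had its own recommended kubeasz version. That fragmented kubeasz versions, made tracking down problems tedious, and hurt the installation experience for ordinary users. Starting with kubeasz 3.6.2, the latest kubeasz release supports installing the three most recent k8s minor versions by default. Installation details:

(If /etc/kubeasz/bin already contains kube* files, delete them first: rm -f /etc/kubeasz/bin/kube*)

- k8s v1.29: use kubeasz 3.6.3 and run ./ezdown -D (the default download)
- k8s v1.28: use kubeasz 3.6.2 and run ./ezdown -D -k v1.28.5
- k8s v1.27: use kubeasz 3.6.2 and run ./ezdown -D -k v1.27.9
- k8s v1.26: use kubeasz 3.6.2 and run ./ezdown -D -k v1.26.12

```shell
# Domestic (mainland China) environment
./ezdown -D -k v1.27.14
```

About the kubeasz and k8s versions used in Boge's course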

| Milestone | kubeasz | easzlab/kubeasz-k8s-bin | k8s |
|---|---|---|---|
| First lesson (2023-10-15) | 3.6.2 (2023-09-04) | v1.27.5 (2023-10-03) | v1.27.5 (2023-08-24) |
| Next release | 3.6.3 (2023-12-31) | v1.27.6 (2023-10-03) | v1.27.6 (2023-09-13) |
| Latest (2024-07-28) | 3.6.4 (2024-05-23) | v1.27.14 (2024-05-18) | v1.27.16 (2024-07-14) |

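[Optional] If you need more components, download the extra container images (cilium, flannel, prometheus, etc.):

```shell
./ezdown -X flannel
./ezdown -X prometheus
...
```

Download the offline system packages (for when yum/apt repositories cannot be used):

```shell
# If the operating system is Ubuntu 22.04
./ezdown -P ubuntu_22
```

Once the scripts above succeed, all files (kubeasz code, binaries, offline images) are organized under /etc/kubeasz:

- /etc/kubeasz contains the kubeasz release code for version ${release}
- /etc/kubeasz/bin contains the k8s/etcd/docker/cni binaries
- /etc/kubeasz/down contains the offline container images needed during cluster installation
- /etc/kubeasz/down/packages contains the base system packages needed during cluster installation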
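Before copying /etc/kubeasz to an offline machine, check that these directories exist and know how the archive stores paths. GNU tar strips the leading `/`, so `tar zcvf pkg.tar.gz /etc/kubeasz` stores entries as `etc/kubeasz/...`. Such an archive must be extracted with `-C /`; extracting it with `-C /etc/` would create `/etc/etc/kubeasz`.

The sketch below packs relative to the parent directory with `-C` instead, and verifies the round trip in a scratch directory. `check_kubeasz_layout` and `pack_kubeasz` are hypothetical helpers. Note that `down/packages` only exists after `./ezdown -P`.

```shell
#!/usr/bin/env bash
# check_kubeasz_layout <kubeasz_dir>: fail if an expected subdirectory is missing.
check_kubeasz_layout() {
  local base=$1 d
  for d in bin down down/packages; do
    [ -d "$base/$d" ] || { echo "missing: $base/$d" >&2; return 1; }
  done
}

# pack_kubeasz <kubeasz_dir> <archive.tar.gz>
# Stores entries as "kubeasz/...", so extract later with: tar xzf <archive> -C /etc
pack_kubeasz() {
  local dir=$1 out=$2
  tar czf "$out" -C "$(dirname "$dir")" "$(basename "$dir")"
}

# Round trip in a scratch directory instead of the real /etc.
scratch=$(mktemp -d)
mkdir -p "$scratch/src/kubeasz/bin" "$scratch/src/kubeasz/down/packages" "$scratch/dst"
pack_kubeasz "$scratch/src/kubeasz" "$scratch/kubeasz-test.tar.gz"
tar xzf "$scratch/kubeasz-test.tar.gz" -C "$scratch/dst"
check_kubeasz_layout "$scratch/dst/kubeasz" && echo "layout OK"
```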

```
root@node1:/etc/kubeasz# ll
-rw-rw-r-- 1 root root 20304 May 22 23:09 ansible.cfg
drwxr-xr-x 5 root root 4096 Jul 28 11:32 bin/
drwxrwxr-x 8 root root 4096 Jun 23 23:08 docs/
drwxr-xr-x 4 root root 4096 Jul 28 11:47 down/
drwxrwxr-x 2 root root 4096 Jun 23 23:08 example/
-rwxrwxr-x 1 root root 26507 May 22 23:09 ezctl*
-rwxrwxr-x 1 root root 32390 May 22 23:09 ezdown*
drwxrwxr-x 4 root root 4096 Jun 23 23:08 .github/
-rw-rw-r-- 1 root root 301 May 22 23:09 .gitignore
drwxrwxr-x 10 root root 4096 Jun 23 23:08 manifests/
drwxrwxr-x 2 root root 4096 Jun 23 23:08 pics/
drwxrwxr-x 2 root root 4096 Jun 23 23:08 playbooks/
-rw-rw-r-- 1 root root 6349 May 22 23:09 README.md
drwxrwxr-x 22 root root 4096 Jun 23 23:08 roles/
drwxrwxr-x 2 root root 4096 Jun 23 23:08 tools/
```

Pack the offline installation package directly:

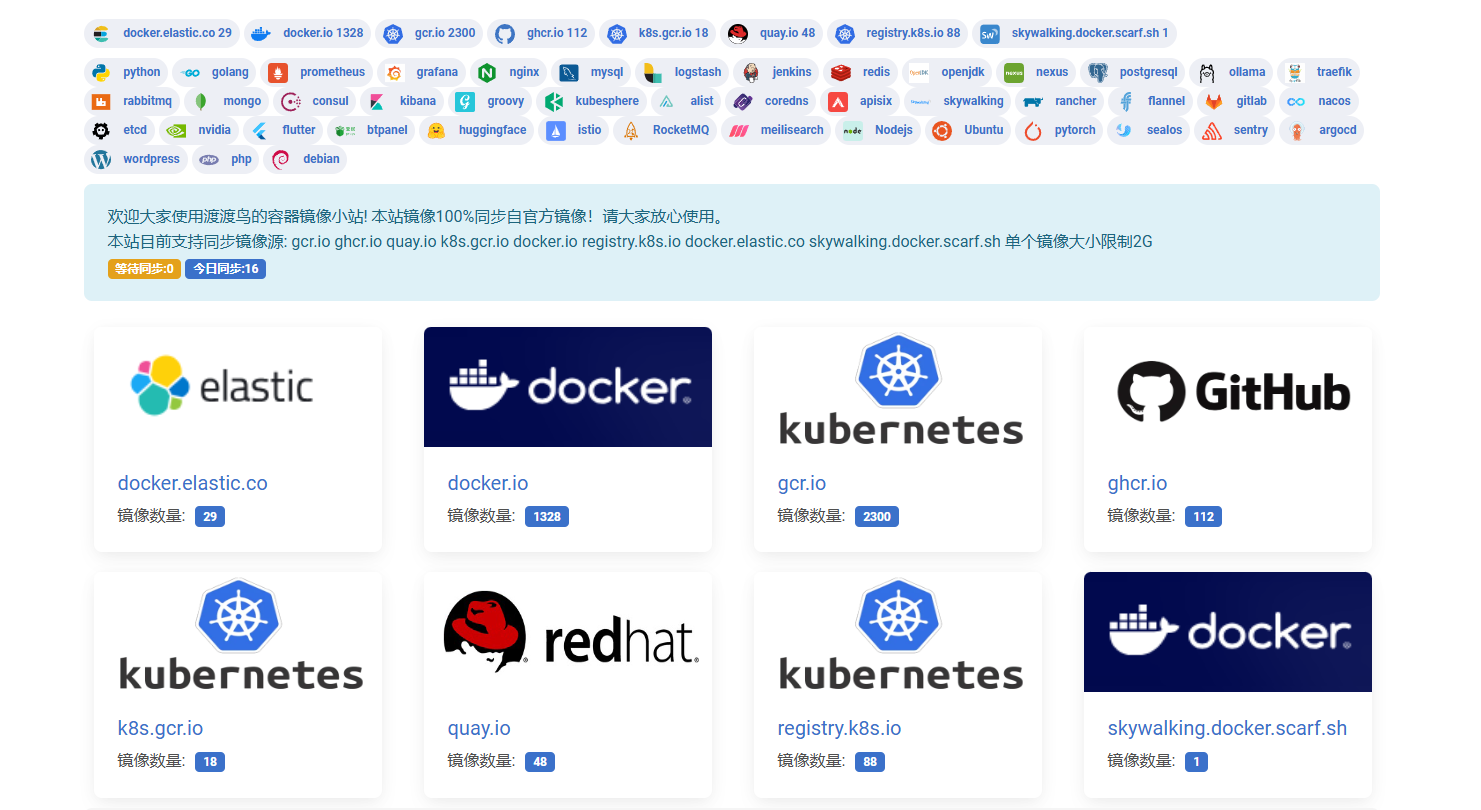

```shell
tar zcvf kubeasz-ubuntu-22.04.4-v1.27.14.tar.gz /etc/kubeasz
```

Replacing the mirror sources

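Because of network conditions, a package packed directly as above still points at mirror sources that no longer work, so they need to be changed.

Only the Docker mirrors are changed; the others are left as they are for now.

Mirror references:

https://docker.aityp.com

https://github.com/DaoCloud/public-image-mirror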

Places found so far where mirror sources are configured:

ezdown

```
"registry-mirrors": [
  "https://docker.nju.edu.cn/",
  "https://kuamavit.mirror.aliyuncs.com"
]
```

kubeasz/roles/containerd/templates/config.toml.j2

```
{% if ENABLE_MIRROR_REGISTRY %}
[plugins."io.containerd.grpc.v1.cri".registry.mirrors."docker.io"]
  endpoint = ["https://docker.nju.edu.cn/", "https://kuamavit.mirror.aliyuncs.com"]
[plugins."io.containerd.grpc.v1.cri".registry.mirrors."gcr.io"]
  endpoint = ["https://gcr.nju.edu.cn"]
[plugins."io.containerd.grpc.v1.cri".registry.mirrors."k8s.gcr.io"]
  endpoint = ["https://gcr.nju.edu.cn/google-containers/"]
[plugins."io.containerd.grpc.v1.cri".registry.mirrors."quay.io"]
  endpoint = ["https://quay.nju.edu.cn"]
[plugins."io.containerd.grpc.v1.cri".registry.mirrors."ghcr.io"]
  endpoint = ["https://ghcr.nju.edu.cn"]
[plugins."io.containerd.grpc.v1.cri".registry.mirrors."nvcr.io"]
  endpoint = ["https://ngc.nju.edu.cn"]
{% endif %}
```

kubeasz/roles/docker/templates/daemon.json.j2

```
{% if ENABLE_MIRROR_REGISTRY %}
  "registry-mirrors": [
    "https://docker.nju.edu.cn/",
    "https://kuamavit.mirror.aliyuncs.com"
  ],
{% endif %}
```

kubeasz/tools/imgutils (excerpt)

```shell
function pull_any_image() {
  F=$(echo "$1"|awk -F/ '{print NF}')
  case "$F" in
    1)
      HUB="docker.nju.edu.cn"
      GRP="library"
      IMG="$1"
      ;;
    2)
      F1=$(echo "$1"|awk -F/ '{print $1}')
      F2=$(echo "$1"|awk -F/ '{print $2}')
      if [[ "$F1" == k8s.gcr.io ]];then
        HUB="gcr.nju.edu.cn"
        GRP="google-containers"
        IMG="$F2"
      else
        HUB="docker.nju.edu.cn"
        GRP="$F1"
        IMG="$F2"
      fi
      ;;
    3)
      F1=$(echo "$1"|awk -F/ '{print $1}')
      F2=$(echo "$1"|awk -F/ '{print $2}')
      F3=$(echo "$1"|awk -F/ '{print $3}')
      if [[ "$F1" == quay.io ]];then
        HUB="quay.nju.edu.cn"
      elif [[ "$F1" == gcr.io ]];then
        HUB="gcr.nju.edu.cn"
```

Replace https://docker.nju.edu.cn and https://kuamavit.mirror.aliyuncs.com with mirror sources that work.
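One way to make that replacement across the whole tree is to let `grep -rlF` find the files that mention the old hosts and rewrite them with `sed`. This sketch swaps host names only, so both the `https://...` URLs and the bare `HUB="docker.nju.edu.cn"` in imgutils change. It maps the two old hosts to the two working mirrors listed earlier in this section. Whether those mirrors accept every path the scripts use (for example `library/...` images pulled through imgutils) is an assumption you should verify. `replace_mirrors` is a hypothetical helper.

```shell
#!/usr/bin/env bash
# replace_mirrors <dir>: rewrite the dead Docker Hub mirror hosts in every file under <dir>.
replace_mirrors() {
  local dir=$1 old re f
  local -A map=(
    [docker.nju.edu.cn]=hub.uuuadc.top
    [kuamavit.mirror.aliyuncs.com]=docker.1panel.live
  )
  for old in "${!map[@]}"; do
    # Escape the dots so sed matches the host name literally
    re=$(printf '%s' "$old" | sed 's/\./\\./g')
    # -r recursive, -l print matching file names only, -F fixed-string match
    grep -rlF "$old" "$dir" | while IFS= read -r f; do
      sed -i "s#${re}#${map[$old]}#g" "$f"
    done
  done
}
```

Run `replace_mirrors /etc/kubeasz`, then re-pack the directory with the tar command that follows. The gcr/quay/ghcr/nvcr entries on nju.edu.cn are left untouched.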

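```shell
# Pack the offline installation package after changing the mirror sources
tar zcvf kubeasz-ubuntu-22.04.4-v1.27.14.tar.gz /etc/kubeasz
```

The Nanjing University (NJU) Docker mirror no longer works; its other mirrors still work for now (as of 2024-08-12).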

| Source registry | Mirror | Description |
|---|---|---|
| docker.io | docker.nju.edu.cn | Docker Hub |
| gcr.io | gcr.nju.edu.cn | Google Container Registry |
| k8s.gcr.io | gcr.nju.edu.cn/google-containers | Google Container Registry |
| quay.io | quay.nju.edu.cn | Quay Container Registry |
| ghcr.io | ghcr.nju.edu.cn | GitHub Container Registry |
| nvcr.io | ngc.nju.edu.cn | NVIDIA NGC Catalog |

DaoCloud mirrors (from DaoCloud/public-image-mirror):

| Source registry | Replace with | Notes |
|---|---|---|
| cr.l5d.io | l5d.m.daocloud.io | To be deprecated; use the prefix method instead |
| docker.elastic.co | elastic.m.daocloud.io | |
| docker.io | docker.m.daocloud.io | |
| gcr.io | gcr.m.daocloud.io | |
| ghcr.io | ghcr.m.daocloud.io | |
| k8s.gcr.io | k8s-gcr.m.daocloud.io | k8s.gcr.io has been migrated to registry.k8s.io |
| registry.k8s.io | k8s.m.daocloud.io | |
| mcr.microsoft.com | mcr.m.daocloud.io | |
| nvcr.io | nvcr.m.daocloud.io | |
| quay.io | quay.m.daocloud.io | |
| registry.jujucharms.com | jujucharms.m.daocloud.io | To be deprecated; use the prefix method instead |
| rocks.canonical.com | rocks-canonical.m.daocloud.io | To be deprecated; use the prefix method instead |
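The DaoCloud table amounts to rewriting the registry host of an image reference. The sketch below applies that rewrite for most of the rows. It assumes the usual Docker reference rules: a first path segment without a `.` or `:` (and not `localhost`) is not a registry host, so the image lives on Docker Hub, and single-segment Hub images live under `library/`. `to_daocloud` is a hypothetical helper, not part of kubeasz or DaoCloud's tooling.

```shell
#!/usr/bin/env bash
# to_daocloud <image_ref>: print the DaoCloud mirror reference for an image.
# References whose registry is not handled below are printed unchanged.
to_daocloud() {
  local ref=$1 first=${1%%/*} rest
  # No slash, or first segment is not a host name: a Docker Hub image.
  if [[ $ref != */* ]] || [[ $first != *.* && $first != *:* && $first != localhost ]]; then
    rest=$ref
    first=docker.io
  else
    rest=${ref#*/}
  fi
  # Official Docker Hub images are stored under library/
  if [[ $first == docker.io && $rest != */* ]]; then
    rest=library/$rest
  fi
  case $first in
    docker.io)         echo "docker.m.daocloud.io/$rest" ;;
    gcr.io)            echo "gcr.m.daocloud.io/$rest" ;;
    ghcr.io)           echo "ghcr.m.daocloud.io/$rest" ;;
    k8s.gcr.io)        echo "k8s-gcr.m.daocloud.io/$rest" ;;
    registry.k8s.io)   echo "k8s.m.daocloud.io/$rest" ;;
    quay.io)           echo "quay.m.daocloud.io/$rest" ;;
    mcr.microsoft.com) echo "mcr.m.daocloud.io/$rest" ;;
    nvcr.io)           echo "nvcr.m.daocloud.io/$rest" ;;
    docker.elastic.co) echo "elastic.m.daocloud.io/$rest" ;;
    *)                 echo "$ref" ;;
  esac
}
```

For example, `docker pull "$(to_daocloud calico/cni:v3.26.4)"` pulls through the mirror. You can then `docker tag` the image back to its original name.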

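Offline installation steps

(Not wrapped in a script; done by hand.)

After the downloads above finish, copy the whole /etc/kubeasz directory to the same path on the target offline server. Then run the following in /etc/kubeasz on that server:

- Install docker offline and check the local files. Normally ezdown reports that all files have already been downloaded, and it pushes the images to the local private registry:

```shell
./ezdown -D
./ezdown -X flannel
./ezdown -X prometheus
...
```

- Start the kubeasz container:

```shell
./ezdown -S
```

- Set the parameter that allows system packages to be installed offline:

```shell
sed -i 's/^INSTALL_SOURCE.*$/INSTALL_SOURCE: "offline"/g' /etc/kubeasz/example/config.yml
```

- Example: install a single-node cluster (see https://github.com/easzlab/kubeasz/blob/master/docs/setup/quickStart.md):

```shell
source ~/.bashrc
dk ezctl start-aio
# or run: docker exec -it kubeasz ezctl start-aio
```

- Multi-node cluster: enter the kubeasz container with `docker exec -it kubeasz bash`. Plan and configure the cluster as described in https://github.com/easzlab/kubeasz/blob/master/docs/setup/00-planning_and_overall_intro.md, then install it with the ./ezctl command.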
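The INSTALL_SOURCE change above is a plain `sed` substitution. It only rewrites an existing line, so it silently does nothing if the key is missing. The sketch below uses a hypothetical `set_offline` helper that applies the same expression. It then fails unless the file contains exactly `INSTALL_SOURCE: "offline"`, so the change can be checked on a scratch copy first.

```shell
#!/usr/bin/env bash
# set_offline <config.yml>: apply the sed expression from the step above, then verify it.
set_offline() {
  local cfg=$1
  sed -i 's/^INSTALL_SOURCE.*$/INSTALL_SOURCE: "offline"/g' "$cfg"
  # -x: the whole line must match; a non-zero exit means the key was never set
  grep -qx 'INSTALL_SOURCE: "offline"' "$cfg"
}
```

Usage: `set_offline /etc/kubeasz/example/config.yml || echo "INSTALL_SOURCE line not found"`.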

Command execution log

# Download 1.27.16

# ./ezdown -D -k v1.27.16

2024-07-28 10:25:14 INFO Action begin: download_all

2024-07-28 10:25:14 INFO downloading docker binaries, arch:x86_64, version:26.1.3

--2024-07-28 10:25:14-- https://mirrors.tuna.tsinghua.edu.cn/docker-ce/linux/static/stable/x86_64/docker-26.1.3.tgz

Resolving mirrors.tuna.tsinghua.edu.cn (mirrors.tuna.tsinghua.edu.cn)... 101.6.15.130, 2402:f000:1:400::2

Connecting to mirrors.tuna.tsinghua.edu.cn (mirrors.tuna.tsinghua.edu.cn)|101.6.15.130|:443... connected.

HTTP request sent, awaiting response... 200 OK

Length: 73739359 (70M) [application/octet-stream]

Saving to: ‘docker-26.1.3.tgz’

docker-26.1.3.tgz 100%[=======================>] 70.32M 13.7MB/s in 5.7s

2024-07-28 10:25:20 (12.4 MB/s) - ‘docker-26.1.3.tgz’ saved [73739359/73739359]

Unit docker.service could not be found.

2024-07-28 10:25:22 DEBUG generate docker service file

2024-07-28 10:25:22 DEBUG generate docker config: /etc/docker/daemon.json

2024-07-28 10:25:22 DEBUG prepare register mirror for CN

2024-07-28 10:25:22 DEBUG enable and start docker

Created symlink /etc/systemd/system/multi-user.target.wants/docker.service → /etc/systemd/system/docker.service.

2024-07-28 10:25:26 INFO downloading kubeasz: 3.6.4

3.6.4: Pulling from easzlab/kubeasz

f56be85fc22e: Pull complete

ea5757f4b3f8: Pull complete

bd0557c686d8: Pull complete

37d4153ce1d0: Pull complete

b39eb9b4269d: Pull complete

a3cff94972c7: Pull complete

8ffa66b74e06: Pull complete

Digest: sha256:1c3e8fb1f05e40236f09e81575d4222c6289e411d9c0c6320883ffd46d5a1515

Status: Downloaded newer image for easzlab/kubeasz:3.6.4

docker.io/easzlab/kubeasz:3.6.4

2024-07-28 10:27:01 DEBUG run a temporary container

3107e7e74c80143102462973374ed68b1075451d1308b4c1bbd937c8e4e9e804

2024-07-28 10:27:02 DEBUG cp kubeasz code from the temporary container

Successfully copied 2.92MB to /etc/kubeasz

2024-07-28 10:27:03 DEBUG stop&remove temporary container

temp_easz

2024-07-28 10:27:03 INFO downloading kubernetes: v1.27.16 binaries

Error response from daemon: manifest for easzlab/kubeasz-k8s-bin:v1.27.16 not found: manifest unknown: manifest unknown

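2024-07-28 10:27:33 ERROR Action failed: download_all

There is no easzlab/kubeasz-k8s-bin image for v1.27.16 (manifest unknown), so download v1.27.14 instead:

# ./ezdown -D -k v1.27.14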

2024-07-28 11:29:26 INFO Action begin: download_all

2024-07-28 11:29:26 INFO downloading docker binaries, arch:x86_64, version:26.1.3

--2024-07-28 11:29:26-- https://mirrors.tuna.tsinghua.edu.cn/docker-ce/linux/static/stable/x86_64/docker-26.1.3.tgz

Resolving mirrors.tuna.tsinghua.edu.cn (mirrors.tuna.tsinghua.edu.cn)... 101.6.15.130, 2402:f000:1:400::2

Connecting to mirrors.tuna.tsinghua.edu.cn (mirrors.tuna.tsinghua.edu.cn)|101.6.15.130|:443... connected.

HTTP request sent, awaiting response... 200 OK

Length: 73739359 (70M) [application/octet-stream]

Saving to: ‘docker-26.1.3.tgz’

docker-26.1.3.tgz 100%[=================================================================================================================>] 70.32M 13.3MB/s in 5.7s

2024-07-28 11:29:32 (12.4 MB/s) - ‘docker-26.1.3.tgz’ saved [73739359/73739359]

Unit docker.service could not be found.

2024-07-28 11:29:34 DEBUG generate docker service file

2024-07-28 11:29:34 DEBUG generate docker config: /etc/docker/daemon.json

2024-07-28 11:29:34 DEBUG prepare register mirror for CN

2024-07-28 11:29:34 DEBUG enable and start docker

Created symlink /etc/systemd/system/multi-user.target.wants/docker.service → /etc/systemd/system/docker.service.

2024-07-28 11:29:38 INFO downloading kubeasz: 3.6.4

3.6.4: Pulling from easzlab/kubeasz

f56be85fc22e: Pull complete

ea5757f4b3f8: Pull complete

bd0557c686d8: Pull complete

37d4153ce1d0: Pull complete

b39eb9b4269d: Pull complete

a3cff94972c7: Pull complete

8ffa66b74e06: Pull complete

Digest: sha256:1c3e8fb1f05e40236f09e81575d4222c6289e411d9c0c6320883ffd46d5a1515

Status: Downloaded newer image for easzlab/kubeasz:3.6.4

docker.io/easzlab/kubeasz:3.6.4

2024-07-28 11:30:05 DEBUG run a temporary container

43554b2b51b746cb0f064986d635220a65ce7391e472bf05b42e63786aba8b65

2024-07-28 11:30:06 DEBUG cp kubeasz code from the temporary container

Successfully copied 2.92MB to /etc/kubeasz

2024-07-28 11:30:06 DEBUG stop&remove temporary container

temp_easz

2024-07-28 11:30:07 INFO downloading kubernetes: v1.27.14 binaries

v1.27.14: Pulling from easzlab/kubeasz-k8s-bin

1b7ca6aea1dd: Pull complete

0a37f6a8311a: Pull complete

e4dc70fab48d: Pull complete

Digest: sha256:e5e2230b00791d38f1e5c8f78296836391d061e12751eafed6d0a4169abd8689

Status: Downloaded newer image for easzlab/kubeasz-k8s-bin:v1.27.14

docker.io/easzlab/kubeasz-k8s-bin:v1.27.14

2024-07-28 11:31:27 DEBUG run a temporary container

ffe9efbcf230e71cc1c79d6736ea0600ebf1aea813074a4fdc49419933beeaed

2024-07-28 11:31:29 DEBUG cp k8s binaries

Successfully copied 492MB to /etc/kubeasz/k8s_bin_tmp

2024-07-28 11:31:32 DEBUG stop&remove temporary container

temp_k8s_bin

2024-07-28 11:31:34 INFO downloading extral binaries kubeasz-ext-bin:1.10.1

1.10.1: Pulling from easzlab/kubeasz-ext-bin

a88dc8b54e91: Pull complete

2e4cdc4ff889: Pull complete

4f1309bcd55b: Pull complete

405e2cacea4b: Pull complete

f66a3892a16e: Pull complete

6fcebe3ba87d: Pull complete

22467cec432a: Pull complete

2aaef6060a22: Pull complete

Digest: sha256:c821f0e7a8ea93459ad543f9c12e91fbb7e4d5c1105ff856d2a2e07633ead56e

Status: Downloaded newer image for easzlab/kubeasz-ext-bin:1.10.1

docker.io/easzlab/kubeasz-ext-bin:1.10.1

2024-07-28 11:32:20 DEBUG run a temporary container

c450e5da0d3b14bca14232994a0fca3dea93e7f7f884503d7bb75726f25c9ce6

2024-07-28 11:32:23 DEBUG cp extral binaries

Successfully copied 716MB to /etc/kubeasz/extra_bin_tmp

2024-07-28 11:32:27 DEBUG stop&remove temporary container

temp_ext_bin

2: Pulling from library/registry

930bdd4d222e: Pull complete

a15309931e05: Pull complete

6263fb9c821f: Pull complete

86c1d3af3872: Pull complete

a37b1bf6a96f: Pull complete

Digest: sha256:12120425f07de11a1b899e418d4b0ea174c8d4d572d45bdb640f93bc7ca06a3d

Status: Downloaded newer image for registry:2

docker.io/library/registry:2

2024-07-28 11:32:38 INFO start local registry ...

03b2d1453369fd7ed6ec2165d4f6a9ddd87b4f7d12f14678d0efd8acfc52e098

2024-07-28 11:32:38 INFO download default images, then upload to the local registry

v3.26.4: Pulling from calico/cni

2a2cc8873d88: Pull complete

f689a1b6ffc9: Pull complete

222ddc102977: Pull complete

bb231ec660e2: Pull complete

c274814db7a5: Pull complete

c04ab43d8c14: Pull complete

56e4809beb2c: Pull complete

82a9d7b9ead4: Pull complete

2e8423cc9523: Pull complete

dbb2b79785d1: Pull complete

15e4b2899800: Pull complete

4f4fb700ef54: Pull complete

Digest: sha256:7c5895c5d6ed3266bcd405fbcdbb078ca484688673c3479f0f18bf072d58c242

Status: Downloaded newer image for calico/cni:v3.26.4

docker.io/calico/cni:v3.26.4

v3.26.4: Pulling from calico/kube-controllers

312c81d49b31: Pull complete

21f1655e08ac: Pull complete

807fead6050f: Pull complete

1abfcfa9d8cd: Pull complete

9398ffacf522: Pull complete

3379ce07ff21: Pull complete

f5745fd91cba: Pull complete

b2d1ec87e4a2: Pull complete

9ebe38a91c19: Pull complete

d92a41934dc3: Pull complete

7427cd509920: Pull complete

1726ce00d070: Pull complete

dcd892b22925: Pull complete

8b58b0d1e6a1: Pull complete

Digest: sha256:5fce14b4dfcd63f1a4663176be4f236600b410cd896d054f56291c566292c86e

Status: Downloaded newer image for calico/kube-controllers:v3.26.4

docker.io/calico/kube-controllers:v3.26.4

v3.26.4: Pulling from calico/node

c596d07e602a: Pull complete

9ae8e7f0c0b3: Pull complete

Digest: sha256:a8b77a5f27b167501465f7f5fb7601c44af4df8dccd1c7201363bbb301d1fe40

Status: Downloaded newer image for calico/node:v3.26.4

docker.io/calico/node:v3.26.4

The push refers to repository [easzlab.io.local:5000/calico/cni]

5f70bf18a086: Pushed

7dff43aa1268: Pushed

14fdc63b97b8: Pushed

ae844ae009c7: Pushed

3d2540981e86: Pushed

5743eb3b1640: Pushed

6c2e5970601b: Pushed

50fa5e13eb34: Pushed

468901d6015e: Pushed

e4dea417b6a9: Pushed

fbe0fc515554: Pushed

8a287df44e83: Pushed

v3.26.4: digest: sha256:3540aa94aea8fcd41edd8490a82847bbf6a9a52215f0550c27e196441d234f57 size: 2823

The push refers to repository [easzlab.io.local:5000/calico/kube-controllers]

15e2f86dd9c8: Pushed

6de775fe835c: Pushed

2bf7b670d125: Pushed

c40c18a1888a: Pushed

f65cfcb50057: Pushed

999a8e768b19: Pushed

04873e012646: Pushed

73e66a55b78b: Pushed

aff2e5741039: Pushed

69fff1fdf097: Pushed

1fe60555ee28: Pushed

1e3024c01822: Pushed

ff28c98ce459: Pushed

2235e9b55c14: Pushed

v3.26.4: digest: sha256:b7625323054de4420ba27761d4120ad300d3aa7e0109c8bc41a24ca4bcdd3471 size: 3240

The push refers to repository [easzlab.io.local:5000/calico/node]

c0eef34472c4: Pushed

f4270759c5ec: Pushed

v3.26.4: digest: sha256:0b242b133d70518988a5a36c1401ee4f37bf937743ecceafd242bd821b6645c6 size: 737

1.11.1: Pulling from coredns/coredns

dd5ad9c9c29f: Pull complete

960043b8858c: Pull complete

b4ca4c215f48: Pull complete

eebb06941f3e: Pull complete

02cd68c0cbf6: Pull complete

d3c894b5b2b0: Pull complete

b40161cd83fc: Pull complete

46ba3f23f1d3: Pull complete

4fa131a1b726: Pull complete

860aeecad371: Pull complete

c54d895c1975: Pull complete

Digest: sha256:1eeb4c7316bacb1d4c8ead65571cd92dd21e27359f0d4917f1a5822a73b75db1

Status: Downloaded newer image for coredns/coredns:1.11.1

docker.io/coredns/coredns:1.11.1

The push refers to repository [easzlab.io.local:5000/coredns/coredns]

545a68d51bc4: Pushed

aec96fc6d10e: Pushed

4cb10dd2545b: Pushed

d2d7ec0f6756: Pushed

1a73b54f556b: Pushed

e624a5370eca: Pushed

d52f02c6501c: Pushed

ff5700ec5418: Pushed

7bea6b893187: Pushed

6fbdf253bbc2: Pushed

e023e0e48e6e: Pushed

1.11.1: digest: sha256:2169b3b96af988cf69d7dd69efbcc59433eb027320eb185c6110e0850b997870 size: 2612

1.22.28: Pulling from easzlab/k8s-dns-node-cache

2cbc221dc464: Pull complete

b0eea46bf369: Pull complete

Digest: sha256:be9a4f1472d3ec28bf056ded288977d2de3118ab59e3807c1965774c6bffa34e

Status: Downloaded newer image for easzlab/k8s-dns-node-cache:1.22.28

docker.io/easzlab/k8s-dns-node-cache:1.22.28

The push refers to repository [easzlab.io.local:5000/easzlab/k8s-dns-node-cache]

c5a3f9e40d77: Pushed

045aaba570c8: Pushed

1.22.28: digest: sha256:0cff3060c804e0532a15b29d09f5730082bee441ccb97c42a0452e250895e575 size: 741

v2.7.0: Pulling from kubernetesui/dashboard

ee3247c7e545: Pull complete

8e052fd7e2d0: Pull complete

Digest: sha256:2e500d29e9d5f4a086b908eb8dfe7ecac57d2ab09d65b24f588b1d449841ef93

Status: Downloaded newer image for kubernetesui/dashboard:v2.7.0

docker.io/kubernetesui/dashboard:v2.7.0

The push refers to repository [easzlab.io.local:5000/kubernetesui/dashboard]

c88361932af5: Pushed

bd8a70623766: Pushed

v2.7.0: digest: sha256:ef134f101e8a4e96806d0dd839c87c7f76b87b496377422d20a65418178ec289 size: 736

v1.0.8: Pulling from kubernetesui/metrics-scraper

978be80e3ee3: Pull complete

5866d2c04d96: Pull complete

Digest: sha256:76049887f07a0476dc93efc2d3569b9529bf982b22d29f356092ce206e98765c

Status: Downloaded newer image for kubernetesui/metrics-scraper:v1.0.8

docker.io/kubernetesui/metrics-scraper:v1.0.8

The push refers to repository [easzlab.io.local:5000/kubernetesui/metrics-scraper]

bcec7eb9e567: Pushed

d01384fea991: Pushed

v1.0.8: digest: sha256:43227e8286fd379ee0415a5e2156a9439c4056807e3caa38e1dd413b0644807a size: 736

v0.7.1: Pulling from easzlab/metrics-server

6b16ad2aede1: Pull complete

fe5ca62666f0: Pull complete

be1681d2fb7c: Pull complete

b6824ed73363: Pull complete

7c12895b777b: Pull complete

33e068de2649: Pull complete

5664b15f108b: Pull complete

27be814a09eb: Pull complete

4aa0ea1413d3: Pull complete

9ef7d74bdfdf: Pull complete

9112d77ee5b1: Pull complete

cbe74302e2ab: Pull complete

Digest: sha256:9951a91d2ee6a3c089d62eaf361c09db03d3f40b1b8c056044e1e2c67c505b10

Status: Downloaded newer image for easzlab/metrics-server:v0.7.1

docker.io/easzlab/metrics-server:v0.7.1

The push refers to repository [easzlab.io.local:5000/easzlab/metrics-server]

364b5bcc996c: Pushed

2388d21e8e2b: Pushed

c048279a7d9f: Pushed

1a73b54f556b: Mounted from coredns/coredns

2a92d6ac9e4f: Pushed

bbb6cacb8c82: Pushed

ac805962e479: Pushed

af5aa97ebe6c: Pushed

4d049f83d9cf: Pushed

1df9699731f7: Pushed

6fbdf253bbc2: Mounted from coredns/coredns

70c35736547b: Pushed

v0.7.1: digest: sha256:e2c9932796c51446272fdef3c689b1c0059b250c080647bc09d7c4e9e25ecad0 size: 2814

3.9: Pulling from easzlab/pause

61fec91190a0: Pull complete

Digest: sha256:d5fee2a95eaaefc3a0b8a914601b685e4170cb870ac319ac5a9bfb7938389852

Status: Downloaded newer image for easzlab/pause:3.9

docker.io/easzlab/pause:3.9

The push refers to repository [easzlab.io.local:5000/easzlab/pause]

e3e5579ddd43: Pushed

3.9: digest: sha256:3ec9d4ec5512356b5e77b13fddac2e9016e7aba17dd295ae23c94b2b901813de size: 527

2024-07-28 11:36:04 INFO Action successed: download_all

# ./ezdown -P ubuntu_22

2024-07-28 11:46:52 INFO Action begin: get_sys_pkg ubuntu_22

2024-07-28 11:46:52 INFO downloading system packages kubeasz-sys-pkg:1.0.1_ubuntu_22

1.0.1_ubuntu_22: Pulling from easzlab/kubeasz-sys-pkg

a88dc8b54e91: Already exists

b35273e79b74: Pull complete

Digest: sha256:6986537773f2535aaf7f1079fd917d673149244ce5f96cdf69a2926436736236

Status: Downloaded newer image for easzlab/kubeasz-sys-pkg:1.0.1_ubuntu_22

docker.io/easzlab/kubeasz-sys-pkg:1.0.1_ubuntu_22

2024-07-28 11:47:00 DEBUG run a temporary container

a42c5d010a7017d328869b9f88157c7d26cf320b3e1b5b7f6862fd1d0f965098

2024-07-28 11:47:01 DEBUG cp system packages

Successfully copied 9.73MB to /etc/kubeasz/down

2024-07-28 11:47:01 DEBUG stop&remove temporary container

temp_sys_pkg

2024-07-28 11:47:01 INFO Action successed: get_sys_pkg ubuntu_22

Wrapping the installation in a script

Complete set of offline installation files:

- kubeasz-ubuntu-22.04.3-v1.27.5.tar.gz
- k8s_install_new.sh
- get-pip.py
- ezdown

Mounting a data disk

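Two disks are recommended: one for running the system, and one for K8s logs and container data.

Steps for putting K8s logs and container data on a separate disk (based on Alibaba Cloud's practice):

```shell
# Create the mount point and the bind-mount targets
mkdir -p /var/lib/container /var/lib/{kubelet,docker} /nfs_dir
# Format the data disk directly, without partitioning; assume it is /dev/vdb
mkfs.ext4 /dev/vdb
```

Then edit /etc/fstab and add the following:

```
/dev/vdb /var/lib/container/ ext4 defaults 0 0
/var/lib/container/kubelet /var/lib/kubelet none defaults,bind 0 0
/var/lib/container/docker /var/lib/docker none defaults,bind 0 0
/var/lib/container/nfs_dir /nfs_dir none defaults,bind 0 0
```

Mount the data disk first, then create the bind-mount source directories on it. Directories created under /var/lib/container before the disk is mounted are hidden by the mount, and the bind mounts would then fail.

```shell
mount /dev/vdb /var/lib/container
mkdir -p /var/lib/container/{kubelet,docker,nfs_dir}
# Mount the remaining fstab entries
mount -a
```

- /dev/vdb: the device file (disk), mounted on /var/lib/container/
- source directory /var/lib/container/kubelet is bind-mounted on /var/lib/kubelet
- source directory /var/lib/container/docker is bind-mounted on /var/lib/docker
- source directory /var/lib/container/nfs_dir is bind-mounted on /nfs_dir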
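Device names such as /dev/vdb can change between boots when disks are added or removed. Referencing the filesystem by UUID, as the `blkid` hint in the install script suggests, is more robust. The sketch below uses a hypothetical `fstab_entries` helper that prints the same four entries for a given UUID. Obtaining the UUID with `blkid -s UUID -o value /dev/vdb` is the assumed usage.

```shell
#!/usr/bin/env bash
# fstab_entries <uuid>: print the data-disk mount followed by the three bind mounts.
fstab_entries() {
  local uuid=$1 d
  printf 'UUID=%s /var/lib/container ext4 defaults 0 0\n' "$uuid"
  for d in kubelet docker; do
    printf '/var/lib/container/%s /var/lib/%s none defaults,bind 0 0\n' "$d" "$d"
  done
  printf '/var/lib/container/nfs_dir /nfs_dir none defaults,bind 0 0\n'
}
```

To use it, run `fstab_entries "$(blkid -s UUID -o value /dev/vdb)" >> /etc/fstab` once as root. Then run `findmnt --verify` to check the file before mounting.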

```shell
# Check the distribution release information
cat /etc/os-release
```

Script contents

#!/bin/bash

# author: boge

# description: this shell script uses ansible to deploy K8S from binaries in a simple way

# docker-tag

# curl -s -S "https://registry.hub.docker.com/v2/repositories/easzlab/kubeasz-k8s-bin/tags/" | jq '."results"[]["name"]' |sort -rn

# github: https://github.com/easzlab/kubeasz

#########################################################################

# Operating systems this script has been used on: CentOS/RedHat 7, Ubuntu 16.04/18.04/20.04/22.04

#########################################################################

echo "Remember to mount the data disk first. If it is already mounted, just press Enter; otherwise press Ctrl+C to abort the script."

# xxxxxx is a throwaway variable; read is only used to pause

read -p "" xxxxxx

# Check the number of arguments

[ $# -ne 7 ] && echo -e "Usage: $0 rootpasswd netnum nethosts cri cni domain-name k8s-cluster-name\nExample: bash $0 rootPassword 10.0.1 201\ 202\ 203\ 204 [containerd|docker] [calico|flannel|cilium] boge.com test-cn\n" && exit 11

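# Variable definitions

# kubeasz version

export release=3.6.2 # supports several k8s versions; choose k8s_ver below from: v1.28.1 v1.27.5 v1.26.8 v1.25.13 v1.24.17

# Kubernetes version

export k8s_ver=v1.27.5 # list the available tags with the docker-tag command above (docker-tag tags easzlab/kubeasz-k8s-bin). Note: for k8s >= 1.24 only containerd is supported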

# bash k8s_install_new.sh bogeit 10.0.1 201\ 202\ 203\ 204 containerd calico boge.com test-cn

rootpasswd=$1

# Network prefix of the node IPs (the first three octets)

netnum=$2

# Host parts of the node IPs

nethosts=$3

# Container runtime

cri=$4

# Network plugin (CNI)

cni=$5

domainName=$6

# Cluster name

clustername=$7

# If the current directory contains files matching kubeasz*.tar.gz

# -1 prints one name per line

# -v sorts names naturally by version number, e.g. file-1.0.tar.gz before file-1.1.tar.gz

if ls -1v ./kubeasz*.tar.gz &>/dev/null; then

# then assign the output of ls -1v ./kubeasz*.tar.gz (the version-sorted file list) to the variable `software_packet`.

software_packet="$(ls -1v ./kubeasz*.tar.gz)"

else

# If no file matches `kubeasz*.tar.gz`,

# set `software_packet` to an empty string.

software_packet=""

fi

pwd="/etc/kubeasz"

# Upgrade the packages on the deploy machine

# Check whether /etc/redhat-release exists; it is present on Red Hat-based distributions (CentOS, RHEL) and identifies the system as Red Hat family

if cat /etc/redhat-release &>/dev/null;then

yum update -y

else

# Refresh the package lists, upgrade installed packages, then run a distribution upgrade; if any step fails, try to repair interrupted installs or broken dependencies

apt-get update && apt-get upgrade -y && apt-get dist-upgrade -y

[ $? -ne 0 ] && apt-get -yf install

fi

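# Check the Python environment on the deploy machine

# Print the version (output discarded)

python2 -V &>/dev/null

# $? is the exit status (return value) of the previous command; -ne 0 means "not equal to 0"

if [ $? -ne 0 ];then

# Determine whether the system is Red Hat family or Debian family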

if cat /etc/redhat-release &>/dev/null;then

yum install -y gcc openssl-devel bzip2-devel

wget https://www.python.org/ftp/python/2.7.16/Python-2.7.16.tgz

tar xzf Python-2.7.16.tgz

cd Python-2.7.16

./configure --enable-optimizations

make altinstall

# make altinstall installs into /usr/local/bin by default, so link from there

ln -s /usr/local/bin/python2.7 /usr/bin/python

cd -

else

apt-get install -y python2.7 && ln -s /usr/bin/python2.7 /usr/bin/python

fi

fi

python3 -V &>/dev/null

if [ $? -ne 0 ];then

if cat /etc/redhat-release &>/dev/null;then

yum install python3 -y

else

apt-get install -y python3

fi

fi

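# Configure a faster pip index on the deploy machine

# Use the mirror only if the cluster name contains the word "cn"

# -i: ignore case

# -w: match whole words only, so "cn" (as in test-cn) matches but "cnn" does not

# -E: use extended regular expressions

# -q: print nothing; grep exits with 0 (true) on a match, which makes the if condition succeed

if echo "$clustername" | grep -iwqE cn; then

mkdir -p ~/.pip

# Do not indent the heredoc below, or the indentation is written into the file as well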

cat > ~/.pip/pip.conf <<CB

[global]

index-url = https://mirrors.aliyun.com/pypi/simple

[install]

trusted-host=mirrors.aliyun.com

CB

fi

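# Install the required packages on the deploy machine

# If the python command does not exist (which python returns a non-zero exit status), run the command after ||: create a symlink /usr/bin/python pointing to python2.7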

which python || ln -svf `which python2.7` /usr/bin/python

if cat /etc/redhat-release &>/dev/null; then

# Red Hat family operating system detected

yum install git epel-release python-pip sshpass -y

# Install git, epel-release, python-pip and sshpass with yum; -y answers yes automatically

[ -f ./get-pip.py ] && python ./get-pip.py || {

# If get-pip.py exists in the current directory, run it with python; otherwise run the following:

wget https://bootstrap.pypa.io/pip/2.7/get-pip.py && python get-pip.py

# Download the pip installer script with wget, then run it with python

}

else

# Not a Red Hat family operating system

if grep -Ew '20.04|22.04' /etc/issue &>/dev/null; then

# Ubuntu 20.04 or 22.04 detected

apt-get install sshpass -y

# Install sshpass with apt-get; -y answers yes automatically

else

# Other Ubuntu or Debian versions

apt-get install python-pip sshpass -y

# Install python-pip and sshpass with apt-get; -y answers yes automatically

fi

[ -f ./get-pip.py ] && python ./get-pip.py || {

# If get-pip.py exists in the current directory, run it with python; otherwise run the following:

wget https://bootstrap.pypa.io/pip/2.7/get-pip.py && python get-pip.py

# Download the pip installer script with wget, then run it with python

}

fi

# Upgrade pip, pinned below 21.0 (pip 21.0 dropped Python 2 support)

python -m pip install --upgrade "pip < 21.0"

which pip || ln -svf `which pip` /usr/bin/pip

pip -V

# Install or upgrade setuptools, the Python library used to build and install Python packages

pip install setuptools -U

# Install two Python packages, ansible and netaddr; --no-cache-dir disables pip's cache so no stale cached version is installed

pip install --no-cache-dir ansible netaddr

# Set up passwordless SSH from the deploy machine to the other nodes

# netnum: network prefix of the IPs

# nethosts: host numbers, e.g. 201\ 202\ 203\ 204

# Loop over the host numbers in nethosts

for host in `echo "${nethosts}"`

do

echo "============ ${netnum}.${host} ===========";

# Check whether the current user is root

# USER is a predefined environment variable holding the login name of the current user; it is set automatically at login

if [[ ${USER} == 'root' ]];then

# For root: generate a new RSA key pair if none exists

[ ! -f /${USER}/.ssh/id_rsa ] &&\

ssh-keygen -t rsa -P '' -f /${USER}/.ssh/id_rsa

else

# For a regular user: generate a new RSA key pair if none exists

[ ! -f /home/${USER}/.ssh/id_rsa ] &&\

ssh-keygen -t rsa -P '' -f /home/${USER}/.ssh/id_rsa

fi

# Copy the public key to the target host with sshpass and ssh-copy-id

# sshpass: supplies the SSH password non-interactively, so the script can run SSH commands without anyone typing the password

# ssh-copy-id: appends the local public key (usually ~/.ssh/id_rsa.pub) to ~/.ssh/authorized_keys on the remote host, enabling password-less login

# -o StrictHostKeyChecking=no: skips the host-key confirmation on the first connection, so the script is not interrupted by unknown host keys

sshpass -p "${rootpasswd}" ssh-copy-id -o StrictHostKeyChecking=no ${USER}@${netnum}.${host}

# Update the software on the other nodes

if cat /etc/redhat-release &>/dev/null;then

ssh -o StrictHostKeyChecking=no ${USER}@${netnum}.${host} "yum update -y"

else

ssh -o StrictHostKeyChecking=no ${USER}@${netnum}.${host} "apt-get update && apt-get upgrade -y && apt-get dist-upgrade -y"

[ $? -ne 0 ] && ssh -o StrictHostKeyChecking=no ${USER}@${netnum}.${host} "apt-get -yf install"

fi

done

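# Download the k8s binary installation files on the deploy machine (note: this may fail for network reasons; rerun the script a few times if needed)

# Domestic (mainland China) environment: ./ezdown -D

# Overseas environment: ./ezdown -D -m standard

# [Optional] ./ezdown -X flannel, ./ezdown -X prometheus download extra container images (cilium, flannel, prometheus, etc.)

# [Optional] ./ezdown -P downloads offline system packages (for when yum/apt repositories cannot be used)

# Once the steps above succeed, all files (kubeasz code, binaries, offline images) are organized under /etc/kubeasz

# /etc/kubeasz contains the kubeasz release code for version ${release}

# /etc/kubeasz/bin contains the k8s/etcd/docker/cni binaries

# /etc/kubeasz/down contains the offline container images needed during cluster installation

# /etc/kubeasz/down/packages contains the base system packages needed during cluster installation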

if [[ ${software_packet} == '' ]];then

# The package variable is empty

if [[ ! -f ./ezdown ]];then

curl -C- -fLO --retry 3 https://github.com/easzlab/kubeasz/releases/download/${release}/ezdown

fi

# Download with the tool script

# In ./ezdown, replace the rest of the line starting with K8S_BIN_VER= with the value of ${k8s_ver}

sed -ri "s+^(K8S_BIN_VER=).*$+\1${k8s_ver}+g" ezdown

chmod +x ./ezdown

# ubuntu_22 to download package of Ubuntu 22.04

./ezdown -D && ./ezdown -P ubuntu_22 && ./ezdown -X

else

# A local installation package already exists

tar xvf ${software_packet} -C /etc/

# In ${pwd}/ezdown, find the line starting with K8S_BIN_VER= and replace the rest of the line with the value of ${k8s_ver}

sed -ri "s+^(K8S_BIN_VER=).*$+\1${k8s_ver}+g" ${pwd}/ezdown

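# Make ${pwd}/ezctl and ${pwd}/ezdown executable

chmod +x ${pwd}/{ezctl,ezdown}

# Make ezdown in the current directory executable

chmod +x ./ezdown

./ezdown -D # offline: installs docker, checks the local files (it should report that everything is already downloaded) and pushes the images to the local private registry

./ezdown -S # start the kubeasz container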

fi

# Initialize the configuration for a k8s cluster named $clustername

CLUSTER_NAME="$clustername"

${pwd}/ezctl new ${CLUSTER_NAME}

if [[ $? -ne 0 ]];then

echo "cluster name [${CLUSTER_NAME}] already exists in ${pwd}/clusters/${CLUSTER_NAME}."

exit 1

fi

# The local installation package software_packet is not empty

if [[ ${software_packet} != '' ]];then

# Enable offline installation

# Offline installation docs: https://github.com/easzlab/kubeasz/blob/3.6.2/docs/setup/offline_install.md

sed -i 's/^INSTALL_SOURCE.*$/INSTALL_SOURCE: "offline"/g' ${pwd}/clusters/${CLUSTER_NAME}/config.yml

fi

# to check ansible service

ansible all -m ping

#---------------------------------------------------------------------------------------------------

# Edit config.yml for the binary installation

sed -ri "s+^(CLUSTER_NAME:).*$+\1 \"${CLUSTER_NAME}\"+g" ${pwd}/clusters/${CLUSTER_NAME}/config.yml

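## Steps for keeping k8s logs and container data on a separate disk (following Alibaba Cloud's approach)

mkdir -p /var/lib/container/{kubelet,docker,nfs_dir} /var/lib/{kubelet,docker} /nfs_dir

# Attach a disk to use as the data disk

## No fdisk partitioning: format the data disk directly with mkfs.ext4 /dev/vdb, add the entries below to /etc/fstab, then run mount -a to mount them (blkid /dev/sdx shows the disk's UUID)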

## cat /etc/fstab

# UUID=105fa8ff-bacd-491f-a6d0-f99865afc3d6 / ext4 defaults 1 1

# /dev/vdb /var/lib/container/ ext4 defaults 0 0

# /var/lib/container/kubelet /var/lib/kubelet none defaults,bind 0 0

# /var/lib/container/docker /var/lib/docker none defaults,bind 0 0

# /var/lib/container/nfs_dir /nfs_dir none defaults,bind 0 0

# Mount the /dev/vdb disk on /var/lib/container/

# Bind-mount the source directory /var/lib/container/kubelet onto /var/lib/kubelet

# Bind-mount the source directory /var/lib/container/docker onto /var/lib/docker

# Bind-mount the source directory /var/lib/container/nfs_dir onto /nfs_dir

## tree -L 1 /var/lib/container

# /var/lib/container

# ├── docker

# ├── kubelet

# └── lost+found

## tree -L 1 /var/lib/container

# /var/lib/container

# ├── docker

# ├── kubelet

# └── nfs_dir

# docker data dir

DOCKER_STORAGE_DIR="/var/lib/container/docker"

# s+regex+replacement+g (+ is the delimiter): find the line starting with DOCKER_STORAGE_DIR: and replace its value with "${DOCKER_STORAGE_DIR}"

sed -ri "s+^(DOCKER_STORAGE_DIR:).*$+\1 \"${DOCKER_STORAGE_DIR}\"+g" ${pwd}/clusters/${CLUSTER_NAME}/config.yml

# containerd data dir

CONTAINERD_STORAGE_DIR="/var/lib/container/containerd"

# Same for CONTAINERD_STORAGE_DIR: (the full key name must be matched; ^STORAGE_DIR: matches neither key)

sed -ri "s+^(CONTAINERD_STORAGE_DIR:).*$+\1 \"${CONTAINERD_STORAGE_DIR}\"+g" ${pwd}/clusters/${CLUSTER_NAME}/config.yml

# kubelet root dir

KUBELET_ROOT_DIR="/var/lib/container/kubelet"

sed -ri "s+^(KUBELET_ROOT_DIR:).*$+KUBELET_ROOT_DIR: \"${KUBELET_ROOT_DIR}\"+g" ${pwd}/clusters/${CLUSTER_NAME}/config.yml

if [[ $clustername != 'aws' ]]; then

# Runs only when $clustername is not 'aws'

# docker aliyun repo

REG_MIRRORS="https://pqbap4ya.mirror.aliyuncs.com"

# Replace the "REG_MIRRORS:" line

sed -ri "s+^REG_MIRRORS:.*$+REG_MIRRORS: \'[\"${REG_MIRRORS}\"]\'+g" ${pwd}/clusters/${CLUSTER_NAME}/config.yml

fi

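# [docker] trusted HTTP (insecure) registries

# As of 2024-07-26, 127.0.0.1/8 no longer appears in config.yml

sed -ri "s+127.0.0.1/8+${netnum}.0/24+g" ${pwd}/clusters/${CLUSTER_NAME}/config.yml

# REG_MIRRORS and 127.0.0.1/8 lived in kubeasz/roles/docker/defaults/main.yml in older versions; these settings were removed from version 3.0 on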

# disable dashboard auto install

sed -ri "s+^(dashboard_install:).*$+\1 \"no\"+g" ${pwd}/clusters/${CLUSTER_NAME}/config.yml

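# Prepare the certificate host name (with the example command this produces the domain test-cnk8s.boge.com; the deploy scripts sign the apiserver certificate for this domain, so later you can point the domain at any IP to reach kube-apiserver, which is more flexible)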

CLUSEER_WEBSITE="${CLUSTER_NAME}k8s.${domainName}"

# grep finds the line number of the ^MASTER_CERT_HOSTS: line: -w matches whole words, -n prints line numbers, and awk -F: '{print $1}' keeps the part before the first colon (the line number)

lb_num=$(grep -wn '^MASTER_CERT_HOSTS:' ${pwd}/clusters/${CLUSTER_NAME}/config.yml |awk -F: '{print $1}')

# Compute the line numbers: lb_num1 = lb_num + 1 and lb_num2 = lb_num + 2

lb_num1=$(expr ${lb_num} + 1)

lb_num2=$(expr ${lb_num} + 2)

# Replace the whole of line lb_num1 with - ${CLUSEER_WEBSITE}

sed -ri "${lb_num1}s+.*$+ - "${CLUSEER_WEBSITE}"+g" ${pwd}/clusters/${CLUSTER_NAME}/config.yml

# sed again, this time commenting out line lb_num2 by prefixing it with #

sed -ri "${lb_num2}s+(.*)$+#\1+g" ${pwd}/clusters/${CLUSTER_NAME}/config.yml

# MASTER_CERT_HOSTS:

# - test-cnk8s.boge.com

# # - "k8s.easzlab.io"

# #- "www.test.com"

# Maximum number of pods per node

MAX_PODS="120"

sed -ri "s+^(MAX_PODS:).*$+\1 ${MAX_PODS}+g" ${pwd}/clusters/${CLUSTER_NAME}/config.yml

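# calico: in a self-hosted data center where the nodes share one layer-2 network, IPIP can be turned off (CALICO_IPV4POOL_IPIP: "Never") for better network performance; a public-cloud VPC is a layer-3 network whose routers only know node addresses, not pod addresses, so keep CALICO_IPV4POOL_IPIP: "Always" to enable the IPIP tunnel

# Self-hosted data center (layer 2): devices communicate through switches and other layer-2 equipment, frames are forwarded by MAC address, and the hosts usually share one broadcast domain. Physical servers or virtualization hosts attached to the same switch (or switch stack) exchange data directly and quickly.

# Public-cloud VPC (layer 3): the provider gives you a private IP address space in which you deploy VMs and other resources, and traffic is forwarded by IP address and route tables. This lets one VPC span several availability zones, with its subnets connected by routing rules.

# Self-hosted example: the company's servers are connected by switches into one LAN; data moves quickly inside the LAN and never leaves the company network.

# Public-cloud example: the company creates a VPC on AWS with several subnets and routing rules, so VMs in different availability zones talk to each other over IP and connect securely to external networks.

# Commented out: the self-hosted (layer-2) mode is not enabled

#sed -ri "s+^(CALICO_IPV4POOL_IPIP:).*$+\1 \"Never\"+g" ${pwd}/clusters/${CLUSTER_NAME}/config.yml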

# Edit the hosts file for the binary installation

# clean old ip: delete the example IPs from the template

sed -ri '/192.168.1.1/d' ${pwd}/clusters/${CLUSTER_NAME}/hosts

sed -ri '/192.168.1.2/d' ${pwd}/clusters/${CLUSTER_NAME}/hosts

sed -ri '/192.168.1.3/d' ${pwd}/clusters/${CLUSTER_NAME}/hosts

sed -ri '/192.168.1.4/d' ${pwd}/clusters/${CLUSTER_NAME}/hosts

sed -ri '/192.168.1.5/d' ${pwd}/clusters/${CLUSTER_NAME}/hosts

# Enter the host octets for the etcd cluster

echo "enter etcd hosts here (example: 203 202 201) ↓"

read -p "" ipnums

for ipnum in `echo ${ipnums}`

do

echo $netnum.$ipnum

# Append the IP on the line after the matching [etcd header

sed -i "/\[etcd/a $netnum.$ipnum" ${pwd}/clusters/${CLUSTER_NAME}/hosts

done

# [etcd]

# 10.0.1.201

# 10.0.1.202

# 10.0.1.203

# Enter the host octets for the kube-master nodes

echo "enter kube-master hosts here (example: 202 201) ↓"

read -p "" ipnums

for ipnum in `echo ${ipnums}`

do

echo $netnum.$ipnum

sed -i "/\[kube_master/a $netnum.$ipnum" ${pwd}/clusters/${CLUSTER_NAME}/hosts

done

# [kube_master]

# 10.0.1.201

# 10.0.1.202

# Enter the host octets for the kube-node (worker) nodes

echo "enter kube-node hosts here (example: 204 203) ↓"

read -p "" ipnums

for ipnum in `echo ${ipnums}`

do

echo $netnum.$ipnum

sed -i "/\[kube_node/a $netnum.$ipnum" ${pwd}/clusters/${CLUSTER_NAME}/hosts

done

# Configure the container network interface (CNI)

case ${cni} in

flannel)

sed -ri "s+^CLUSTER_NETWORK=.*$+CLUSTER_NETWORK=\"${cni}\"+g" ${pwd}/clusters/${CLUSTER_NAME}/hosts

;;

calico)

sed -ri "s+^CLUSTER_NETWORK=.*$+CLUSTER_NETWORK=\"${cni}\"+g" ${pwd}/clusters/${CLUSTER_NAME}/hosts

;;

cilium)

sed -ri "s+^CLUSTER_NETWORK=.*$+CLUSTER_NETWORK=\"${cni}\"+g" ${pwd}/clusters/${CLUSTER_NAME}/hosts

;;

*)

# Make sure the CNI value is valid

echo "cni must be flannel, calico or cilium."

exit 11

esac

# Schedule periodic backups of the K8S etcd data

# https://github.com/easzlab/kubeasz/blob/master/docs/op/cluster_restore.md

if cat /etc/redhat-release &>/dev/null;then

if ! grep -w '94.backup.yml' /var/spool/cron/root &>/dev/null;then echo "00 00 * * * /usr/local/bin/ansible-playbook -i /etc/kubeasz/clusters/${CLUSTER_NAME}/hosts -e @/etc/kubeasz/clusters/${CLUSTER_NAME}/config.yml /etc/kubeasz/playbooks/94.backup.yml &> /dev/null; find /etc/kubeasz/clusters/${CLUSTER_NAME}/backup/ -type f -name '*.db' -mtime +3|xargs rm -f" >> /var/spool/cron/root;else echo exists ;fi

chown root.crontab /var/spool/cron/root

chmod 600 /var/spool/cron/root

rm -f /var/run/cron.reboot

service crond restart

else

# Check whether the backup job already exists: look for a line containing the word 94.backup.yml in /var/spool/cron/crontabs/root

# If grep finds no match it returns 1 and ! turns that into true, so the job is added only once

# ${CLUSTER_NAME} must be expanded now, while the line is written (cron does not know the variable); cron runs the line with /bin/sh (dash on Ubuntu), hence the POSIX redirection

if ! grep -w '94.backup.yml' /var/spool/cron/crontabs/root &> /dev/null; then

echo "00 00 * * * /usr/local/bin/ansible-playbook -i /etc/kubeasz/clusters/${CLUSTER_NAME}/hosts -e @/etc/kubeasz/clusters/${CLUSTER_NAME}/config.yml /etc/kubeasz/playbooks/94.backup.yml > /dev/null 2>&1; find /etc/kubeasz/clusters/${CLUSTER_NAME}/backup/ -type f -name '*.db' -mtime +3 | xargs rm -f" >> /var/spool/cron/crontabs/root

else

echo "exists"

fi

# Set the owner of /var/spool/cron/crontabs/root to root and its group to crontab

chown root:crontab /var/spool/cron/crontabs/root

# Make the file readable and writable by its owner (root) only

chmod 600 /var/spool/cron/crontabs/root

rm -f /var/run/crond.reboot

service cron restart

fi

#---------------------------------------------------------------------------------------------------

# Ready to start the installation

# Remove files not needed for the installation

rm -rf ${pwd}/{dockerfiles,docs,.gitignore,pics} &&\

find ${pwd}/ -name '*.md'|xargs rm -f

# read -p "Enter to continue deploy k8s to all nodes >>>" YesNobbb

read -p "Press Enter to continue deploying k8s to all nodes >>>"

# now start deploy k8s cluster

# 01 prepare to prepare CA/certs & kubeconfig & other system settings

# 02 etcd to setup the etcd cluster

# 03 container-runtime to setup the container runtime(docker or containerd)

# 04 kube-master to setup the master nodes

# 05 kube-node to setup the worker nodes

# 06 network to setup the network plugin

# 07 cluster-addon to setup other useful plugins

# 90 all to run 01~07 all at once

# 10 ex-lb to install external loadbalance for accessing k8s from outside

# 11 harbor to install a new harbor server or to integrate with an existed one

# Go to the /etc/kubeasz directory

cd ${pwd}/

# to prepare CA/certs & kubeconfig & other system settings

${pwd}/ezctl setup ${CLUSTER_NAME} 01

sleep 1

# to setup the etcd cluster

${pwd}/ezctl setup ${CLUSTER_NAME} 02

sleep 1

# Configure and install the container runtime (CRI)

# to setup the container runtime(docker or containerd)

case ${cri} in

containerd)

sed -ri "s+^CONTAINER_RUNTIME=.*$+CONTAINER_RUNTIME=\"${cri}\"+g" ${pwd}/clusters/${CLUSTER_NAME}/hosts

${pwd}/ezctl setup ${CLUSTER_NAME} 03

;;

docker)

sed -ri "s+^CONTAINER_RUNTIME=.*$+CONTAINER_RUNTIME=\"${cri}\"+g" ${pwd}/clusters/${CLUSTER_NAME}/hosts

${pwd}/ezctl setup ${CLUSTER_NAME} 03

;;

*)

echo "cri must be containerd or docker."

exit 11

esac

sleep 1

# to setup the master nodes

${pwd}/ezctl setup ${CLUSTER_NAME} 04

sleep 1

# to setup the worker nodes

${pwd}/ezctl setup ${CLUSTER_NAME} 05

sleep 1

# to setup the network plugin(flannel、calico...)

${pwd}/ezctl setup ${CLUSTER_NAME} 06

sleep 1

# to setup other useful plugins(metrics-server、coredns...)

${pwd}/ezctl setup ${CLUSTER_NAME} 07

sleep 1

# [Optional] OS-level security hardening for all cluster nodes https://github.com/dev-sec/ansible-os-hardening

#ansible-playbook roles/os-harden/os-harden.yml

#sleep 1

#cd `dirname ${software_packet:-/tmp}`

k8s_bin_path='/opt/kube/bin'

echo "------------------------- k8s version list ---------------------------"

${k8s_bin_path}/kubectl version

echo

echo "------------------------- All Healthy status check -------------------"

${k8s_bin_path}/kubectl get componentstatus

echo

echo "------------------------- k8s cluster info list ----------------------"

${k8s_bin_path}/kubectl cluster-info

echo

echo "------------------------- k8s all nodes list -------------------------"

${k8s_bin_path}/kubectl get node -o wide

echo

echo "------------------------- k8s all-namespaces's pods list ------------"

${k8s_bin_path}/kubectl get pod --all-namespaces

echo

echo "------------------------- k8s all-namespaces's service network ------"

${k8s_bin_path}/kubectl get svc --all-namespaces

echo

echo "------------------------- k8s welcome for you -----------------------"

echo

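# use k as a short alias for kubectl

# Define the alias k for kubectl. complete is a bash builtin that defines command-line completion rules; -F __start_kubectl names the completion function __start_kubectl, which is defined by kubectl's bash completion script (source <(kubectl completion bash)), so completion for k only works once that script is loaded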

echo "alias k=kubectl && complete -F __start_kubectl k" >> ~/.bashrc

# get dashboard url

${k8s_bin_path}/kubectl cluster-info|grep dashboard|awk '{print $NF}'|tee -a /root/k8s_results

# get login token

${k8s_bin_path}/kubectl -n kube-system describe secret $(${k8s_bin_path}/kubectl -n kube-system get secret | grep admin-user | awk '{print $1}')|grep 'token:'|awk '{print $NF}'|tee -a /root/k8s_results

echo

echo "you can view the dashboard URL and token again at >>> /root/k8s_results <<<"

echo ">>>>>>>>>>>>>>>>> You need to run [ reboot ] to restart all nodes <<<<<<<<<<<<<<<<<<<<"

#find / -type f -name "kubeasz*.tar.gz" -o -name "k8s_install_new.sh"|xargs rm -f

Script explanation

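In kubeasz 2.x, a multi-node high-availability cluster can be installed in two ways:

- 1. Plan and prepare as described in this article, define the node information in advance, then install the multi-node HA cluster directly

- 2. Deploy a single-node AllinOne cluster first, then scale it out into an HA cluster by adding nodes

The script packaged in this tutorial uses the first approach: the node information is defined in advance and the multi-node cluster is deployed directly.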
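The script takes its settings from seven positional arguments (listed below) and starts changing machines without checking them. A minimal sketch of a guard that could run first, assuming bash; check_args is a hypothetical name, and it only checks the argument count, the CRI and CNI values the script accepts later, and the shape of the network prefix.

```shell
#!/usr/bin/env bash
# Hypothetical argument guard for k8s_install_new.sh (not part of the original script).
# Expected order: rootpasswd netnum nethosts cri cni domainName clustername
check_args() {
  if [ "$#" -ne 7 ]; then
    echo "usage: $0 <rootpasswd> <netnum> <nethosts> <cri> <cni> <domainName> <clustername>" >&2
    return 1
  fi
  # Same values the script's case statements accept later on
  case "$4" in
    containerd|docker) ;;
    *) echo "cri must be containerd or docker" >&2; return 1 ;;
  esac
  case "$5" in
    flannel|calico|cilium) ;;
    *) echo "cni must be flannel, calico or cilium" >&2; return 1 ;;
  esac
  # netnum is only the first three octets, e.g. 10.0.1
  if ! [[ "$2" =~ ^[0-9]{1,3}\.[0-9]{1,3}\.[0-9]{1,3}$ ]]; then
    echo "netnum must look like 10.0.1" >&2
    return 1
  fi
}
```

It would be called as check_args "$@" || exit 1 before rootpasswd=$1. nethosts has to arrive as a single argument, which is why the example invocation escapes the spaces (201\ 202\ 203\ 204).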

Input parameters

# bash k8s_install_new.sh bogeit 10.0.1 201\ 202\ 203\ 204 containerd calico boge.com test-cn

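# shared root password of all servers

rootpasswd=$1

# network prefix of the IPs (first three octets)

netnum=$2

# host octets of the nodes

nethosts=$3

# container runtime

cri=$4

# network plugin

cni=$5

# domain name used for the apiserver certificate

domainName=$6

# cluster name

clustername=$7

Get the name of the offline installation package (kubeasz*.tar.gz) in the current directory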

if ls -1v ./kubeasz*.tar.gz &>/dev/null; then

# If so, assign the output of ls -1v ./kubeasz*.tar.gz (the file list sorted by version) to software_packet.

software_packet="$(ls -1v ./kubeasz*.tar.gz)"

else

# No file matches kubeasz*.tar.gz: set software_packet to an empty string.

software_packet=""

fi

-1 prints one file name per line

-v sorts file names naturally by version number (digit runs compared as numbers), e.g. file-1.9.tar.gz sorts before file-1.10.tar.gz
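If several kubeasz*.tar.gz files are present, software_packet holds all of their names, and the later tar xvf ${software_packet} call would treat the second name as a member to extract from the first archive. A sketch that keeps only the highest version, assuming GNU ls (for -v); latest_packet is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the newest kubeasz offline package in a directory.
# Requires GNU ls for -v (natural version order).
latest_packet() {
  local dir="${1:-.}"
  # -1: one name per line; -v: 3.6.10 sorts after 3.6.9; tail keeps the last (newest)
  ls -1v "$dir"/kubeasz*.tar.gz 2>/dev/null | tail -n 1
}
```

In the script this would read software_packet="$(latest_packet .)"; when no package exists the result is still an empty string, so the download branch keeps working.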

Upgrade the software on the deploy machine

Make sure iptables is installed (important)

if cat /etc/redhat-release &>/dev/null;then

yum update -y

else

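# Refresh the package index, install iptables, upgrade the installed packages and then run a dist-upgrade; if any step fails, try to repair interrupted installs or broken dependencies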

apt-get update && apt-get -y install iptables && apt-get upgrade -y && apt-get dist-upgrade -y

[ $? -ne 0 ] && apt-get -yf install

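fi

The script checks whether the file /etc/redhat-release exists. It is present on Red Hat-based distributions (such as CentOS and RHEL), so its presence identifies a Red Hat family system.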
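The /etc/redhat-release test only separates Red Hat family systems from everything else. A hedged alternative, assuming the distribution ships /etc/os-release (standard on current systemd-based distributions): read its ID and ID_LIKE fields. os_family is a hypothetical helper, and the file path is a parameter only so it can be tried on sample files.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print rhel, debian or unknown based on /etc/os-release.
os_family() {
  local file="${1:-/etc/os-release}"
  [ -r "$file" ] || { echo unknown; return; }
  # Source the file in a subshell so ID/ID_LIKE do not leak into the caller
  (
    . "$file"
    # Pad with spaces so each pattern matches a whole word
    case " ${ID:-} ${ID_LIKE:-} " in
      *" rhel "*|*" centos "*|*" fedora "*) echo rhel ;;
      *" debian "*|*" ubuntu "*) echo debian ;;
      *) echo unknown ;;
    esac
  )
}
```

Usage would be along the lines of if [ "$(os_family)" = rhel ]; then yum update -y; else ...; fi. Ubuntu reports ID_LIKE=debian and Rocky or Alma Linux report rhel in ID_LIKE, so derivatives fall into the right branch.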

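Configure the Python environment on the deploy machine

Install python2 and link it as python

# Check whether python2 is available (the version output is discarded)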

python2 -V &>/dev/null

# $? is the exit status (return value) of the previous command; -ne means "not equal to" 0

if [ $? -ne 0 ];then

# Red Hat family or Debian family?

if cat /etc/redhat-release &>/dev/null;then

yum install -y gcc openssl-devel bzip2-devel

wget https://www.python.org/ftp/python/2.7.16/Python-2.7.16.tgz

tar xzf Python-2.7.16.tgz

cd Python-2.7.16

./configure --enable-optimizations

make altinstall

# make altinstall installs the interpreter as /usr/local/bin/python2.7

ln -s /usr/local/bin/python2.7 /usr/bin/python

cd -

else

apt-get install -y python2.7 && ln -s /usr/bin/python2.7 /usr/bin/python

fi

fi

Install python3

python3 -V &>/dev/null

if [ $? -ne 0 ];then

if cat /etc/redhat-release &>/dev/null;then

yum install python3 -y

else

apt-get install -y python3

fi

fi

Configure a pip mirror inside China

# If grep finds "cn" in the cluster name it returns true (0), so the if ... then ... fi condition holds

if `echo $clustername |grep -iwE cn &>/dev/null`; then

mkdir -p ~/.pip

# Do not indent the heredoc body, or the indentation is written into the file as well

cat > ~/.pip/pip.conf <<CB

[global]

index-url = https://mirrors.aliyun.com/pypi/simple

[install]

trusted-host=mirrors.aliyun.com

CB

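fi

Whether to use the mirror is decided by looking for "cn" in the cluster name. -i: ignore case. -w: match the whole word only, so "cn" matches but a partial match such as "cnn" does not. -E: use extended regular expressions.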
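The same check can be written without backticks by relying on grep's exit status directly. A sketch; is_cn_cluster is a hypothetical helper. Note that for grep -w, letters, digits and the underscore count as word characters, so test-cn matches but test_cn does not.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed when the cluster name contains the word "cn".
is_cn_cluster() {
  # -q: no output, exit status only; -i: ignore case; -w: whole word
  echo "$1" | grep -qiw cn
}

# Usage in the script would be: if is_cn_cluster "$clustername"; then ... fi
```

With a hyphenated name such as test-cn the mirror is configured; a name such as test_cn would silently skip it.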

Install pip and the password-less login tool

# Link python2.7 as python

which python || ln -svf `which python2.7` /usr/bin/python

if cat /etc/redhat-release &>/dev/null; then

# Red Hat family detected

yum install git epel-release python-pip sshpass -y

# Install git, epel-release, python-pip and sshpass with yum; -y answers yes automatically

[ -f ./get-pip.py ] && python ./get-pip.py || {

# If get-pip.py exists in the current directory, run it with python; otherwise run the following:

wget https://bootstrap.pypa.io/pip/2.7/get-pip.py && python get-pip.py

# Download the pip installer for Python 2.7 with wget, then run it with python

}

else

# Not a Red Hat family system

if grep -Ew '20.04|22.04' /etc/issue &>/dev/null; then

# Ubuntu 20.04 or 22.04 detected

apt-get install sshpass -y

# Install sshpass with apt-get; -y answers yes automatically

else

# Other Ubuntu or Debian releases

apt-get install python-pip sshpass -y

# Install python-pip and sshpass with apt-get; -y answers yes automatically

fi

[ -f ./get-pip.py ] && python ./get-pip.py || {

# If get-pip.py exists in the current directory, run it with python; otherwise run the following:

wget https://bootstrap.pypa.io/pip/2.7/get-pip.py && python get-pip.py

# Download the pip installer for Python 2.7 with wget, then run it with python

}

fi

Upgrade pip

# pip 21.0 dropped Python 2 support, so stay below it

python -m pip install --upgrade "pip < 21.0"

Link pip

which pip || ln -svf `which pip` /usr/bin/pip

Install the ansible automation tool

pip -V

# Install or upgrade setuptools, the standard Python tool for building and installing packages

pip install setuptools -U

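# Install two Python packages, ansible and netaddr. --no-cache-dir turns off pip's download cache, so no stale cached version is reused.

pip install --no-cache-dir ansible netaddr

Set up password-less SSH to every machine and update its software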

# netnum: network prefix of the IPs

# nethosts: host octets, e.g. 201\ 202\ 203\ 204

# Loop over the host octets in nethosts

for host in `echo "${nethosts}"`

do

echo "============ ${netnum}.${host} ===========";

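# Check whether the current user is root

# USER is normally a predefined environment variable holding the name of the logged-in user; the system sets it at login

if [[ ${USER} == 'root' ]];then

# If running as root, generate a new RSA key pair unless one already exists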

[ ! -f /${USER}/.ssh/id_rsa ] &&\

ssh-keygen -t rsa -P '' -f /${USER}/.ssh/id_rsa

else

# For a regular user, generate a new RSA key pair unless one already exists

[ ! -f /home/${USER}/.ssh/id_rsa ] &&\

ssh-keygen -t rsa -P '' -f /home/${USER}/.ssh/id_rsa

fi

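# Copy the public key to the target host with sshpass and ssh-copy-id

# sshpass: supplies the SSH password non-interactively, so the script can run SSH commands without a password prompt.

# ssh-copy-id: appends the local public key (usually ~/.ssh/id_rsa.pub) to ~/.ssh/authorized_keys on the remote host, enabling password-less login.

# -o StrictHostKeyChecking=no: skips the host-key confirmation on the first connection, so the script is not blocked by an unknown host key.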

sshpass -p ${rootpasswd} ssh-copy-id -o StrictHostKeyChecking=no ${USER}@${netnum}.${host}

# Update the software on the other nodes

if cat /etc/redhat-release &>/dev/null;then

ssh -o StrictHostKeyChecking=no ${USER}@${netnum}.${host} "yum update -y"

else

ssh -o StrictHostKeyChecking=no ${USER}@${netnum}.${host} "apt-get update && apt-get upgrade -y && apt-get dist-upgrade -y"

[ $? -ne 0 ] && ssh -o StrictHostKeyChecking=no ${USER}@${netnum}.${host} "apt-get -yf install"

fi

done

Extract the offline installation package, or download the installation files

if [[ ${software_packet} == '' ]];then

# The package variable is empty (no local offline package)

if [[ ! -f ./ezdown ]];then

curl -C- -fLO --retry 3 https://github.com/easzlab/kubeasz/releases/download/${release}/ezdown

fi

# Download with the helper script

# In ./ezdown (current directory), find the line starting with K8S_BIN_VER= and replace the rest of the line with the value of ${k8s_ver}

sed -ri "s+^(K8S_BIN_VER=).*$+\1${k8s_ver}+g" ezdown

chmod +x ./ezdown

# ubuntu_22 to download package of Ubuntu 22.04

./ezdown -D && ./ezdown -P ubuntu_22 && ./ezdown -X

else

# A local installation package already exists

tar xvf ${software_packet} -C /etc/

# In ${pwd}/ezdown, find the line starting with K8S_BIN_VER= and replace the rest of the line with the value of ${k8s_ver}

sed -ri "s+^(K8S_BIN_VER=).*$+\1${k8s_ver}+g" ${pwd}/ezdown

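# Make ${pwd}/ezctl and ${pwd}/ezdown executable

chmod +x ${pwd}/{ezctl,ezdown}

# Make ezdown in the current directory executable

chmod +x ./ezdown

./ezdown -D # offline: installs docker, checks the local files (it should report that everything is already downloaded) and pushes the images to the local private registry

./ezdown -S # start the kubeasz container

fi

Initialize the k8s cluster configuration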

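ezctl new ${CLUSTER_NAME} starts a new k8s deployment under that cluster name, creating clusters/${CLUSTER_NAME}/hosts and config.yml from the templates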

CLUSTER_NAME="$clustername"

${pwd}/ezctl new ${CLUSTER_NAME}

if [[ $? -ne 0 ]];then

echo "cluster name [${CLUSTER_NAME}] already exists in ${pwd}/clusters/${CLUSTER_NAME}."

exit 1

fi

Enable offline installation in the k8s configuration

# The local installation package software_packet is not empty

if [[ ${software_packet} != '' ]];then

# Enable offline installation

# Offline installation docs: https://github.com/easzlab/kubeasz/blob/3.6.2/docs/setup/offline_install.md

sed -i 's/^INSTALL_SOURCE.*$/INSTALL_SOURCE: "offline"/g' ${pwd}/clusters/${CLUSTER_NAME}/config.yml

fi

Check ansible

ansible all -m ping

Edit the cluster configuration file config.yml

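Customizing cluster parameters

kubeasz configures a new cluster in two places (assuming the cluster is named xxxx):

clusters/xxxx/hosts (template: example/hosts.multi-node): node definitions, main parameters and global variables

clusters/xxxx/config.yml (template: example/config.yml): other parameters, and extra parameters for some components

clusters/xxxx/hosts (ansible hosts)

As described in the cluster planning and installation overview, this file mainly holds the node definitions and the main cluster-wide parameters.

Keep the configuration as simple and flexible as possible

Keep the configuration items as stable as possible

Common settings:

Container runtime: CONTAINER_RUNTIME="containerd"

Network plugin: CLUSTER_NETWORK="calico"

Container (pod) network CIDR: CLUSTER_CIDR="192.168.0.0/16"

NodePort range: NODE_PORT_RANGE="30000-32767"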
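These hosts settings are plain KEY="value" lines, which is why the script edits them with one sed per key (CLUSTER_NETWORK, CONTAINER_RUNTIME). A minimal sketch of a hypothetical helper that wraps the same sed pattern and refuses to run when the key is missing, so a typo does not silently change nothing. It assumes GNU sed (-r, -i) and a value without the + delimiter.

```shell
#!/usr/bin/env bash
# Hypothetical helper: set KEY="value" in a kubeasz hosts file.
set_hosts_var() {
  local key="$1" value="$2" file="$3"
  # Fail loudly instead of letting sed match nothing
  grep -q "^${key}=" "$file" || { echo "no ${key}= line in ${file}" >&2; return 1; }
  # Same idea as the script: keep "KEY=" and replace everything after it
  sed -ri "s+^(${key}=).*$+\1\"${value}\"+" "$file"
}
```

For example, set_hosts_var CLUSTER_NETWORK calico ${pwd}/clusters/${CLUSTER_NAME}/hosts would replace one branch of the CNI case statement.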

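clusters/xxxx/config.yml

This file mainly holds per-component custom settings, and the set of options may keep growing; a cluster can be created with the defaults without changing anything.

Configure the k8s cluster as needed; common examples:

Use offline system packages: INSTALL_SOURCE: "offline" (the packages must first be downloaded with ezdown -P)

Validity period of the CA certificate and of the certificates it signs

Let the apiserver accept a public domain name: MASTER_CERT_HOSTS

Which cluster-addon components to install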

...

Set the cluster name

sed -ri "s+^(CLUSTER_NAME:).*$+\1 \"${CLUSTER_NAME}\"+g" ${pwd}/clusters/${CLUSTER_NAME}/config.yml

Configure the data directories

mkdir -p /var/lib/container/{kubelet,docker,nfs_dir} /var/lib/{kubelet,docker} /nfs_dir

# docker data dir

DOCKER_STORAGE_DIR="/var/lib/container/docker"

# s+regex+replacement+g (+ is the delimiter): find the line starting with DOCKER_STORAGE_DIR: and replace its value with "${DOCKER_STORAGE_DIR}"

sed -ri "s+^(DOCKER_STORAGE_DIR:).*$+\1 \"${DOCKER_STORAGE_DIR}\"+g" ${pwd}/clusters/${CLUSTER_NAME}/config.yml

# containerd data dir

CONTAINERD_STORAGE_DIR="/var/lib/container/containerd"

# Same for CONTAINERD_STORAGE_DIR: (the full key name must be matched; ^STORAGE_DIR: matches neither key)

sed -ri "s+^(CONTAINERD_STORAGE_DIR:).*$+\1 \"${CONTAINERD_STORAGE_DIR}\"+g" ${pwd}/clusters/${CLUSTER_NAME}/config.yml

# kubelet root dir

KUBELET_ROOT_DIR="/var/lib/container/kubelet"

sed -ri "s+^(KUBELET_ROOT_DIR:).*$+KUBELET_ROOT_DIR: \"${KUBELET_ROOT_DIR}\"+g" ${pwd}/clusters/${CLUSTER_NAME}/config.yml

These directories live on the data disk mounted at the beginning:

The /dev/vdb disk is mounted on /var/lib/container/

The source directory /var/lib/container/kubelet is bind-mounted onto /var/lib/kubelet

The source directory /var/lib/container/docker is bind-mounted onto /var/lib/docker

The source directory /var/lib/container/nfs_dir is bind-mounted onto /nfs_dir
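A sketch that prints the /etc/fstab entries for this layout, assuming ext4 and the /var/lib/container base directory used in this article; fstab_entries is a hypothetical helper. It only prints, so the lines can be reviewed before they are appended to /etc/fstab and mounted with mount -a (a broken fstab can keep a node from booting).

```shell
#!/usr/bin/env bash
# Hypothetical helper: print fstab lines for the data disk and its bind mounts.
fstab_entries() {
  local dev="$1" base="${2:-/var/lib/container}"
  # The data disk itself, mounted on the base directory
  printf '%s %s ext4 defaults 0 0\n' "$dev" "$base"
  # Bind mounts: <base>/<name> shows up at the path the runtime expects
  printf '%s/kubelet /var/lib/kubelet none defaults,bind 0 0\n' "$base"
  printf '%s/docker /var/lib/docker none defaults,bind 0 0\n' "$base"
  printf '%s/nfs_dir /nfs_dir none defaults,bind 0 0\n' "$base"
}
```

Passing "UUID=$(blkid -s UUID -o value /dev/vdb)" instead of /dev/vdb keeps the entry valid even if the kernel assigns the disk a different device name after a reboot.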

Add a registry mirror inside China and a trusted local registry

if [[ $clustername != 'aws' ]]; then

# Runs only when $clustername is not 'aws'

# docker aliyun repo

REG_MIRRORS="https://pqbap4ya.mirror.aliyuncs.com"

# Replace the "REG_MIRRORS:" line

sed -ri "s+^REG_MIRRORS:.*$+REG_MIRRORS: \'[\"${REG_MIRRORS}\"]\'+g" ${pwd}/clusters/${CLUSTER_NAME}/config.yml

fi

# [docker] trusted HTTP (insecure) registries

# As of 2024-07-26, 127.0.0.1/8 no longer appears in config.yml

sed -ri "s+127.0.0.1/8+${netnum}.0/24+g" ${pwd}/clusters/${CLUSTER_NAME}/config.yml

Disable automatic installation of the dashboard

# disable dashboard auto install

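sed -ri "s+^(dashboard_install:).*$+\1 \"no\"+g" ${pwd}/clusters/${CLUSTER_NAME}/config.yml

Let the apiserver accept a public domain name

# Prepare the certificate host name (with the example command this produces the domain test-cnk8s.boge.com; the deploy scripts sign the apiserver certificate for this domain, so later you can point the domain at any IP to reach kube-apiserver, which is more flexible)

CLUSEER_WEBSITE="${CLUSTER_NAME}k8s.${domainName}"

# grep finds the line number of the ^MASTER_CERT_HOSTS: line: -w matches whole words, -n prints line numbers, and awk -F: '{print $1}' keeps the part before the first colon (the line number)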

lb_num=$(grep -wn '^MASTER_CERT_HOSTS:' ${pwd}/clusters/${CLUSTER_NAME}/config.yml |awk -F: '{print $1}')

# Compute the line numbers: lb_num1 = lb_num + 1 and lb_num2 = lb_num + 2

lb_num1=$(expr ${lb_num} + 1)

lb_num2=$(expr ${lb_num} + 2)

# Replace the whole of line lb_num1 with - ${CLUSEER_WEBSITE}

sed -ri "${lb_num1}s+.*$+ - "${CLUSEER_WEBSITE}"+g" ${pwd}/clusters/${CLUSTER_NAME}/config.yml

# sed again, this time commenting out line lb_num2 by prefixing it with #

sed -ri "${lb_num2}s+(.*)$+#\1+g" ${pwd}/clusters/${CLUSTER_NAME}/config.yml

Result:

MASTER_CERT_HOSTS:

 - test-cnk8s.boge.com

# - "k8s.easzlab.io"

#- "www.test.com"
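The line-number trick above can be wrapped in a function and tried on a copy of config.yml before touching the real one. A sketch, assuming GNU sed and the default template layout (MASTER_CERT_HOSTS: followed by two list items); set_first_cert_host is a hypothetical name. Unlike the script, it keeps the domain quoted in the YAML.

```shell
#!/usr/bin/env bash
# Hypothetical helper: put our domain on the first MASTER_CERT_HOSTS item
# and comment out the second one, as the script does with lb_num1/lb_num2.
set_first_cert_host() {
  local host="$1" file="$2" n
  # Line number of the first "MASTER_CERT_HOSTS:" line
  n=$(grep -n '^MASTER_CERT_HOSTS:' "$file" | head -n 1 | cut -d: -f1)
  [ -n "$n" ] || return 1
  # Replace the next line with our host, comment out the one after it
  sed -i "$((n + 1))s+.*+  - \"${host}\"+" "$file"
  sed -i "$((n + 2))s+^+#+" "$file"
}
```

Because it edits by position, it assumes nothing was inserted between MASTER_CERT_HOSTS: and its list items; that is also the assumption the script makes.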

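Maximum number of pods per node

MAX_PODS="120"

sed -ri "s+^(MAX_PODS:).*$+\1 ${MAX_PODS}+g" ${pwd}/clusters/${CLUSTER_NAME}/config.yml

[calico] IPIP tunnel mode

[calico] The IPIP tunnel mode can be Always, CrossSubnet or Never. Across subnets, use Always or CrossSubnet (on public clouds Always is the least effort; anything else requires changing the cloud's own network settings, see each provider's documentation).

CrossSubnet is a hybrid tunnel + BGP routing mode that can improve network performance; within a single subnet, Never is enough.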
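A sketch of setting the mode in config.yml while accepting only the three values listed above, assuming GNU sed; set_calico_ipip is a hypothetical helper and not part of kubeasz.

```shell
#!/usr/bin/env bash
# Hypothetical helper: set CALICO_IPV4POOL_IPIP to Always, CrossSubnet or Never.
set_calico_ipip() {
  local mode="$1" file="$2"
  case "$mode" in
    Always|CrossSubnet|Never) ;;
    *) echo "IPIP mode must be Always, CrossSubnet or Never" >&2; return 1 ;;
  esac
  # Keep the key, replace the quoted value
  sed -ri "s+^(CALICO_IPV4POOL_IPIP:).*$+\1 \"${mode}\"+" "$file"
}
```

For nodes that all sit in one subnet this would be set_calico_ipip Never ${pwd}/clusters/${CLUSTER_NAME}/config.yml; an invalid value leaves the file untouched.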

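# Default

CALICO_IPV4POOL_IPIP: "Always"

# calico: in a self-hosted data center where the nodes share one layer-2 network, IPIP can be turned off (CALICO_IPV4POOL_IPIP: "Never") for better network performance; a public-cloud VPC is a layer-3 network whose routers only know node addresses, not pod addresses, so keep CALICO_IPV4POOL_IPIP: "Always" to enable the IPIP tunnel

# Self-hosted data center (layer 2): devices communicate through switches and other layer-2 equipment, frames are forwarded by MAC address, and the hosts usually share one broadcast domain. Physical servers or virtualization hosts attached to the same switch (or switch stack) exchange data directly and quickly.

# Public-cloud VPC (layer 3): the provider gives you a private IP address space in which you deploy VMs and other resources, and traffic is forwarded by IP address and route tables. This lets one VPC span several availability zones, with its subnets connected by routing rules.

# Self-hosted example: the company's servers are connected by switches into one LAN; data moves quickly inside the LAN and never leaves the company network.

# Public-cloud example: the company creates a VPC on AWS with several subnets and routing rules, so VMs in different availability zones talk to each other over IP and connect securely to external networks.

# Commented out: the self-hosted (layer-2) mode is not enabled

#sed -ri "s+^(CALICO_IPV4POOL_IPIP:).*$+\1 \"Never\"+g" ${pwd}/clusters/${CLUSTER_NAME}/config.yml

Edit the node definition file hosts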

Part of the default configuration:

[etcd]

192.168.1.1

192.168.1.2

192.168.1.3

[kube_master]

192.168.1.1 k8s_nodename='master-01'

192.168.1.2 k8s_nodename='master-02'

192.168.1.3 k8s_nodename='master-03'

[kube_node]

192.168.1.4 k8s_nodename='worker-01'

192.168.1.5 k8s_nodename='worker-02'

Delete the old entries

# Delete the example IP lines (clean old ip)

sed -ri '/192.168.1.1/d' ${pwd}/clusters/${CLUSTER_NAME}/hosts

sed -ri '/192.168.1.2/d' ${pwd}/clusters/${CLUSTER_NAME}/hosts

sed -ri '/192.168.1.3/d' ${pwd}/clusters/${CLUSTER_NAME}/hosts

sed -ri '/192.168.1.4/d' ${pwd}/clusters/${CLUSTER_NAME}/hosts

sed -ri '/192.168.1.5/d' ${pwd}/clusters/${CLUSTER_NAME}/hosts

etcd cluster nodes

echo "enter etcd hosts here (example: 203 202 201) ↓"

read -p "" ipnums

for ipnum in `echo ${ipnums}`

do

echo $netnum.$ipnum

# Append the IP on the line after the matching [etcd header

sed -i "/\[etcd/a $netnum.$ipnum" ${pwd}/clusters/${CLUSTER_NAME}/hosts

done

Result:

[etcd]

10.0.1.201

10.0.1.202

10.0.1.203
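The etcd prompt accepts any number of hosts, while etcd needs an odd member count (see the node planning at the start of this chapter). A sketch of a check that could run right after read, assuming bash; etcd_quorum_report is a hypothetical helper that applies the usual majority rule, quorum = floor(n/2) + 1.

```shell
#!/usr/bin/env bash
# Hypothetical helper: report quorum and fault tolerance for the entered etcd hosts.
etcd_quorum_report() {
  local n=$#
  local quorum=$(( n / 2 + 1 ))
  echo "etcd members=${n} quorum=${quorum} tolerated_failures=$(( n - quorum ))"
  # 4 members tolerate 1 failure, the same as 3, so even counts only add cost
  if (( n % 2 == 0 )); then
    echo "warning: an even member count adds no fault tolerance" >&2
  fi
}
```

Called as etcd_quorum_report ${ipnums} (unquoted, so each octet is one argument), the example input 203 202 201 reports a quorum of 2 and one tolerated failure.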

k8s master nodes

echo "enter kube-master hosts here (example: 202 201) ↓"

read -p "" ipnums

for ipnum in `echo ${ipnums}`

do

echo $netnum.$ipnum

sed -i "/\[kube_master/a $netnum.$ipnum" ${pwd}/clusters/${CLUSTER_NAME}/hosts

done

Result:

[kube_master]

10.0.1.201

10.0.1.202

k8s worker nodes

echo "enter kube-node hosts here (example: 204 203) ↓"

read -p "" ipnums

for ipnum in `echo ${ipnums}`

do

echo $netnum.$ipnum

sed -i "/\[kube_node/a $netnum.$ipnum" ${pwd}/clusters/${CLUSTER_NAME}/hosts

done

Result:

[kube_node]

10.0.1.203

10.0.1.204
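The prompts ask for hosts in reverse order because sed's a command inserts directly below the [group] header, so each new IP lands above the previous one. A sketch that reproduces the loop on a scratch file, assuming GNU sed; add_hosts is a hypothetical wrapper, not part of the script.

```shell
#!/usr/bin/env bash
# Hypothetical wrapper around the script's loop: append each IP under [group].
add_hosts() {
  local group="$1" netnum="$2" file="$3"; shift 3
  local ipnum
  for ipnum in "$@"; do
    # "a" appends after the matching header, i.e. above earlier appends
    sed -i "/\[${group}/a ${netnum}.${ipnum}" "$file"
  done
}
```

Entering 203 202 201 therefore yields 201, 202, 203 in ascending order under [etcd], which matches the results shown above.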

Network plugin

# Configure the container network interface (CNI)

case ${cni} in

flannel)

sed -ri "s+^CLUSTER_NETWORK=.*$+CLUSTER_NETWORK=\"${cni}\"+g" ${pwd}/clusters/${CLUSTER_NAME}/hosts

;;

calico)

sed -ri "s+^CLUSTER_NETWORK=.*$+CLUSTER_NETWORK=\"${cni}\"+g" ${pwd}/clusters/${CLUSTER_NAME}/hosts

;;

cilium)

sed -ri "s+^CLUSTER_NETWORK=.*$+CLUSTER_NETWORK=\"${cni}\"+g" ${pwd}/clusters/${CLUSTER_NAME}/hosts

;;

*)

# Make sure the CNI value is valid

echo "cni must be flannel, calico or cilium."

exit 11

esac

CLUSTER_NETWORK="calico"

Scheduled backup of the etcd database

# Schedule periodic backups of the K8S etcd data

# https://github.com/easzlab/kubeasz/blob/master/docs/op/cluster_restore.md

if cat /etc/redhat-release &>/dev/null;then

if ! grep -w '94.backup.yml' /var/spool/cron/root &>/dev/null;then echo "00 00 * * * /usr/local/bin/ansible-playbook -i /etc/kubeasz/clusters/${CLUSTER_NAME}/hosts -e @/etc/kubeasz/clusters/${CLUSTER_NAME}/config.yml /etc/kubeasz/playbooks/94.backup.yml &> /dev/null; find /etc/kubeasz/clusters/${CLUSTER_NAME}/backup/ -type f -name '*.db' -mtime +3|xargs rm -f" >> /var/spool/cron/root;else echo exists ;fi

chown root.crontab /var/spool/cron/root

chmod 600 /var/spool/cron/root

rm -f /var/run/cron.reboot

service crond restart

else

# Check whether the backup job already exists: look for a line containing the word 94.backup.yml in /var/spool/cron/crontabs/root

# If grep finds no match it returns 1 and ! turns that into true, so the job is added only once

# ${CLUSTER_NAME} must be expanded now, while the line is written (cron does not know the variable); cron runs the line with /bin/sh (dash on Ubuntu), hence the POSIX redirection

if ! grep -w '94.backup.yml' /var/spool/cron/crontabs/root &> /dev/null; then

echo "00 00 * * * /usr/local/bin/ansible-playbook -i /etc/kubeasz/clusters/${CLUSTER_NAME}/hosts -e @/etc/kubeasz/clusters/${CLUSTER_NAME}/config.yml /etc/kubeasz/playbooks/94.backup.yml > /dev/null 2>&1; find /etc/kubeasz/clusters/${CLUSTER_NAME}/backup/ -type f -name '*.db' -mtime +3 | xargs rm -f" >> /var/spool/cron/crontabs/root

else

echo "exists"

fi

# Set the owner of /var/spool/cron/crontabs/root to root and its group to crontab

chown root:crontab /var/spool/cron/crontabs/root

# Make the file readable and writable by its owner (root) only

chmod 600 /var/spool/cron/crontabs/root

rm -f /var/run/crond.reboot

service cron restart

fi

Start the deployment

# Remove files not needed for the installation

rm -rf ${pwd}/{dockerfiles,docs,.gitignore,pics} &&\

find ${pwd}/ -name '*.md'|xargs rm -f

# now start deploy k8s cluster

# 01 prepare to prepare CA/certs & kubeconfig & other system settings

# 02 etcd to setup the etcd cluster

# 03 container-runtime to setup the container runtime(docker or containerd)

# 04 kube-master to setup the master nodes

# 05 kube-node to setup the worker nodes

# 06 network to setup the network plugin

# 07 cluster-addon to setup other useful plugins

# 90 all to run 01~07 all at once

# 10 ex-lb to install external loadbalance for accessing k8s from outside

# 11 harbor to install a new harbor server or to integrate with an existed one

Create certificates

# Go to the /etc/kubeasz directory

cd ${pwd}/

# to prepare CA/certs & kubeconfig & other system settings

${pwd}/ezctl setup ${CLUSTER_NAME} 01

sleep 1

Install etcd

# to setup the etcd cluster

${pwd}/ezctl setup ${CLUSTER_NAME} 02

sleep 1

Configure the container runtime

# Configure and install the container runtime (CRI)

# to setup the container runtime(docker or containerd)

case ${cri} in

containerd)

sed -ri "s+^CONTAINER_RUNTIME=.*$+CONTAINER_RUNTIME=\"${cri}\"+g" ${pwd}/clusters/${CLUSTER_NAME}/hosts

${pwd}/ezctl setup ${CLUSTER_NAME} 03

;;

docker)

sed -ri "s+^CONTAINER_RUNTIME=.*$+CONTAINER_RUNTIME=\"${cri}\"+g" ${pwd}/clusters/${CLUSTER_NAME}/hosts

${pwd}/ezctl setup ${CLUSTER_NAME} 03

;;

*)

echo "cri must be containerd or docker."

exit 11

esac

sleep 1

Install the master nodes

# to setup the master nodes

${pwd}/ezctl setup ${CLUSTER_NAME} 04

sleep 1

Install the worker nodes

# to setup the worker nodes

${pwd}/ezctl setup ${CLUSTER_NAME} 05

sleep 1

Install the network plugin

# to setup the network plugin(flannel、calico...)

${pwd}/ezctl setup ${CLUSTER_NAME} 06

sleep 1

Install the main add-ons

# to setup other useful plugins(metrics-server、coredns...)

${pwd}/ezctl setup ${CLUSTER_NAME} 07

sleep 1

[Optional] OS-level security hardening for all cluster nodes

# [Optional] OS-level security hardening for all cluster nodes https://github.com/dev-sec/ansible-os-hardening

#ansible-playbook roles/os-harden/os-harden.yml

#sleep 1

#cd `dirname ${software_packet:-/tmp}`

Print the k8s status after deployment

k8s_bin_path='/opt/kube/bin'

echo "------------------------- k8s version list ---------------------------"

${k8s_bin_path}/kubectl version

echo

echo "------------------------- All Healthy status check -------------------"

${k8s_bin_path}/kubectl get componentstatus

echo

echo "------------------------- k8s cluster info list ----------------------"

${k8s_bin_path}/kubectl cluster-info

echo

echo "------------------------- k8s all nodes list -------------------------"

${k8s_bin_path}/kubectl get node -o wide

echo

echo "------------------------- k8s all-namespaces's pods list ------------"

${k8s_bin_path}/kubectl get pod --all-namespaces

echo

echo "------------------------- k8s all-namespaces's service network ------"

${k8s_bin_path}/kubectl get svc --all-namespaces

echo

echo "------------------------- k8s welcome for you -----------------------"

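echo

Set an alias for kubectl

# use k as a short alias for kubectl

# Define the alias k for kubectl. complete is a bash builtin that defines command-line completion rules; -F __start_kubectl names the completion function __start_kubectl, which is defined by kubectl's bash completion script (source <(kubectl completion bash)), so completion for k only works once that script is loaded

echo "alias k=kubectl && complete -F __start_kubectl k" >> ~/.bashrc

Dashboard login information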

# get dashboard url

${k8s_bin_path}/kubectl cluster-info|grep dashboard|awk '{print $NF}'|tee -a /root/k8s_results

# get login token

${k8s_bin_path}/kubectl -n kube-system describe secret $(${k8s_bin_path}/kubectl -n kube-system get secret | grep admin-user | awk '{print $1}')|grep 'token:'|awk '{print $NF}'|tee -a /root/k8s_results

echo

echo "you can view the dashboard URL and token again at >>> /root/k8s_results <<<"

Reboot

echo ">>>>>>>>>>>>>>>>> You need to run [ reboot ] to restart all nodes <<<<<<<<<<<<<<<<<<<<"

#find / -type f -name "kubeasz*.tar.gz" -o -name "k8s_install_new.sh"|xargs rm -f

Run the k8s deployment script

node-1 to node-4 are deployed together; node-5 is added later.

https://blog.csdn.net/weixin_46887489/article/details/133894707

Copy the offline installation package and the following files to node 201:

kubeasz-ubuntu-22.04.3-v1.27.5.tar.gz

k8s_install_new.sh

get-pip.py

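ezdown

# Note: if you deploy outside China with a cluster name that does not contain aws, you can comment out this part of the install script to avoid slow pip installs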

if ! `echo $clustername |grep -iwE aws &>/dev/null`; then

mkdir -p ~/.pip

cat > ~/.pip/pip.conf <<CB

[global]

index-url = https://mirrors.aliyun.com/pypi/simple

[install]

trusted-host=mirrors.aliyun.com

CB

fi

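# Running the deploy command as-is performs an online installation. For an offline environment, install once on a local VM, pack the /etc/kubeasz directory into kubeasz.tar.gz, then put the script and this archive together on the machine without network access and run the same command: that is an offline installation.

# Run

# Script arguments, in order:

# shared root password of all servers, network prefix, host octets, container runtime, K8S network plugin, domain name, K8S cluster name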

bash k8s_install_new.sh bogeit 10.0.1 201\ 202\ 203 containerd calico boge.com test-cn

bash k8s_install_new.sh bogeit 10.0.1 201\ 202\ 203\ 204 containerd calico boge.com test-cn# 脚本基本是自动化的,除了下面几处提示按要求复制粘贴下,再回车即可

# 输入准备创建ETCD集群的主机位,复制 203 202 201 粘贴并回车

echo "enter etcd hosts here (example: 203 202 201) ↓"

# 输入准备创建KUBE-MASTER集群的主机位,复制 202 201 粘贴并回车

echo "enter kube-master hosts here (example: 202 201) ↓"

# Enter the host bits for the KUBE-NODE group: copy 204 203, paste it and press Enter

echo "enter kube-node hosts here (example: 204 203) ↓"

# The script then asks whether to continue; if everything looks right, just press Enter

Enter to continue deploy k8s to all nodes >>>
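At these prompts you type only host bits; the script joins them with the network prefix from the command line (the troubleshooting log later shows 204 203 echoed back as 10.0.1.204 and 10.0.1.203). A minimal sketch of that expansion, plus an odd-count check for etcd based on the Raft majority rule from the architecture section (n members need floor(n/2)+1 healthy ones). Both function names are hypothetical and not taken from k8s_install_new.sh.

```shell
# Hypothetical helpers (not taken from k8s_install_new.sh).

# expand_hosts PREFIX "BITS": print PREFIX.BIT for each host bit, one per line
expand_hosts() {
    local prefix=$1 bit
    # $2 is left unquoted on purpose so "203 202 201" splits into separate words
    for bit in $2; do
        printf '%s.%s\n' "$prefix" "$bit"
    done
}

# etcd_fault_tolerance "BITS": print how many etcd members may fail while a
# majority (floor(n/2)+1) stays healthy; fail if the member count is zero or even
etcd_fault_tolerance() {
    set -- $1    # split the host bits into positional parameters
    local n=$#
    if [ "$n" -eq 0 ] || [ $((n % 2)) -eq 0 ]; then
        echo "etcd needs an odd number of members, got $n" >&2
        return 1
    fi
    echo $((n - (n / 2 + 1)))
}

# Usage:
# expand_hosts 10.0.1 "203 202 201"    # prints 10.0.1.203, 10.0.1.202, 10.0.1.201
# etcd_fault_tolerance "203 202 201"   # prints 1
```

With the three etcd hosts used here, one member may fail; five would allow two, while four still allows only one, which is why the check rejects even counts.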

# After installation, reload the environment so kubectl command completion takes effect

. ~/.bashrc

Fixing errors with kubectl Tab completion

https://www.cnblogs.com/albert919/p/16677978.html

https://blog.csdn.net/shileilei1988/article/details/117674615

Typing kubectl ge and pressing Tab produces:

kubectl ge-bash: _get_comp_words_by_ref: command not found

Install bash-completion:

apt-get install bash-completion

# If this reports errors, run apt --fix-broken install as suggested

vim ~/.bashrc  # add the line: source <(kubectl completion bash)

source ~/.bashrc

Or:

echo 'source <(kubectl completion bash)' >> ~/.bashrc

source ~/.bashrc
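Both variants append with >>, so running them twice leaves duplicate lines in ~/.bashrc. A minimal idempotent alternative, sketched with a hypothetical append_once function, adds a line only when the file does not already contain it verbatim.

```shell
# Hypothetical helper: append LINE to FILE only if FILE lacks that exact line.
append_once() {
    local line=$1 file=$2
    touch "$file"
    # -q: exit status only, -x: match the whole line, -F: fixed string (no regex)
    grep -qxF -- "$line" "$file" || printf '%s\n' "$line" >> "$file"
}

# Usage with the two lines used in this section:
# append_once 'source <(kubectl completion bash)' ~/.bashrc
# append_once 'alias k=kubectl && complete -F __start_kubectl k' ~/.bashrc
```

The -F flag matters here because lines like source <(kubectl completion bash) contain characters that grep would otherwise treat as regex syntax.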

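# If it still does not work, run the following (try closing the window and reconnecting first)

source /usr/share/bash-completion/bash_completion

This command loads Bash's programmable completion framework into the current shell session; that file defines helper functions such as _get_comp_words_by_ref, the one the error above reports as missing.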

Destroying the cluster

root@node-1:/etc/kubeasz# ./ezctl destroy test-cn

Check the cluster status

kubectl get node -o wide

kubectl get pod -A

root@node-1:~# kubectl get node -o wide

NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME

10.0.1.201 Ready,SchedulingDisabled master 18m v1.27.5 10.0.1.201 <none> Ubuntu 22.04.4 LTS 5.15.0-105-generic containerd://1.6.23

10.0.1.202 Ready,SchedulingDisabled master 18m v1.27.5 10.0.1.202 <none> Ubuntu 22.04.4 LTS 5.15.0-105-generic containerd://1.6.23

10.0.1.203 Ready node 17m v1.27.5 10.0.1.203 <none> Ubuntu 22.04.4 LTS 5.15.0-105-generic containerd://1.6.23
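To scan listings like the node list above and the pod list that follows for problems, a small awk filter over kubectl's default table output helps. A minimal sketch with hypothetical function names, reading the table on stdin. As in the output above, the masters show Ready,SchedulingDisabled, so any STATUS starting with Ready counts as healthy; note that pods of finished Jobs (STATUS Completed) would also be reported by the pod filter.

```shell
# Hypothetical filters over kubectl table output (read from stdin).

# not_ready_nodes: print nodes whose STATUS does not start with "Ready"
# ("Ready,SchedulingDisabled" on the cordoned masters counts as ready)
not_ready_nodes() {
    awk 'NR > 1 && $2 !~ /^Ready/ { print $1 }'
}

# unhealthy_pods: for "kubectl get pod -A" output, print NAMESPACE/NAME of pods
# that are not Running or whose READY column is x/y with x != y
unhealthy_pods() {
    awk 'NR > 1 {
        split($3, r, "/")
        if ($4 != "Running" || r[1] != r[2]) print $1 "/" $2
    }'
}

# Usage:
# kubectl get node | not_ready_nodes
# kubectl get pod -A | unhealthy_pods
```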

root@node-1:~# kubectl get pod -A

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system calico-kube-controllers-67c67b9b5f-wjb7l 1/1 Running 0 18m

kube-system calico-node-4x4g8 1/1 Running 0 18m

kube-system calico-node-k2kw6 1/1 Running 0 18m

kube-system calico-node-kd2hj 1/1 Running 0 18m

kube-system coredns-65bc7b648d-4txb7 1/1 Running 0 16m

kube-system metrics-server-56774d6954-lgn69 1/1 Running 0 16m

kube-system node-local-dns-9p4pd 1/1 Running 0 16m

kube-system node-local-dns-kcdtq 1/1 Running 0 16m

kube-system node-local-dns-rfwkm 1/1 Running 0 16m

root@node-1:~#

containerd test

# ctr namespace ls

NAME LABELS

k8s.io

# containerd requires a namespace when importing images; use k8s.io, the namespace Kubernetes uses

docker pull nginx:1.21.6 && docker save nginx:1.21.6 > nginx-1.21.6.tar

# ctr -n k8s.io images import nginx-1.21.6.tar

# List the imported images (filtered for nginx)

ctr -n k8s.io images ls | grep nginx

Troubleshooting

cron

enter kube-node hosts here (example: 204 203) ↓

204 203

10.0.1.204

10.0.1.203

k8s_install_new.sh: line 284: /var/spool/cron/crontabs/root: No such file or directory

chown: invalid user: ‘root.crontab’

chmod: cannot access '/var/spool/cron/crontabs/root': No such file or directory

Failed to restart cron.service: Unit cron.service not found.

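Enter to continue deploy k8s to all nodes >>>

"Failed to restart cron.service: Unit cron.service not found." means the cron service is probably not installed, or its service name differs from the default. The earlier errors point the same way: on Ubuntu the cron package creates /var/spool/cron/crontabs and the crontab group, so chown most likely rejects root.crontab because that group does not exist yet.

Check whether cron is installed and whether its service is running.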
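On a minimal install it is worth checking for such tools before running the script. A minimal sketch with a hypothetical function name; crontab and iptables are the commands implicated by the errors in this section. Run it as root, since iptables lives in an sbin directory that a normal user's PATH may not include.

```shell
# Hypothetical preflight helper: print every command name that is not found in PATH.
# Returns non-zero if at least one command is missing.
missing_cmds() {
    local cmd rc=0
    for cmd in "$@"; do
        if ! command -v "$cmd" >/dev/null 2>&1; then
            echo "$cmd"
            rc=1
        fi
    done
    return $rc
}

# Usage on each node before running k8s_install_new.sh:
# missing_cmds crontab iptables || echo "install the missing packages first (e.g. apt-get install cron iptables)"
```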

calico-node

FAILED - RETRYING: 轮询等待calico-node 运行 (2 retries left).

FAILED - RETRYING: 轮询等待calico-node 运行 (1 retries left).

FAILED - RETRYING: 轮询等待calico-node 运行 (1 retries left).

FAILED - RETRYING: 轮询等待calico-node 运行 (1 retries left).

FAILED - RETRYING: 轮询等待calico-node 运行 (1 retries left).

TASK [calico : 轮询等待calico-node 运行] **************************************************************************************

fatal: [10.0.1.202]: FAILED! => {"attempts": 15, "changed": true, "cmd": "/etc/kubeasz/bin/kubectl get pod -n kube-system -o wide|grep 'calico-node'|grep ' 10.0.1.202 '|awk '{print $3}'", "delta": "0:00:00.082782", "end": "2024-05-19 12:22:30.286262", "msg": "", "rc": 0, "start": "2024-05-19 12:22:30.203480", "stderr": "", "stderr_lines": [], "stdout": "Init:0/2", "stdout_lines": ["Init:0/2"]}

...ignoring

fatal: [10.0.1.201]: FAILED! => {"attempts": 15, "changed": true, "cmd": "/etc/kubeasz/bin/kubectl get pod -n kube-system -o wide|grep 'calico-node'|grep ' 10.0.1.201 '|awk '{print $3}'", "delta": "0:00:00.073667", "end": "2024-05-19 12:22:30.557955", "msg": "", "rc": 0, "start": "2024-05-19 12:22:30.484288", "stderr": "", "stderr_lines": [], "stdout": "Init:0/2", "stdout_lines": ["Init:0/2"]}

...ignoring

fatal: [10.0.1.203]: FAILED! => {"attempts": 15, "changed": true, "cmd": "/etc/kubeasz/bin/kubectl get pod -n kube-system -o wide|grep 'calico-node'|grep ' 10.0.1.203 '|awk '{print $3}'", "delta": "0:00:00.088652", "end": "2024-05-19 12:22:31.006400", "msg": "", "rc": 0, "start": "2024-05-19 12:22:30.917748", "stderr": "", "stderr_lines": [], "stdout": "Init:0/2", "stdout_lines": ["Init:0/2"]}

...ignoring

fatal: [10.0.1.204]: FAILED! => {"attempts": 15, "changed": true, "cmd": "/etc/kubeasz/bin/kubectl get pod -n kube-system -o wide|grep 'calico-node'|grep ' 10.0.1.204 '|awk '{print $3}'", "delta": "0:00:00.072507", "end": "2024-05-19 12:22:31.217710", "msg": "", "rc": 0, "start": "2024-05-19 12:22:31.145203", "stderr": "", "stderr_lines": [], "stdout": "Init:0/2", "stdout_lines": ["Init:0/2"]}

...ignoring

kubectl -n kube-system describe pod calico-node-58wmr

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal Scheduled 7m4s default-scheduler Successfully assigned kube-system/calico-node-j4thf to 10.0.1.203

Warning FailedCreatePodSandBox 4m32s (x12 over 7m4s) kubelet Failed to create pod sandbox: rpc error: code = Unknown desc = failed to get sandbox image "easzlab.io.local:5000/easzlab/pause:3.9": failed to pull image "easzlab.io.local:5000/easzlab/pause:3.9": failed to pull and unpack image "easzlab.io.local:5000/easzlab/pause:3.9": failed to resolve reference "easzlab.io.local:5000/easzlab/pause:3.9": failed to do request: Head "http://easzlab.io.local:5000/v2/easzlab/pause/manifests/3.9": dial tcp 10.0.1.201:5000: connect: connection refused

Warning DNSConfigForming 112s (x24 over 7m4s) kubelet Nameserver limits were exceeded, some nameservers have been omitted, the applied nameserver line is: 223.5.5.5 114.114.114.114 223.5.5.5

Image: easzlab.io.local:5000/calico/cni:v3.24.6

Solution:

Destroy the cluster and reinstall

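root@node-1:/etc/kubeasz# ./ezctl destroy test-cn

destroy: the ezctl subcommand that performs the destroy operation.

test-cn: the name of the Kubernetes cluster to destroy; kubeasz keeps its configuration under /etc/kubeasz/clusters/test-cn/.

When installing the way Boge does, you may hit the error "FAILED - RETRYING: 轮询等待calico-node 运行 (15 retries left)" (the task name means "poll until calico-node is running"). Digging further shows an image pull failure, and further still that the images we need were never loaded at all. The script runs "./ezdown -D", which starts Docker and loads the images, and Docker's systemd unit reports the error "ExecStartPost=/sbin/iptables -I FORWARD -s 0.0.0.0/0 -j ACCEPT (code=exited, status=203/EXEC)". That error is the root of everything: status 203/EXEC means systemd could not execute /sbin/iptables, because the OS was originally installed with the minimal option and iptables is missing. With Docker down, the local registry never comes up, which is consistent with the "connection refused" from easzlab.io.local:5000 in the events above. Fix:

sudo apt-get update

sudo apt-get upgrade

sudo apt-get install iptables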
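Because the unit calls the absolute path /sbin/iptables, a useful preflight check tests that exact path rather than searching PATH. A minimal sketch with a hypothetical function name; it only reports, and installing the package is still the fix described above.

```shell
# Hypothetical helper: print each path that is not an existing executable file.
# Returns non-zero if at least one path fails the check.
non_executable_paths() {
    local p rc=0
    for p in "$@"; do
        # -f and -x follow symlinks, e.g. /sbin/iptables -> /etc/alternatives/iptables
        if [ ! -f "$p" ] || [ ! -x "$p" ]; then
            echo "$p"
            rc=1
        fi
    done
    return $rc
}

# Usage: check the binary from Docker's ExecStartPost line
# non_executable_paths /sbin/iptables || apt-get install -y iptables
```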

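Run docker ps on the master node and check whether a private registry container is running. If not, run ./ezdown -D manually and see whether the images load; if they do not, look at the error it reports. If you want the simple route, rebuild the system template so it includes the missing packages. (I have not reinstalled the system myself; I fixed the problem above exactly as described, so check whether your system has other issues as well.)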

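Check the Ansible log during installation; if it shows errors, fix them before going on to the next step. In your case, you can destroy the cluster and reinstall.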

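I suspect the script does install it: after it finished, iptables was present. It may be an ordering issue, i.e. as mentioned in the video, moving on to the next step before the script had finished.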

That is indeed the problem: on a minimally installed system Docker needs iptables, otherwise Docker fails to start and everything after it fails.
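The failing kubeasz task checks each node with kubectl get pod -n kube-system -o wide | grep 'calico-node' | grep ' <node IP> ' | awk '{print $3}' and, per the log, gives up after 15 attempts. A minimal sketch of the same poll-until-Running idea for manual checks after a fix; calico_status, retry_until and calico_running are hypothetical names, and the 10-second delay in the usage line is an arbitrary assumption.

```shell
# Hypothetical helpers for manually polling calico-node, modelled on the kubeasz task.

# calico_status NODE_IP: read "kubectl get pod -n kube-system -o wide" output on stdin
# and print the STATUS (3rd column) of the calico-node pod whose line contains NODE_IP
calico_status() {
    awk -v ip="$1" '$1 ~ /^calico-node-/ {
        for (i = 4; i <= NF; i++) if ($i == ip) { print $3; break }
    }'
}

# retry_until ATTEMPTS DELAY CMD...: run CMD until it succeeds, at most ATTEMPTS
# times, sleeping DELAY seconds between failed tries
retry_until() {
    local attempts=$1 delay=$2 i=1
    shift 2
    while :; do
        "$@" && return 0
        [ "$i" -ge "$attempts" ] && return 1
        echo "attempt $i/$attempts failed, retrying in ${delay}s" >&2
        sleep "$delay"
        i=$((i + 1))
    done
}

# Usage (on the deploy node):
# calico_running() {
#     [ "$(kubectl get pod -n kube-system -o wide | calico_status "$1")" = "Running" ]
# }
# retry_until 15 10 calico_running 10.0.1.202
```

Matching the IP as a whole field, rather than with grep, avoids false hits such as 10.0.1.20 matching inside 10.0.1.201.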

Docker registry mirrors

The mirror configuration used by the automated deployment lives in a template:

cat /etc/kubeasz/roles/docker/templates/daemon.json.j2

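vim /etc/kubeasz/roles/docker/templates/daemon.json.j2

Docker images

easzlab.io.local:5000 is the local image registry started during the binary installation (note the registry:2 image in the list below).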

# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

docker.elastic.co/elasticsearch/elasticsearch 8.11.3 792fab0c0bd8 6 months ago 1.43GB

easzlab.io.local:5000/elasticsearch/elasticsearch 8.11.3 792fab0c0bd8 6 months ago 1.43GB

easzlab.io.local:5000/eck/eck-operator 2.10.0 bd9bc5fa9eed 7 months ago 73.1MB

docker.elastic.co/eck/eck-operator 2.10.0 bd9bc5fa9eed 7 months ago 73.1MB

easzlab/kubeasz 3.6.2 6ea48e5ea9d3 9 months ago 157MB

coredns/coredns 1.11.1 cbb01a7bd410 10 months ago 59.8MB

easzlab.io.local:5000/coredns/coredns 1.11.1 cbb01a7bd410 10 months ago 59.8MB

registry 2 0030ba3d620c 10 months ago 24.1MB